In this article, we’ll be reviewing some of the improvements to CPU usage in Wwise 2022.1’s runtime:

- Performance Improvement Highlights

- Improvements to CPU Profiling

- Revamped CPU Scheduling

- Improved Parallelism and Concurrency

Performance Improvements

First, we want to showcase some general performance improvements in Wwise 2022.1:

Improvements to RTPC Management

Something that many developers have seen when using Wwise on large-scale projects is the cost of RTPC updates. These tend to become disproportionately expensive as projects grow in size, necessitating various workarounds to get performance under control. Examples include restructuring SoundBanks to reduce the number of loaded RTPCs; very granular, selective, and manual management of the game objects that are created in Wwise; or leaving the issue unaddressed and attempting to reduce CPU usage elsewhere.

One major objective for Wwise 2022.1 was to address this much more directly, so that it is far less of an issue and the performance cost is far more predictable.

Before, whenever Wwise processed a single RTPC update, it would go over each active RTPC subscription and determine whether that update was relevant before actually applying it. Typically, each game object (GO) has multiple subscriptions. The most notable impact was that actions like updating all RTPCs based on relative distances from a listener would incur a cost that grew quadratically as the number of GOs in the system increased.

Now, much of that work is skipped. Instead, the list of relevant RTPC subscriptions is determined ahead of time, skipping many of the expensive searches that RTPC updates would otherwise cause. This should drastically reduce the amount of time spent on RTPC updates, and make their performance much more predictable.
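
To make the difference concrete, here is a minimal Python sketch of the two lookup strategies. It is not Wwise's implementation: the `Subscription` type, the `"Distance"` RTPC, and all function names are hypothetical, and the real engine's data structures are not described in this article. The sketch only counts how many subscriptions each strategy has to visit when every game object receives one distance update.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscription:
    rtpc_id: str
    game_object: int
    target: str  # hypothetical: the property driven by the RTPC


def apply_by_scanning(subscriptions, rtpc_id, game_object):
    """Old behaviour as described above: test every active subscription."""
    visited = 0
    matched = []
    for sub in subscriptions:
        visited += 1
        if sub.rtpc_id == rtpc_id and sub.game_object == game_object:
            matched.append(sub)
    return matched, visited


def build_index(subscriptions):
    """Determine the relevant subscriptions ahead of time."""
    index = defaultdict(list)
    for sub in subscriptions:
        index[(sub.rtpc_id, sub.game_object)].append(sub)
    return index


def apply_by_index(index, rtpc_id, game_object):
    """New behaviour in spirit: only the relevant subscriptions are visited."""
    matched = index.get((rtpc_id, game_object), [])
    return matched, len(matched)


def total_visits(num_objects, subs_per_object=2):
    """Subscriptions visited when each game object gets one RTPC update."""
    subs = [
        Subscription("Distance", go, f"prop{i}")
        for go in range(num_objects)
        for i in range(subs_per_object)
    ]
    index = build_index(subs)
    scan = sum(apply_by_scanning(subs, "Distance", go)[1] for go in range(num_objects))
    indexed = sum(apply_by_index(index, "Distance", go)[1] for go in range(num_objects))
    return scan, indexed
```

In this toy model, doubling the number of game objects from 100 to 200 quadruples the visits for the scanning approach (20,000 to 80,000) but only doubles them for the indexed approach (200 to 400).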

The performance benefits may be such that, for developers who had to put significant effort into working around this issue, it may be worth investigating whether those workarounds are still required, so as to simplify your game’s interaction with Wwise.

Improvements to built-in LPF/HPF

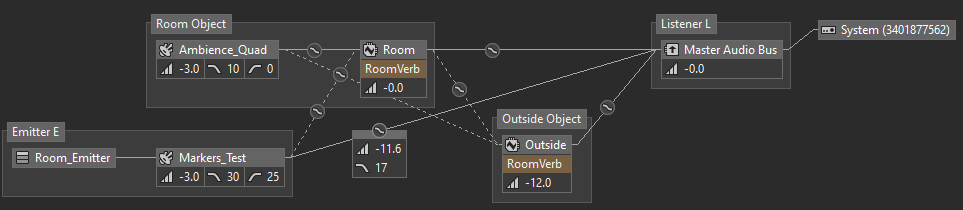

Next, we have significant improvements to the built-in low-pass filter (LPF) and high-pass filter (HPF) in the voice pipeline. These are very common operations, as a lot of designers tend to apply LPF or HPFs to many voices, or incorporate them as a part of their mixing to audio buses or aux buses, typically as a part of attenuation curves. The example below shows both cases in action – note, for example, the LPF applied during the mixing of Markers_Test to the Master Audio Bus, in addition to the LPF and HPF applied during initial voice processing.

This operation has historically been such a CPU hotspot that many developers who have done instruction sampling of their game as a whole, let alone of Wwise specifically, have likely seen it come up in their profiles.

In order to address this, we’ve rewritten a large portion of the core mixing and filtering operations that our audio processing pipeline performs. A particular emphasis was put on the fact that developers are using these features on dozens or hundreds of voices at a time, and often using both LPF and HPF together. The optimizations also took the design of modern CPUs into consideration: we expect to see improvements not just on high-end PC and console CPUs, but on modern Apple Silicon and ARM Cortex chips as well!

The performance benefits vary significantly based on the CPU and usage scenarios, but in some of our internal benchmarks, when comparing Wwise 2021.1.0 against Wwise 2022.1.0, on last-generation consoles we measured up to a 2x improvement in total throughput performance of the effect, and on current-gen consoles we measured a 5x improvement in throughput performance.

It may be particularly noteworthy that, for any developers who were focused on last-generation development on Wwise 2021.1, or earlier, and are moving to focus on current-gen development on Wwise 2022.1, we expect up to a 10x improvement to throughput performance of this feature!

Improvements to Spatial Audio Performance

We also have some major improvements to the performance of the spatial audio component of Wwise.

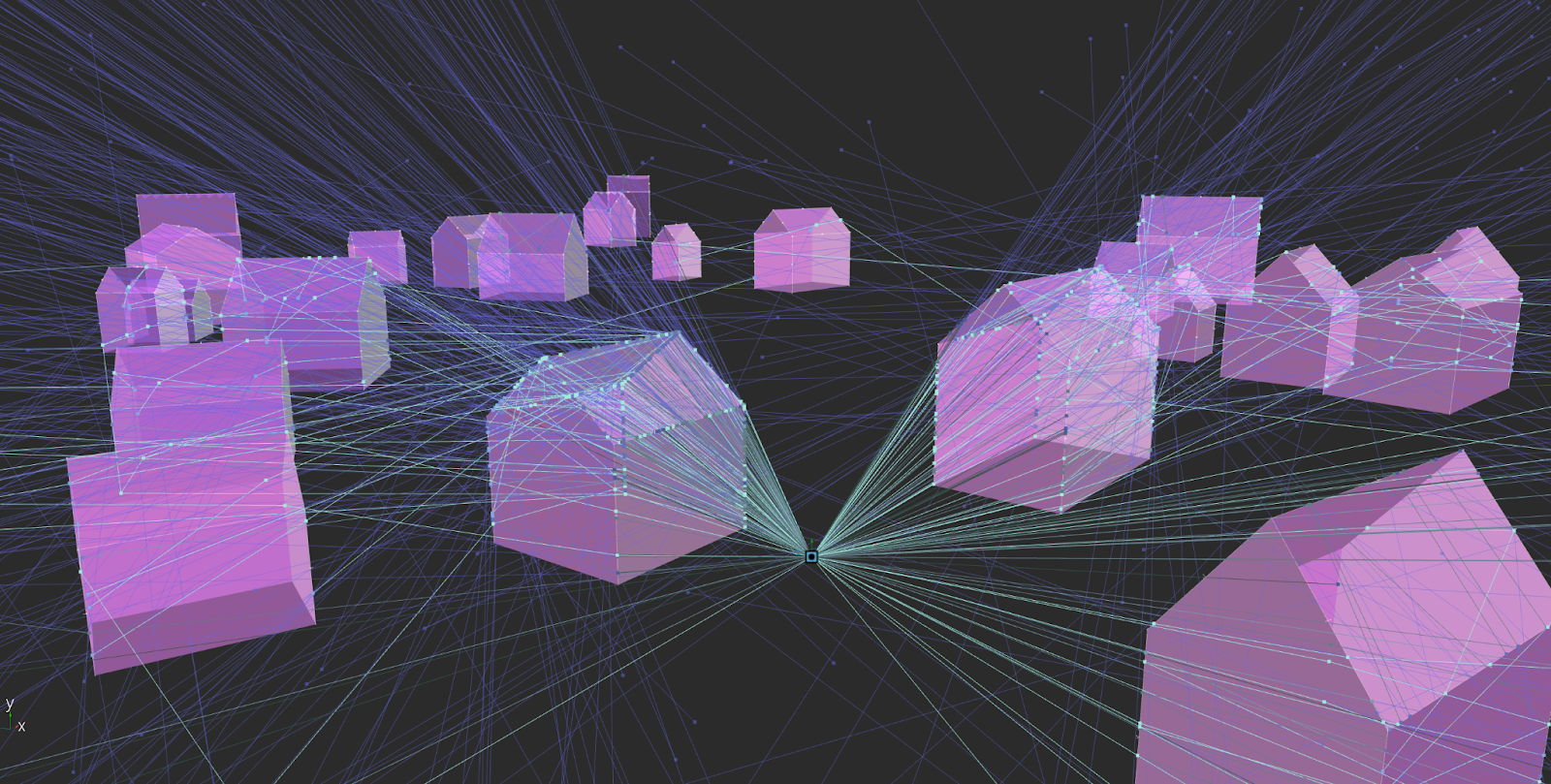

Diffraction Ray Casting

Diffraction edges for Spatial Audio are now discovered by tracing rays through the world. Before, diffraction edges were discovered by an exhaustive inspection of all the geometry in the scene to find any potential edge, so it should now be easier to use more geometry for this portion of the processing. Diffraction also has a much lower, fixed overhead per emitter, which scales with the number of rays fired and the order of diffraction available.

Load Balancing

Spatial audio jobs can now be distributed across multiple sound engine ticks to mitigate CPU spikes; this is configurable through AkSpatialAudioInitSettings::uLoadBalancingSpread. One notable feature of the new load balancing system is that a priority queue is used to ensure that latency-sensitive operations are still performed early, relative to other work that may be required. This means that actions such as playing new sounds or changing active rooms should still be very responsive, even when that load is balanced across multiple audio ticks.
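
The idea of spreading work across ticks while still servicing urgent work first can be sketched as follows. This is a simplified model in Python, not the actual spatial audio scheduler: the job names, the priority values, and the rule of "an equal share of jobs per tick" are all assumptions made for illustration, and the `spread` parameter only loosely stands in for uLoadBalancingSpread.

```python
import heapq
import math


def schedule_across_ticks(jobs, spread):
    """Distribute jobs over roughly `spread` ticks, most urgent first.

    jobs: list of (priority, name); a lower priority number is more urgent.
    spread: number of ticks to spread the work over (must be >= 1).
    """
    # The enumeration index breaks ties so equal-priority jobs keep their order.
    heap = [(priority, order, name) for order, (priority, name) in enumerate(jobs)]
    heapq.heapify(heap)
    per_tick = math.ceil(len(jobs) / spread)
    ticks = []
    while heap:
        count = min(per_tick, len(heap))
        ticks.append([heapq.heappop(heap)[2] for _ in range(count)])
    return ticks


# Hypothetical workload: two latency-sensitive jobs among routine updates.
JOBS = [
    (2, "update_emitter_A"),
    (0, "play_new_sound"),
    (2, "update_emitter_B"),
    (1, "room_change"),
    (2, "update_emitter_C"),
    (2, "update_emitter_D"),
]
TICKS = schedule_across_ticks(JOBS, spread=3)
```

With this input, the first tick handles `play_new_sound` and `room_change`, and the routine emitter updates are deferred to the second and third ticks.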

Other Small Highlights

Lastly, we have some other smaller improvements that are a part of Wwise 2022.1, or were released during the patch releases for Wwise 2021.1 that are worth noting:

- The AK Compressor and AK Expander effects were rewritten, with throughput performance improvements of up to 4x in many scenarios. For some scenarios, such as when processing ambisonics, the difference is magnified even further, with an over 20x improvement in throughput performance.

- A related benefit to the changes for AK Compressor and Expander is that we also resolved some inconsistencies in behaviour across different channel configurations. If you had any adjustments in your mix to compensate for this, especially when targeting certain channel counts or using 3D Audio, you can probably remove those now.

- The AK Convolution effect with hardware acceleration enabled, and WEM Opus decoding with hardware acceleration enabled, can now be processed fully asynchronously. This eliminates much of the CPU wall-clock time spent on these features, making them far less expensive than they were before. Of course, being hardware accelerated, these changes are only applicable for certain platforms – please check your platform-specific documentation for more details.

- Lastly, Wwise has a debug feature that can be used as part of sound engine processing, AkInitSettings::bDebugOutOfRangeCheckEnabled. This feature itself is not new; it is a tool we have had for a few releases, to help track down where erroneous values, such as infinity, not-a-number, or just unreasonably loud audio values, are introduced in the voice pipeline. Now, though, this feature runs much faster than it used to. There is still a mild hit to performance, but instead of increasing sound engine execution time by a massive factor, such as 3x, it now increases sound engine execution time by only a factor of 1.1-1.2x. While this improves the debugging experience when identifying these issues, it is so inexpensive now that it may be worth leaving it on all of the time in development builds of your title!
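
For readers unfamiliar with this kind of check, the following Python sketch shows the general idea of scanning a buffer for the three classes of bad values mentioned in the last item above. It is only an illustration: the function name and the `max_abs` threshold are hypothetical, and the article does not describe how or where the sound engine performs its own check.

```python
import math


def find_out_of_range(samples, max_abs=16.0):
    """Return (index, reason) for each suspicious sample in a buffer.

    max_abs is an assumed loudness threshold chosen for this example only.
    """
    problems = []
    for i, sample in enumerate(samples):
        if math.isnan(sample):
            problems.append((i, "nan"))
        elif math.isinf(sample):
            problems.append((i, "inf"))
        elif abs(sample) > max_abs:
            problems.append((i, "too loud"))
    return problems


BUFFER = [0.0, 0.5, float("nan"), -float("inf"), 100.0, -1.0]
PROBLEMS = find_out_of_range(BUFFER)
```

Running a check like this at each stage of a pipeline, rather than only at the output, is what makes it possible to tell where a bad value was first introduced.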

Improvements to CPU Profiling

Besides CPU performance improvements, we’re also delivering some improvements to CPU profiling, to help give developers more actionable information when investigating CPU performance.

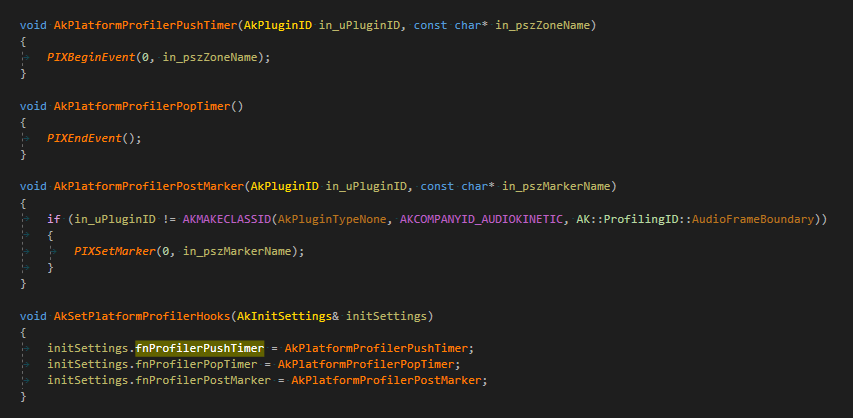

The biggest part of this is a set of new callback functions that we have added to the SDK:

- fnProfilerPushTimer

- fnProfilerPopTimer

- fnProfilerPostMarker

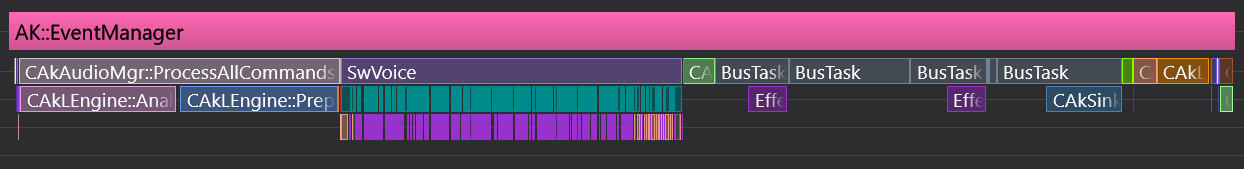

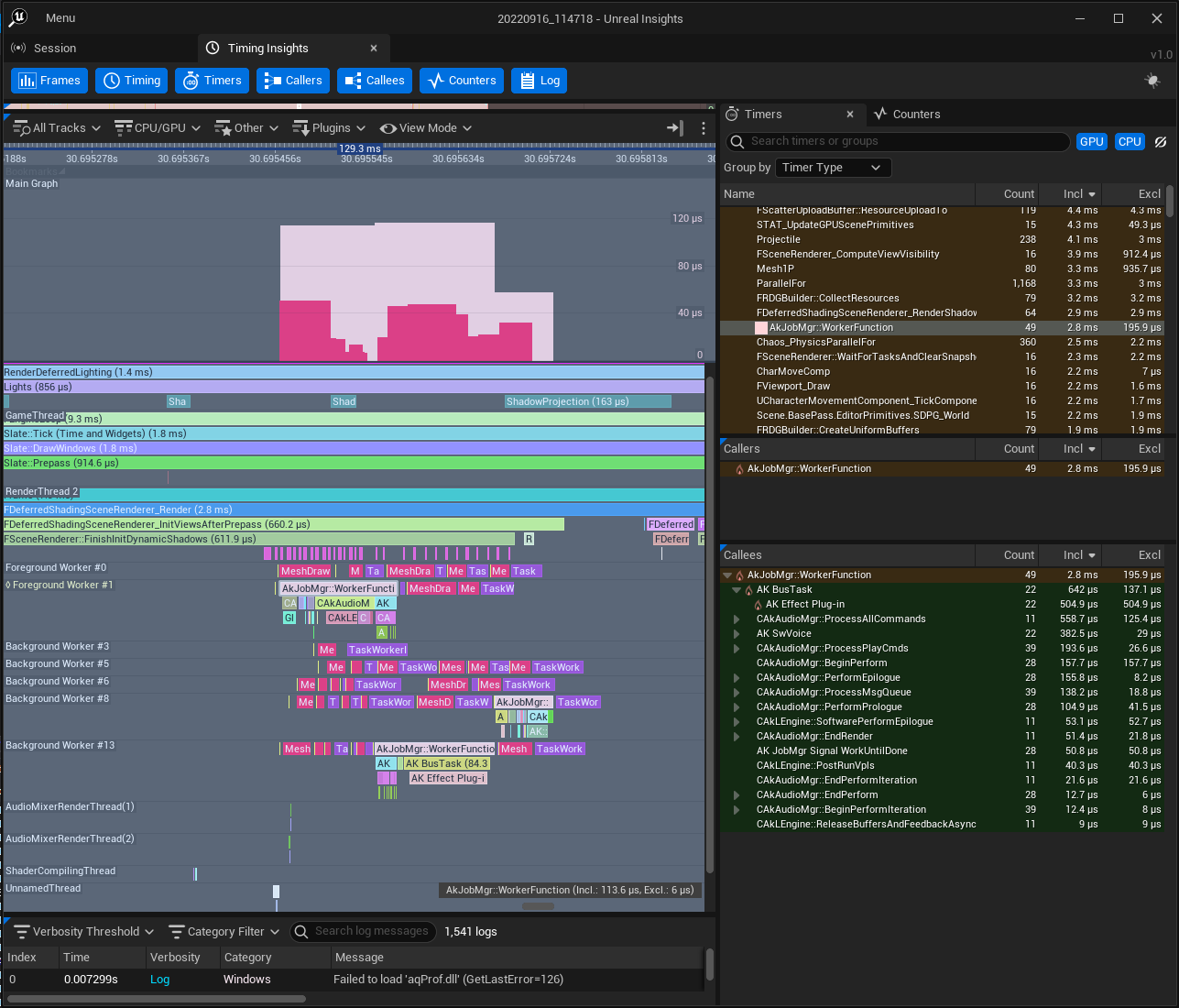

fnProfilerPushTimer and fnProfilerPopTimer are callbacks which are called frequently throughout the Wwise frame, to denote when certain CPU events start and stop. These are intended to be used to add more detailed timing data to your CPU profiler of choice – such as Superluminal, RAD Telemetry, Unreal’s Timing Insights, platform-specific profilers, or other bespoke tools used as a part of your game’s toolset. The below example demonstrates the application of these timers in one of these profilers.

fnProfilerPostMarker is used for highlighting other noteworthy standalone events, such as adding bookmarks in a profiler, but is currently only used for “Voice Starvation” and “Audio Frame Boundary” events. This can be useful when browsing a large profiler session and trying to find where Wwise experienced “Voice Starvation”, in order to identify where to focus the profiling effort.
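
The push/pop/marker pattern can be sketched as a small recorder that turns the callbacks into nested timing scopes. This is a Python model for illustration only: the `TimelineRecorder` class, its method names, and the zone names are hypothetical, and the real callbacks are C function pointers whose exact signatures should be taken from the SDK headers rather than from this sketch.

```python
class TimelineRecorder:
    """Collects nested timer scopes and standalone markers."""

    def __init__(self, clock):
        self._clock = clock  # injected so the example is deterministic
        self._open = []      # stack of (zone_name, start_time)
        self.scopes = []     # finished scopes: (zone_name, depth, start, end)
        self.markers = []    # (marker_name, time)

    def push_timer(self, zone_name):
        """Plays the role of fnProfilerPushTimer: a CPU event starts."""
        self._open.append((zone_name, self._clock()))

    def pop_timer(self):
        """Plays the role of fnProfilerPopTimer: the innermost event stops."""
        zone_name, start = self._open.pop()
        self.scopes.append((zone_name, len(self._open), start, self._clock()))

    def post_marker(self, marker_name):
        """Plays the role of fnProfilerPostMarker: a standalone bookmark."""
        self.markers.append((marker_name, self._clock()))


# A fake clock that advances 10 time units on every reading.
_fake_time = iter(range(0, 1000, 10))
recorder = TimelineRecorder(lambda: next(_fake_time))

recorder.push_timer("AudioTick")          # t = 0
recorder.push_timer("BusTask")            # t = 10
recorder.pop_timer()                      # closes BusTask at t = 20
recorder.post_marker("Voice Starvation")  # t = 30
recorder.pop_timer()                      # closes AudioTick at t = 40
```

In a real integration, the bodies of these three methods would simply forward to the begin-scope, end-scope, and bookmark calls of whichever profiler you use.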

The Wwise SDK provides some example integrations of these callbacks into some platform-specific profilers, in order to demonstrate this functionality. These can be seen in SDK/Samples/SoundEngine, and shown below:

We hope this can be used by both audio programmers and non-audio programmers to better understand and inventory where the CPU time inside of Wwise is going, without having to modify the sound engine or rely simply on program-counter sampling to get a clue as to where the time is going.

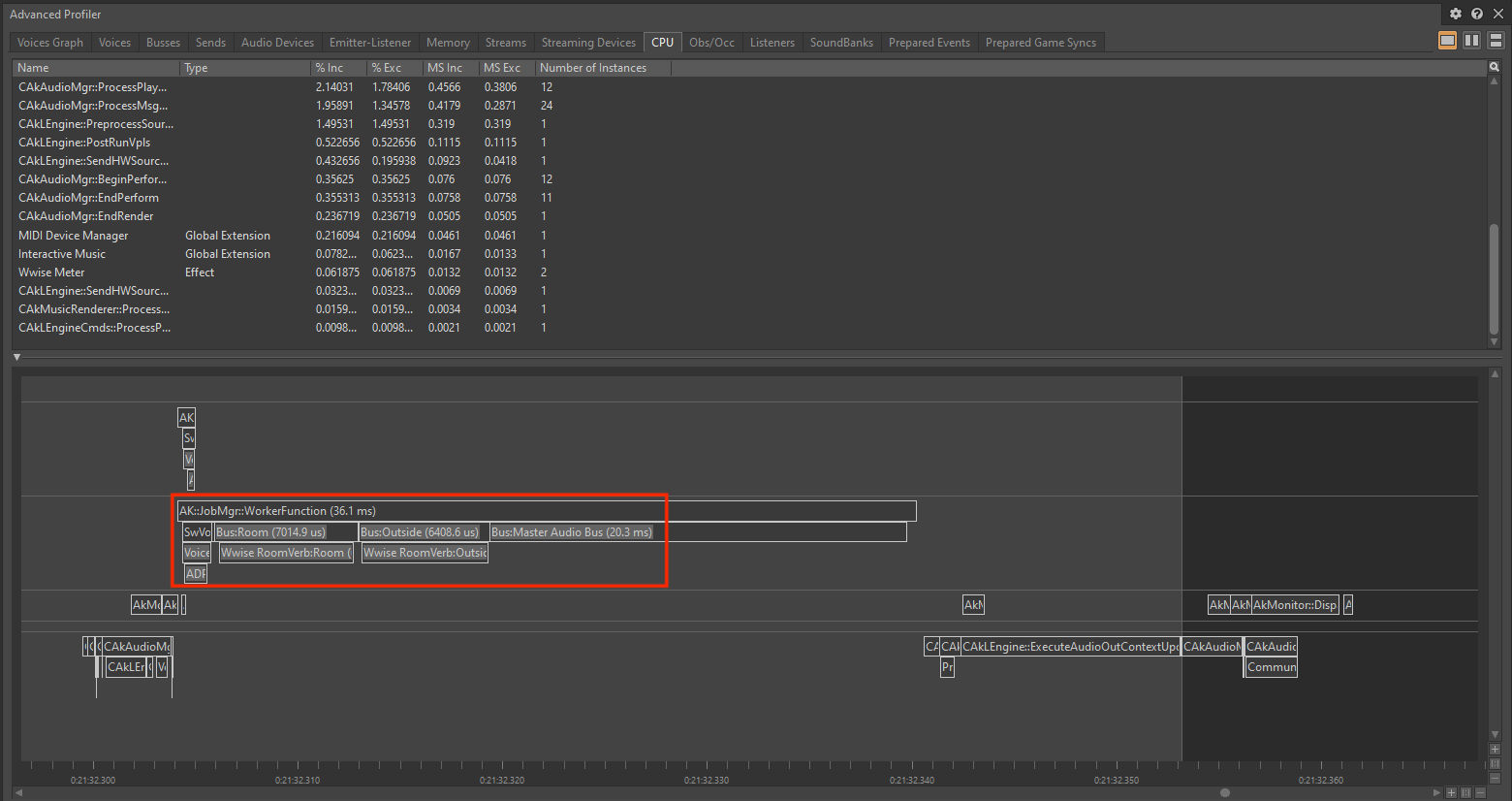

These new timer scopes are used not just for integration with external tools, though – this data is also included in Wwise profiling sessions now, as well.

It provides the same data as the SDK profiling features described above, but comes with one extra addition: It will provide extra attribution of timer scopes to specific voices and buses.

Note that this is currently intended for advanced users only, and requires a hidden setting to be toggled, in order to enable it in the Wwise Authoring tool. If you want to try out this feature for yourself, feel free to ask us for more information, but do note that it is in an early state, is not extremely polished compared to other tools available, and definitely requires a lot more awareness of how the Wwise runtime operates on the CPU in order to properly interpret the data. This information is included in every profiling session with CPU Data enabled, though, so when a profiling session is provided as part of a support ticket, we are still able to use this information to better understand performance issues you’re experiencing.

Given all that, we feel that it should be possible not just to answer, in a broad sense, “What plug-in or effect is being costly?”, but also to trace performance problems back to specific buses or voices, or specific features in Wwise. This should greatly assist in identifying CPU performance issues, and in making changes that affect CPU performance with greater intention, and more precise direction, than was possible before.

Revamped CPU Scheduling

Next, we wanted to tackle a more subtle, but still valuable, issue for Wwise, and that’s coordination and sharing of CPU resources with a game engine.

The CPU Scheduling Problem

Wwise was originally developed in a “System-on-a-thread” era of game development. Games had relatively few threads, and were not yet taking significant advantage of multi-core CPUs.

In this way, Wwise could run in a fairly unobtrusive manner. It had its own thread that would periodically wake up, and not intrude much with the rest of the game frame. Either there were too few threads to fill up the CPU, or the threads were able to run on different cores, and could be scheduled freely across cores.

However, since then, many games have evolved how they use the CPU. Most often, they execute work as tasks or jobs on a pool of reusable worker threads, managed by a Task Scheduler or Job Manager. This allows many individual systems in the game to run piles of work asynchronously to, and concurrently with, each other as smaller jobs. This pattern of work distribution is very scalable, flexible, and performant for achieving great multi-core CPU utilization. It would be easy to say that most large-scale games now perform their work in some manner like this.

…but, Wwise is often still running on its own freely-scheduled thread, which can cause some frustrations.

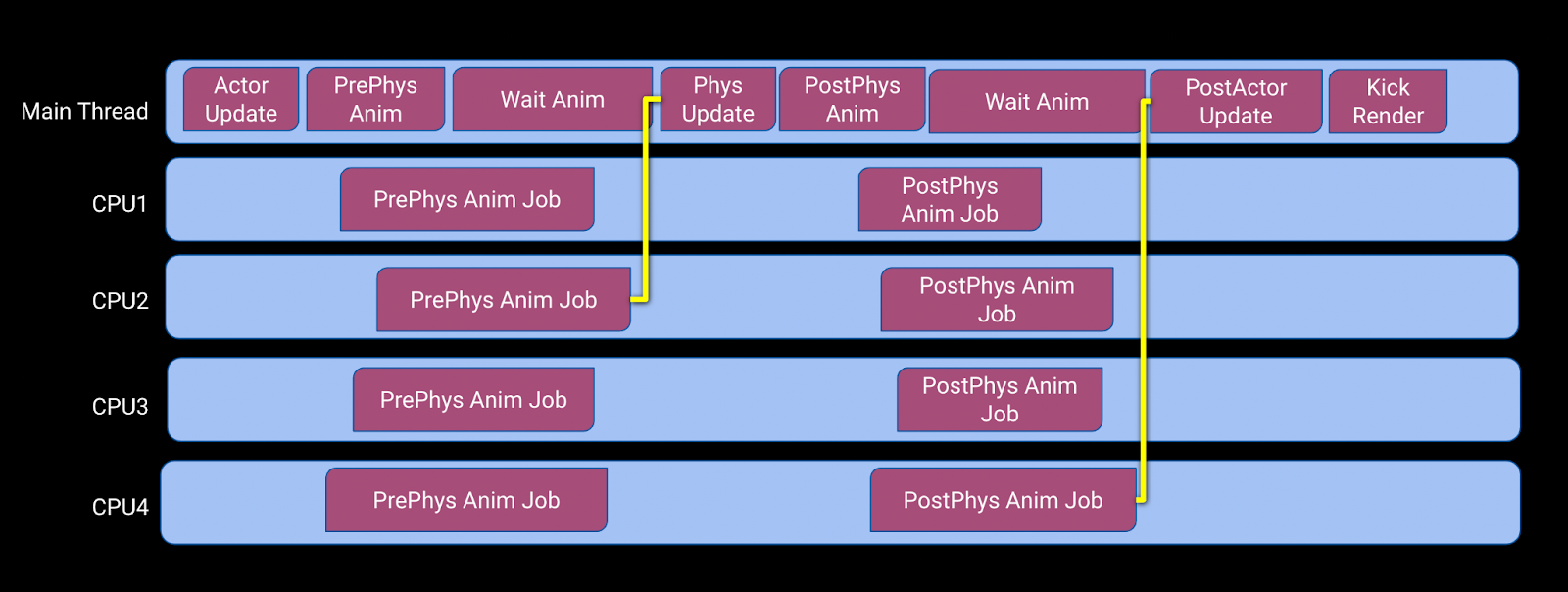

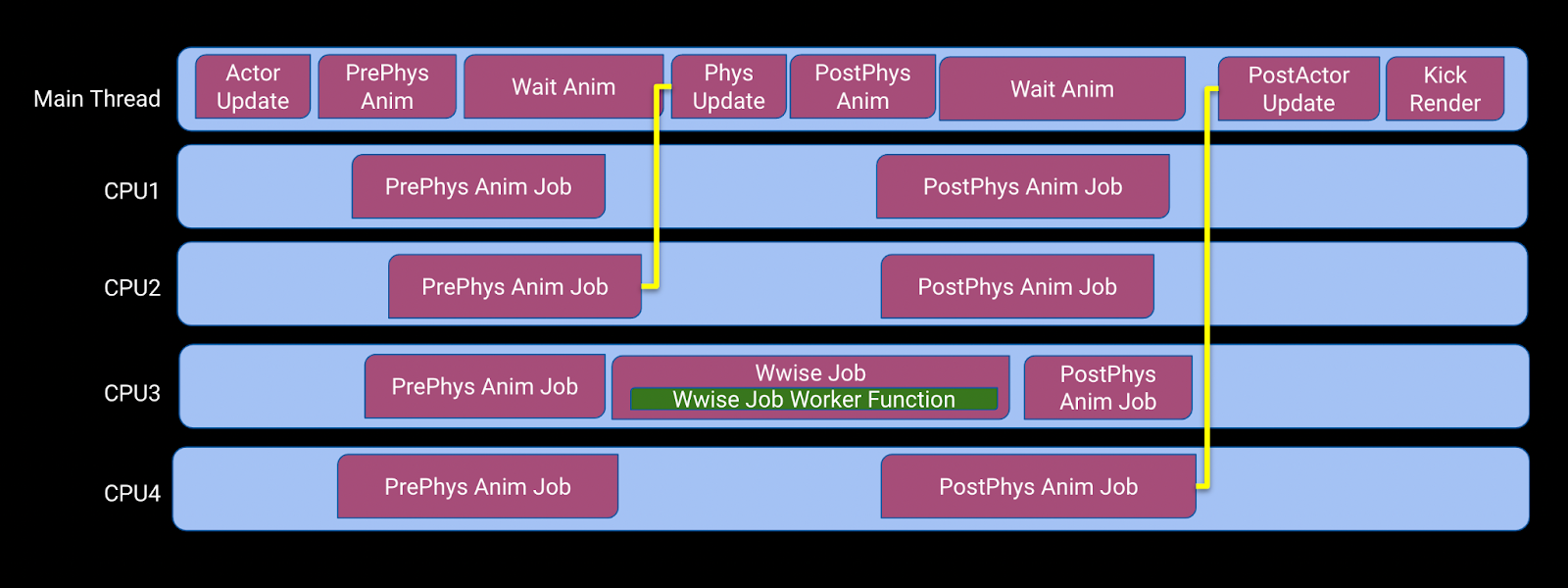

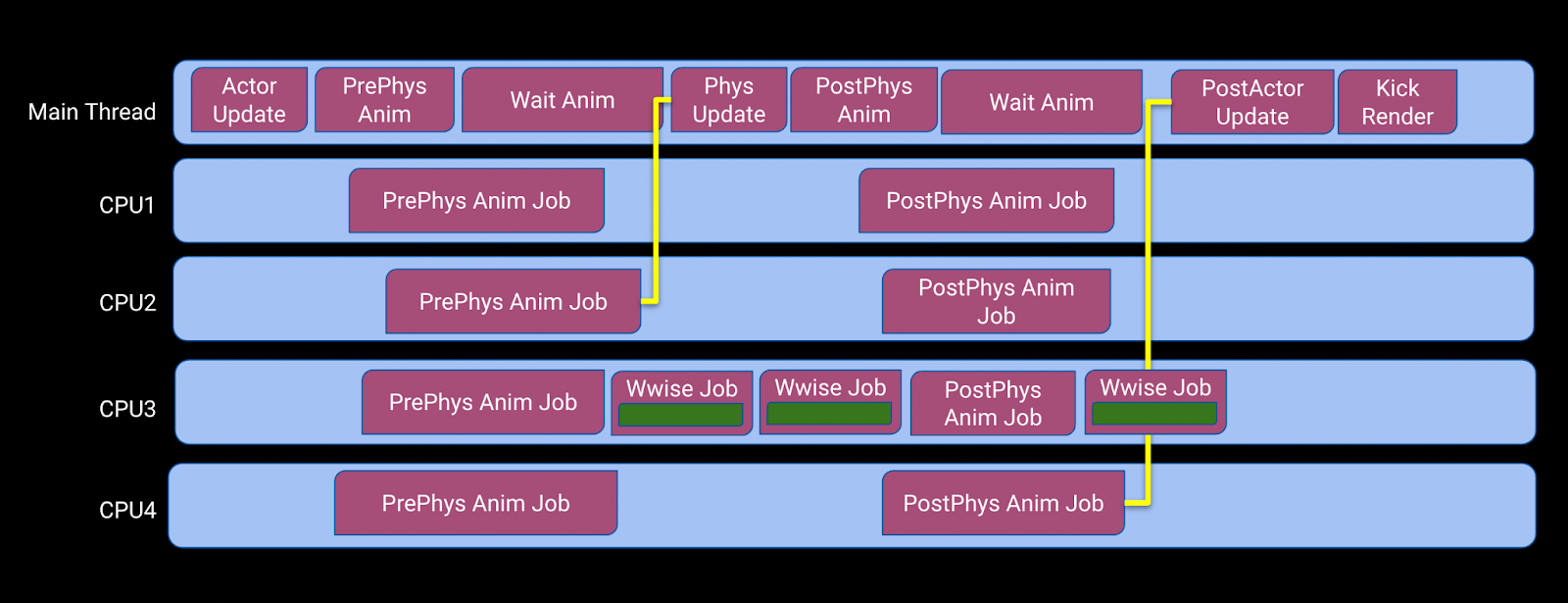

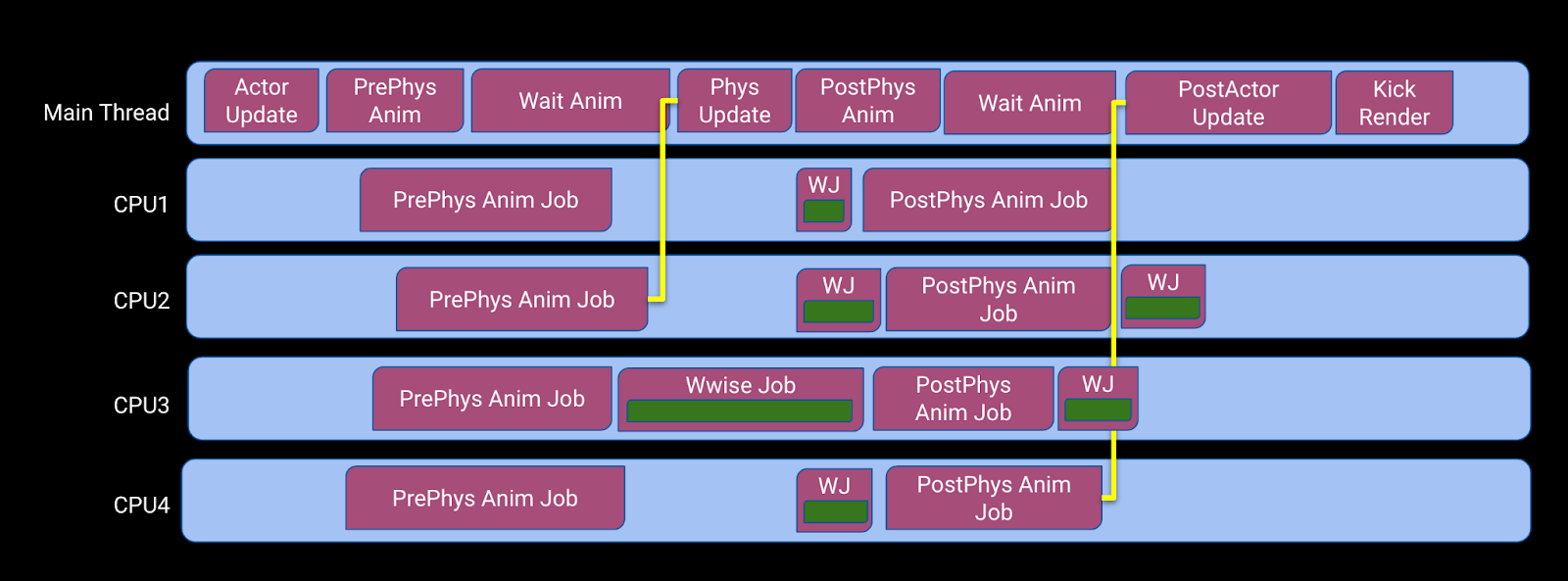

Let’s consider a simple CPU timeline that such a game may have.

The main thread is split into multiple stages for its game ‘simulation’ update, and whenever it kicks its “PrePhys anim” and “PostPhys Anim” work, it is able to spread the work across an arbitrary number of CPU cores. In order for the game update to proceed, all of those jobs need to finish before continuing.

If Wwise’s “EventMgr” thread slips in to do some work and causes one of the worker threads to be unscheduled when it is not running a job, it’s a little bit worse because one of the threads can’t pick up some of the PostPhysAnim job, but it’s manageable: everything still completes in a reasonable amount of time, with only a mild hit to performance.

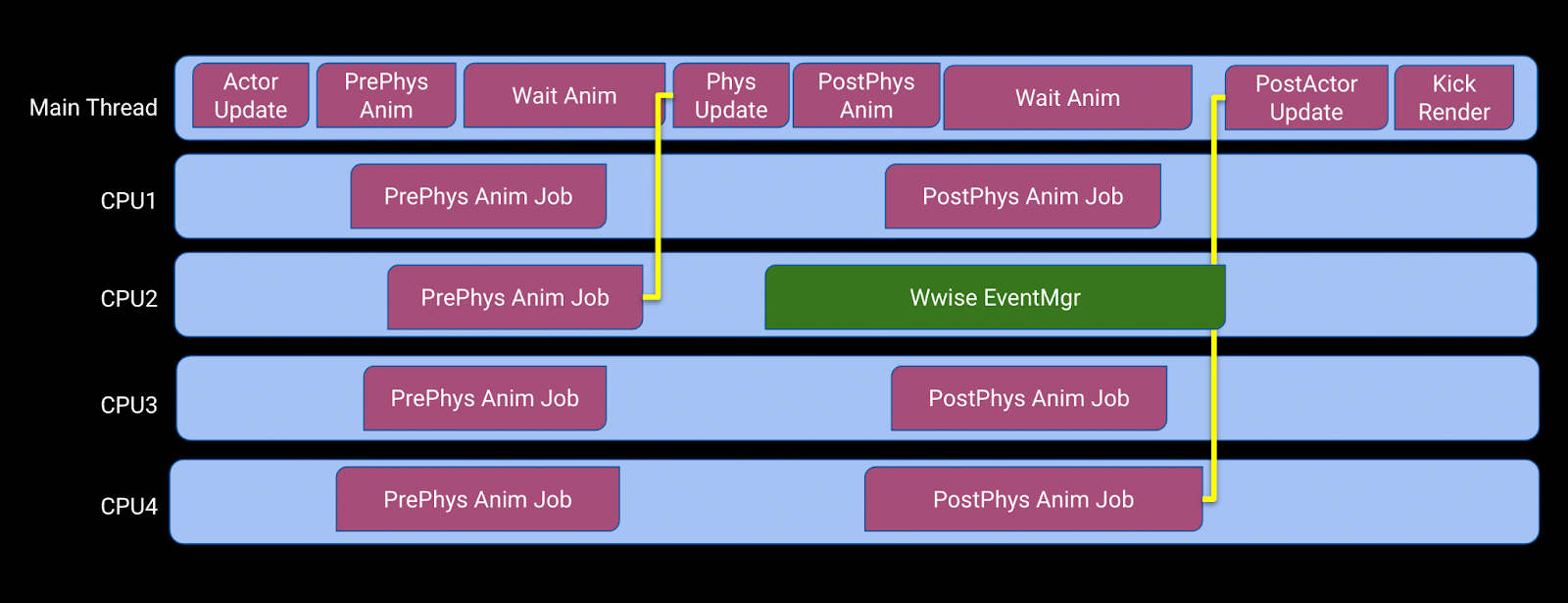

However, occasionally this would happen instead:

In this scenario, the EventMgr thread was woken up and scheduled in the middle of execution of one of the PrePhys Anim jobs.

As a result, the worker thread has been unscheduled and cannot make any forward progress until EventMgr has completed execution of the audio tick. The primary consequence of this is that the Anim job is unable to signal to the game thread its completion in a timely fashion, and the Main Thread spends a significant amount of time doing nothing other than waiting for the work to complete.

This is a very peculiar issue, because even though each individual operation is still taking the same number of CPU cycles, the mis-timing of the EventMgr thread will have caused the Main thread to miss its 16-millisecond-per-frame deadline by a significant amount. This can ultimately result in a “dropped frame,” hindering the game’s visual presentation and overall feel.

This is a familiar problem to many developers by now, and one for which we have seen many solutions attempted:

- The simplest one is just to let Wwise run on its own core all by itself. However, this has the downside of the game having one less worker thread to play with. The game’s frame-times may have stabilized, but peak throughput of the game and render updates may be reduced by up to 15%!

- Some developers have modified Wwise to try and launch jobs on their game engine’s worker threads when running the audio tick, but this kind of engine divergence makes future upgrades harder, and takes a significant amount of extra effort for the developer.

- Some developers would even go so far as to disable the EventMgr thread (i.e. LEngineThread), and then attempt to perform manual, full, executions of RenderAudio, but this requires other tight performance constraints or introduces other difficulties in scheduling audio.

Worst of all, this is all assuming that it’s a problem that developers have the time or resources to properly identify! It may just be that they finish shipping their game, and the technical, critical, and consumer feedback includes notes about the game having an uneven framerate, or some hard-to-pin-down feeling that “the game feels rough”.

Improvements to CPU Scheduling

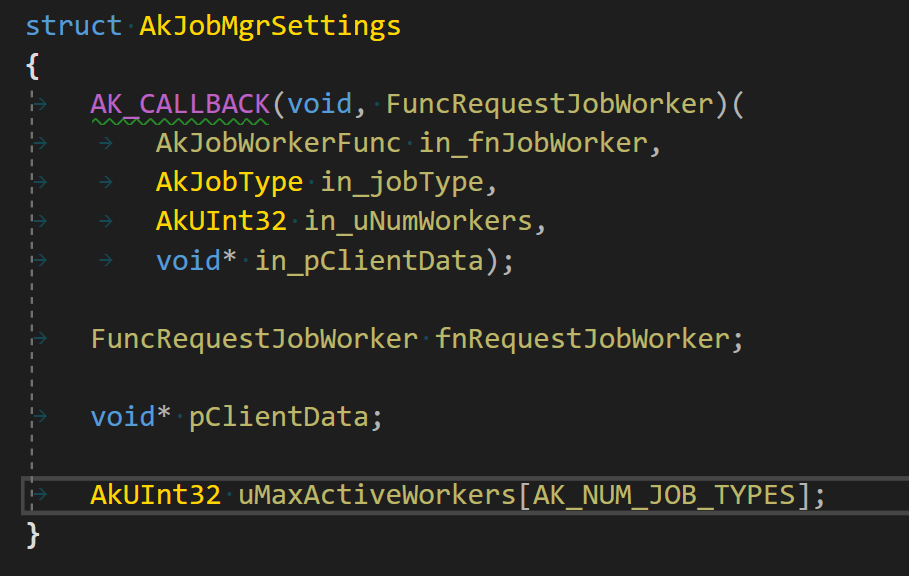

In Wwise 2022.1, we are now providing a solution for this problem that is built into the sound engine: a “Worker Function” callback!

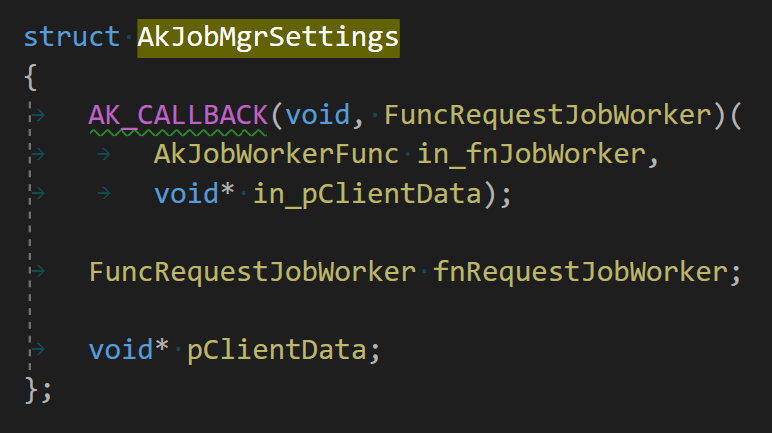

In AkInitSettings, there’s a new struct, “AkJobMgrSettings”, which includes a new callback that a developer can implement and specify, “fnRequestJobWorker”. Wwise will call this callback whenever it has work to be done.

The first parameter in this callback is a function pointer that Wwise provides for you to call: in_fnJobWorker. When Wwise calls fnRequestJobWorker, only one thing needs to be done, and that is to call in_fnJobWorker. This call to in_fnJobWorker may happen any time, or on any CPU core.

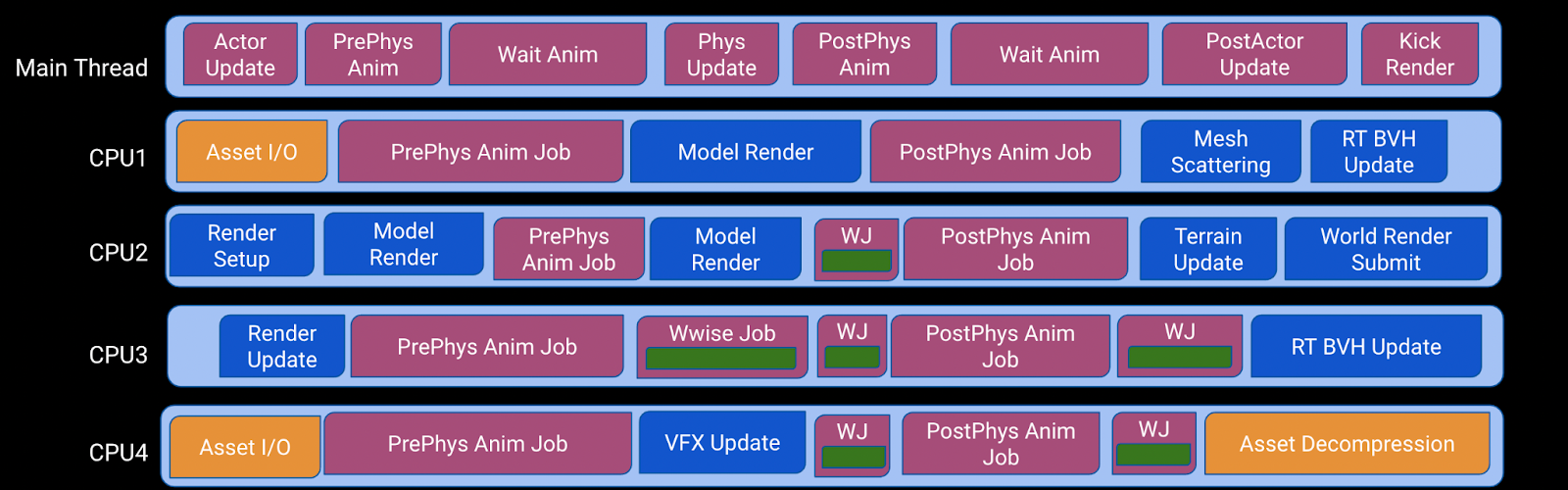

Most notably, in_fnJobWorker can be called inside of a game engine job, as shown below:

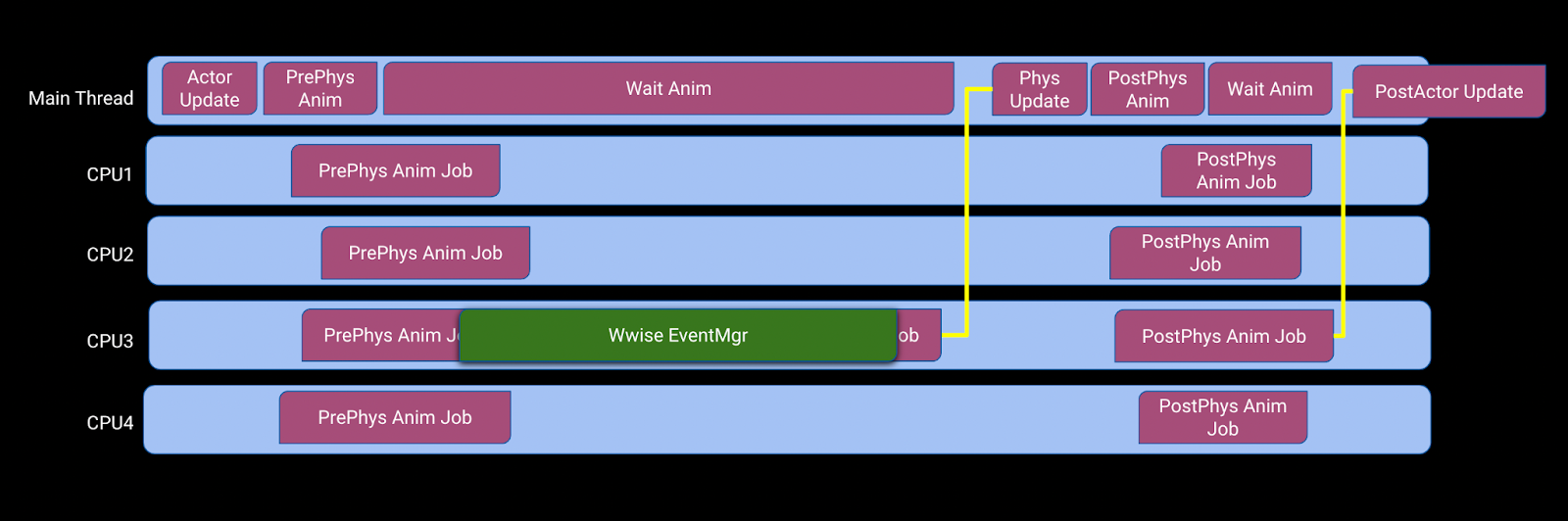

In this way, we can see that the Wwise work is now scheduled more cooperatively with the game engine.

For example, this request for a Wwise Worker was scheduled while the PrePhys Anim Jobs were running, but the game engine was able to schedule it to run afterwards, without having to halt execution of the PrePhys Anim Job.

This allows Wwise’s work to be far less obtrusive to your game’s execution, allowing for more stable performance, without having to maintain a diverged copy of the Wwise sound engine.
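
The cooperative hand-off described in this section can be modelled in a few lines. The Python sketch below is not the Wwise API: `GameJobQueue`, `make_request_job_worker`, and the job names are hypothetical, and the real fnRequestJobWorker is a C callback that receives more parameters than the single function modelled here. The point is only that the callback's sole obligation is to arrange for the provided worker function to be called, which lets the engine's own queue decide when.

```python
from collections import deque


class GameJobQueue:
    """Hypothetical stand-in for a game engine's job system (single-threaded)."""

    def __init__(self):
        self._jobs = deque()
        self.log = []  # names of jobs in the order they actually ran

    def submit(self, name, fn):
        self._jobs.append((name, fn))

    def run_all(self):
        while self._jobs:
            name, fn = self._jobs.popleft()
            self.log.append(name)
            fn()


def make_request_job_worker(queue):
    """Build the callback the game would register in the fnRequestJobWorker role."""

    def request_job_worker(job_worker_fn):
        # Do not run the audio work here; just schedule it like any other job.
        queue.submit("WwiseWorker", job_worker_fn)

    return request_job_worker


audio_done = []
queue = GameJobQueue()
request_job_worker = make_request_job_worker(queue)

queue.submit("PrePhysAnim", lambda: None)
# The sound engine asks for a worker while the anim job is still pending.
request_job_worker(lambda: audio_done.append("audio tick"))
queue.submit("PostPhysAnim", lambda: None)
queue.run_all()
```

Here the audio work runs after `PrePhysAnim` instead of interrupting it, because it was scheduled through the same queue as the game's own jobs.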

However, that example still has one downside, and that is that it gets in the way of the execution of the PostPhys Anim Job a bit. The PostPhys Anim Job is also critically important work for the game thread, so the little bit of a delay in that execution still slows the game thread down slightly.

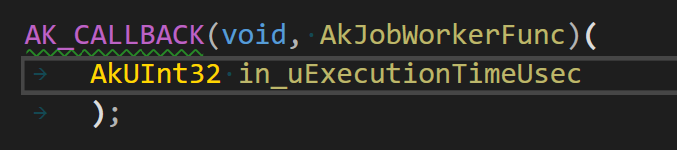

One of the features of the Worker Function helps to address that problem. When you call the in_fnJobWorker that was provided earlier, one of the parameters that you can specify is how long you want the worker to run for, measured as some number of microseconds.

During its execution, the worker function checks how long it has been running after each task is completed, as shown below.

Once the worker has passed that provided deadline, Wwise will issue a new request for another worker – so that any remaining work can still be resumed later – and then the worker function will return back to the game engine.

This allows the worker function to terminate before the entire sound engine tick has completed, making the execution a bit more granular. In the example above, it could be used to, perhaps, let the PostPhys Anim Job start its work a bit earlier than it would otherwise!
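
The deadline behaviour can be sketched as a loop that checks the elapsed time after each task. This Python model is an illustration under stated assumptions, not the sound engine's code: `run_worker`, `FakeClock`, and the 40-microsecond task cost are all hypothetical, and time is simulated rather than measured.

```python
from collections import deque


class FakeClock:
    """Simulated microsecond clock so the example is deterministic."""

    def __init__(self):
        self.now = 0

    def __call__(self):
        return self.now

    def advance(self, microseconds):
        self.now += microseconds


def run_worker(tasks, budget_us, clock_us, request_worker):
    """Run queued tasks until the time budget is exceeded.

    The elapsed time is checked only after a task completes, so a worker can
    overrun its budget by up to the duration of one task.
    """
    start = clock_us()
    completed = 0
    while tasks:
        tasks.popleft()()
        completed += 1
        if tasks and clock_us() - start >= budget_us:
            request_worker()  # remaining work is resumed by a later worker
            break
    return completed


clock = FakeClock()
requests = []  # simulated times at which a follow-up worker was requested
tasks = deque([lambda: clock.advance(40) for _ in range(5)])

first_run = run_worker(tasks, 100, clock, lambda: requests.append(clock.now))
second_run = run_worker(tasks, 100, clock, lambda: requests.append(clock.now))
```

With a 100-microsecond budget, the first worker completes three tasks, returns at 120 microseconds after requesting a follow-up, and the second worker finishes the remaining two tasks without needing another request.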

This is a somewhat idealized scenario, but on average, it may provide a net benefit. Tweaking this value may be something that is worth considering when finalizing your game’s CPU timeline, and balancing the various priorities and affinities of your game’s jobs and threads to achieve optimal scheduling of work.

Improved Parallelism and Concurrency

In addition to the improvements to allow for co-operative scheduling of work, we have also improved Wwise’s usage of parallelism and concurrency on modern CPUs.

Improvements to CPU Parallelism

For Wwise 2022.1, we have internally developed our own task-graph scheduler (i.e. the AkJobMgr) to better utilize multi-core processing. This new system entirely replaces the old “Parallel-For” functionality which Wwise used to have, for doing multi-core processing.

Once you have set up the Worker Request function to run on your systems, there should be little extra work required to go even wider on the CPU: For the most part, it should just be setting AkJobMgrSettings::uMaxActiveWorkers to values greater than 1, and then making sure your AkJobMgrSettings::fnRequestJobWorker callback supports that behaviour.

Improvements for Voice Graph Processing

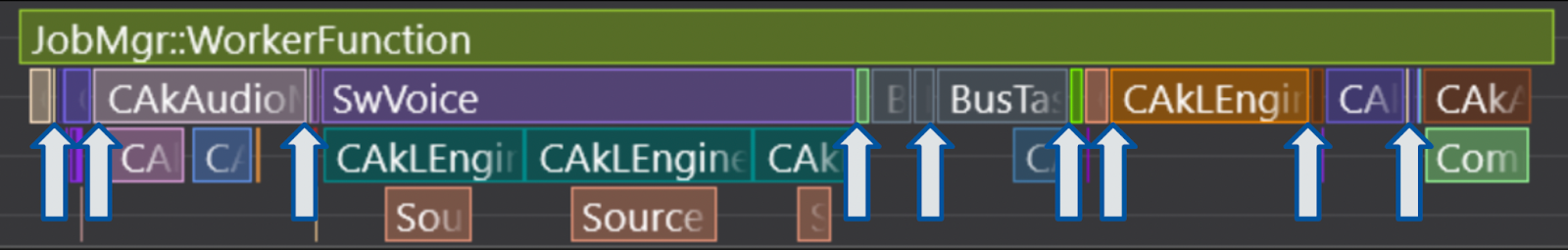

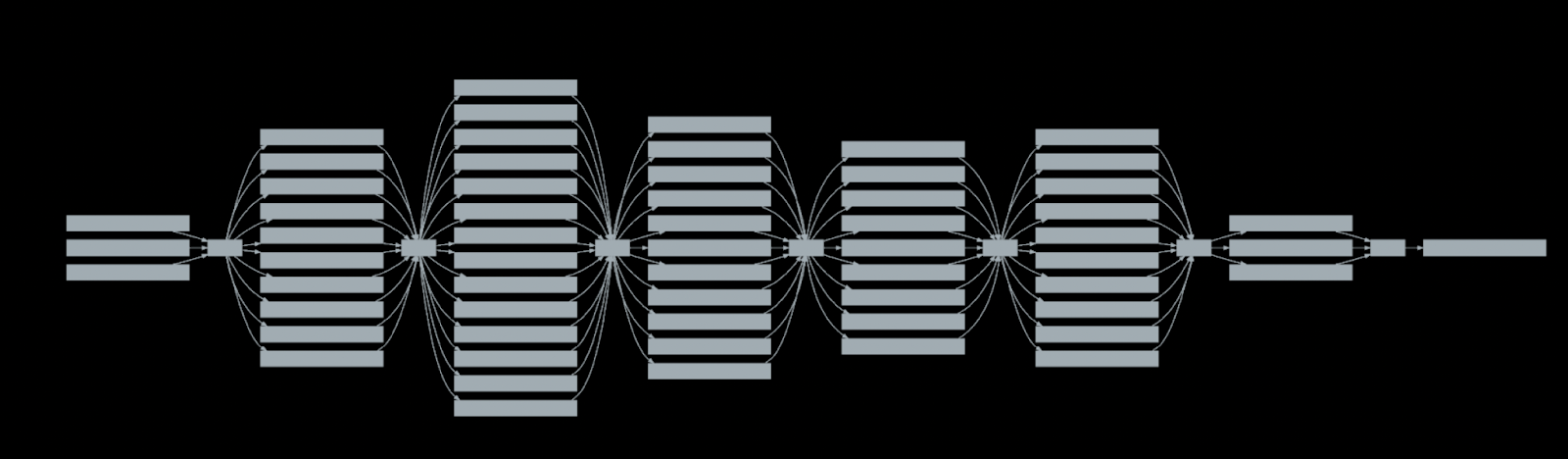

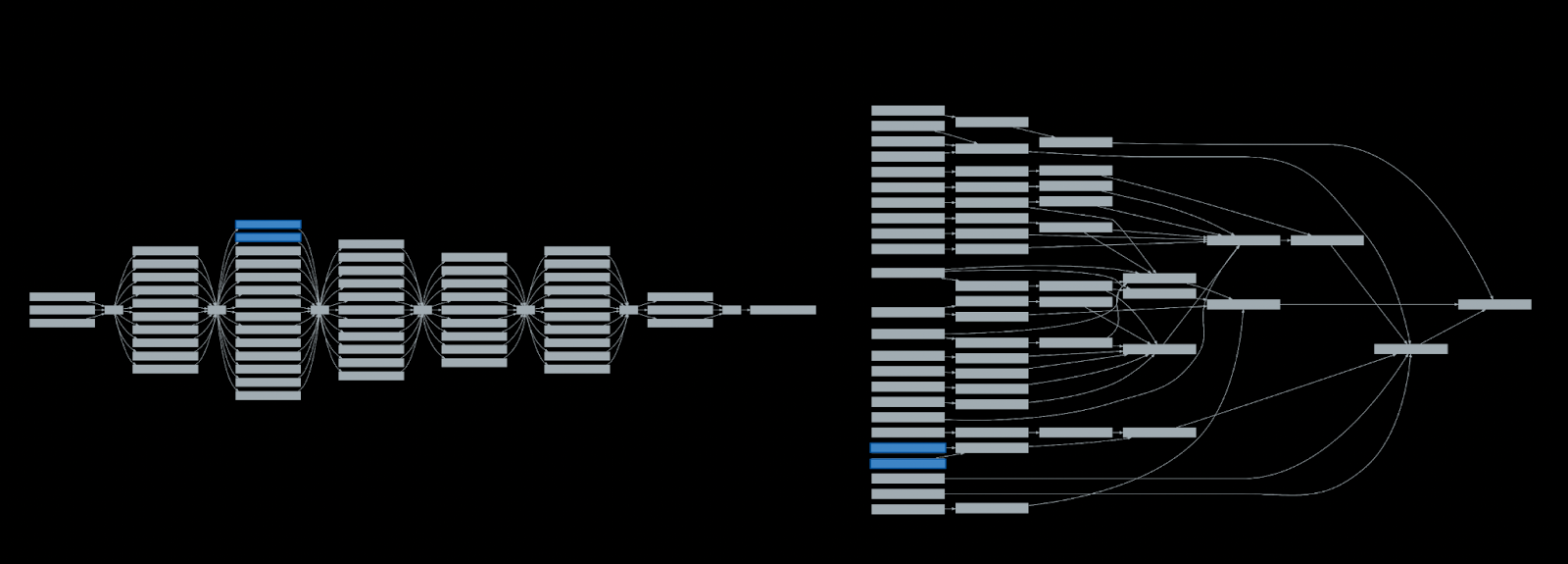

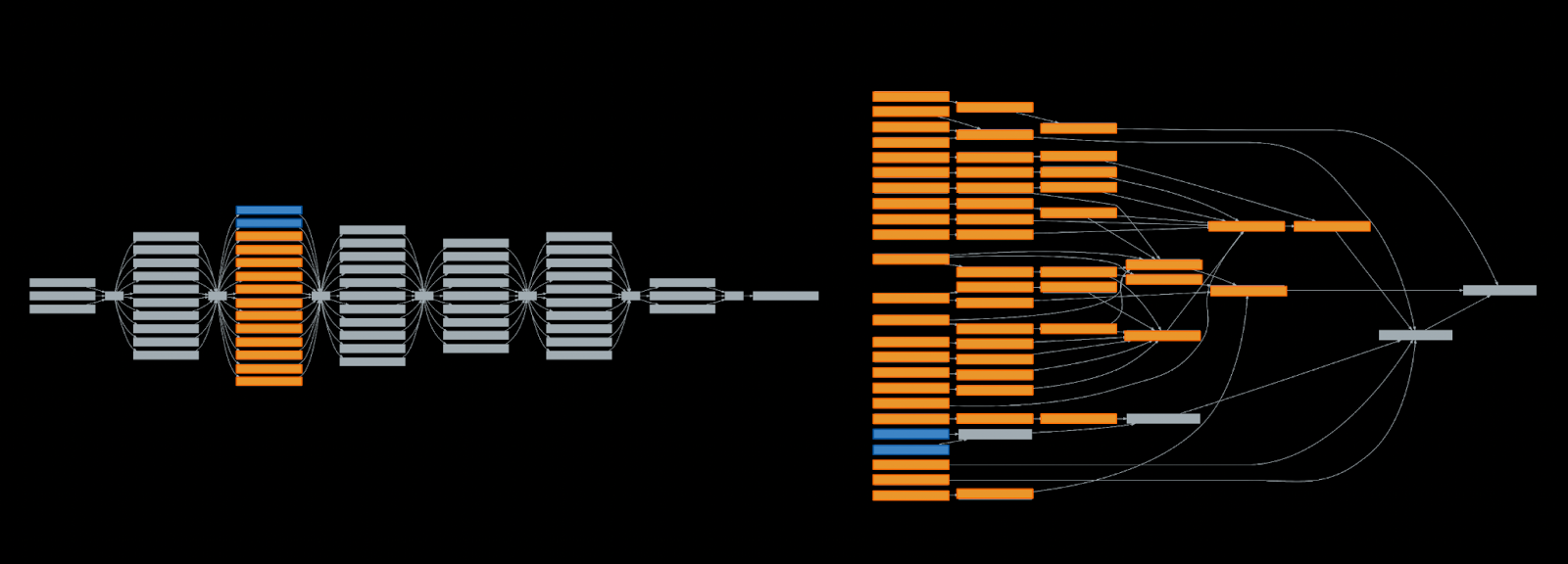

The example below, from a large-scale mid-production title, demonstrates how the old Parallel-For functionality would handle multi-core execution of the Voice Graph. In particular, note that the only portions where Wwise could go wide were when executing each level of the graph, irrespective of the actual connections and data dependencies between buses.

The degree of parallelism that could be achieved was limited because there are, in fact, many buses that can run independently of each other, but the system for dispatching work could not express the CPU work in that way.

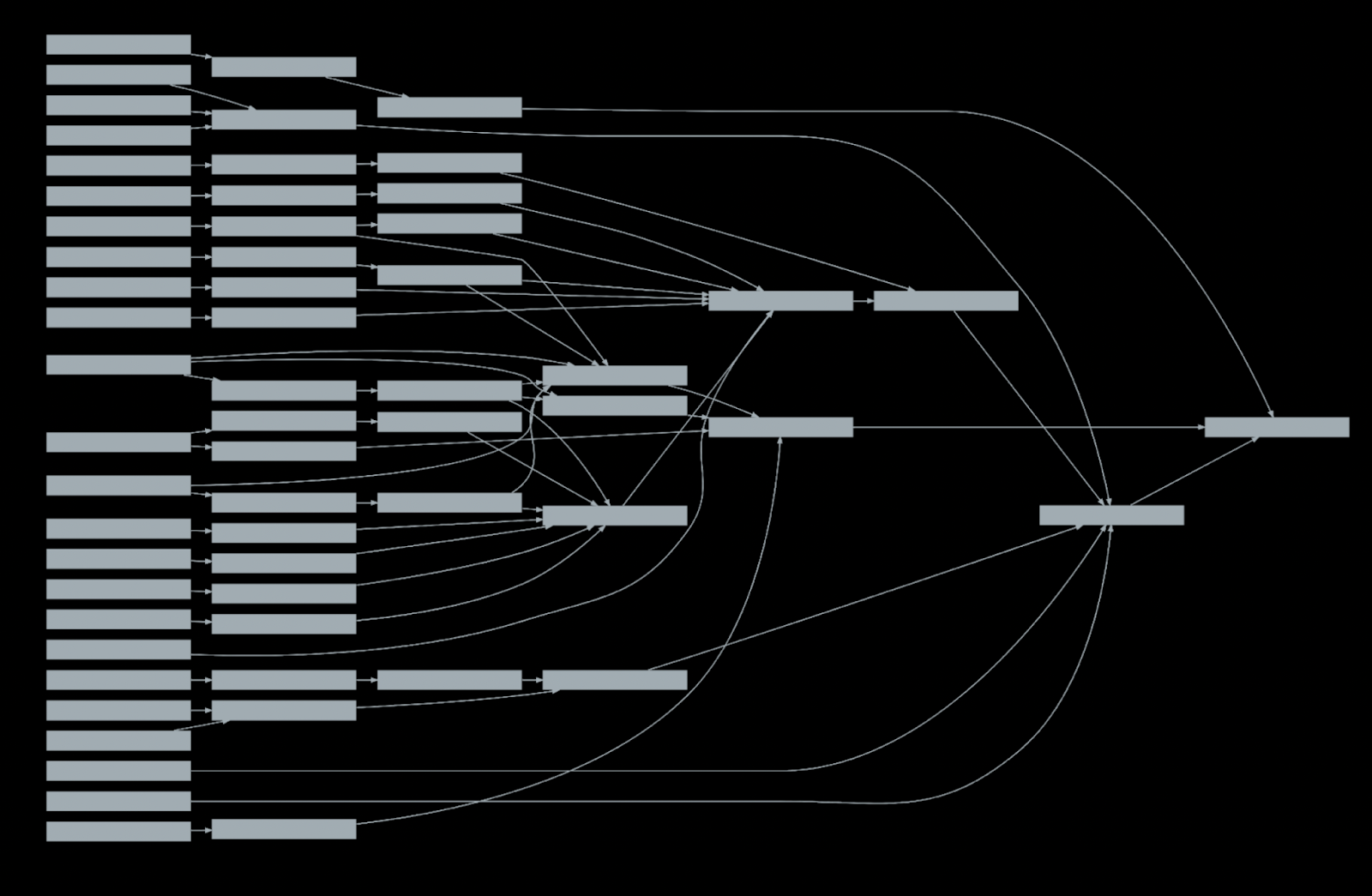

Now, Wwise can go much wider in its execution by processing each bus in the bus graph as soon as all of the buses it depends on have completed execution, as the below image demonstrates.

In this way, work can be scheduled across more cores at a time, to allow for work to be completed more quickly.

Moreover, Wwise can attain far greater concurrency across execution of buses than before. For example, if a couple of the buses in this sample bus graph, highlighted in blue below, take a long amount of time to process…

…then these buses, highlighted in orange, are all of the buses that can be executed concurrently to the ones in blue, in either scenario.

If most of the other buses take far less time to execute – for example, the ones in blue are running a complex multi-channel convolution effect, whereas the ones in orange are simple stereo buses with EQs and Compressors – then the total wall-clock time that it takes for the entire bus graph to execute will be greatly reduced with the new execution model. The buses highlighted in blue may be able to start execution much sooner, since they do not need to wait for any buses to be executed ahead of time, and more work can be processed while they are executing, greatly reducing the length of the critical path of execution.
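
A small worked example shows why the critical path shrinks. The Python sketch below compares the two execution models on an invented bus tree; the bus names, the costs, the assumption of unlimited workers, and the assumption that "levels" are counted from the master bus are all ours, chosen only to illustrate the reasoning above.

```python
# Hypothetical bus tree: each bus maps to the buses that mix into it.
INPUTS = {
    "Master": ["Music", "SFX", "Reverb"],
    "Music": [],
    "SFX": ["Weapons", "Footsteps"],
    "Weapons": ["Guns"],
    "Footsteps": [],
    "Guns": [],
    "Reverb": [],  # plays the role of an expensive convolution bus
}
# Invented processing cost per bus, in arbitrary time units.
COST = {"Master": 1, "Music": 1, "SFX": 1, "Weapons": 1,
        "Footsteps": 1, "Guns": 1, "Reverb": 10}


def level_by_level_time(inputs, cost, root):
    """Old model: buses at the same depth run in parallel, with a barrier
    between levels, so each level costs as much as its slowest bus."""
    depth = {root: 0}
    stack = [root]
    while stack:
        bus = stack.pop()
        for child in inputs[bus]:
            depth[child] = depth[bus] + 1
            stack.append(child)
    slowest_per_level = {}
    for bus, level in depth.items():
        slowest_per_level[level] = max(slowest_per_level.get(level, 0), cost[bus])
    return sum(slowest_per_level.values())


def dependency_driven_time(inputs, cost, root):
    """New model: a bus starts as soon as its own inputs are done, so the
    total time is the length of the longest dependency chain."""

    def finish(bus):
        return cost[bus] + max((finish(child) for child in inputs[bus]), default=0)

    return finish(root)


OLD_TIME = level_by_level_time(INPUTS, COST, "Master")
NEW_TIME = dependency_driven_time(INPUTS, COST, "Master")
```

In this example the level-by-level model takes 13 units, because the expensive `Reverb` bus cannot start until the two deeper levels have finished. The dependency-driven model takes 11 units, because `Reverb` starts immediately and the other buses are processed while it runs.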

Improvements to Job Dispatch

One other thing we kept in mind when designing the AkJobMgr and worker-function callback systems was to greatly reduce, or keep under control, the number of jobs Wwise requests back to the game engine.

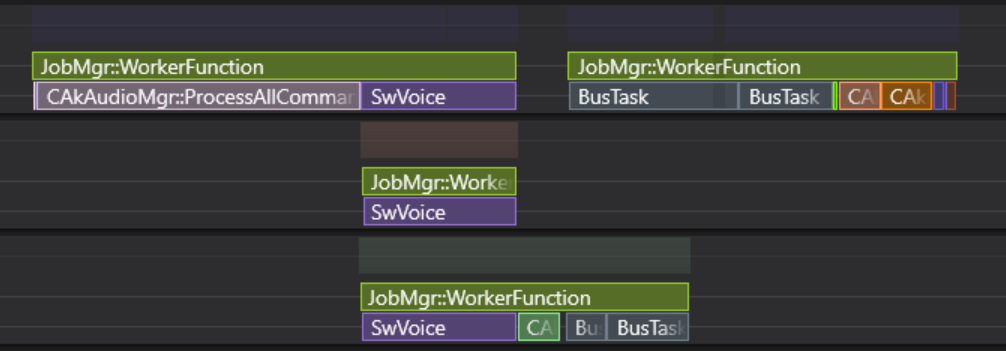

Our internal job system was designed to be very simple, and carefully tuned with an eye to performance, such that each job can take only 10 to 20 microseconds to execute, and incur very little performance overhead from the job management itself. However, we understand that larger-scale job systems for full games can be much more featureful than what our requirements are, and often end up being much more heavyweight as a result. For example, some job systems may target an average execution time of 500 to 1000 microseconds per job, in order to keep the overhead of their job system to a minimum. If Wwise issued worker requests for each of its own jobs individually, it could absolutely swamp many game engines, and cause significant performance issues.

We keep the number of worker requests under control by having worker functions internally run many of our jobs one after another, and also only issue new worker requests when there is an opportunity for more work to be done in parallel, up to the limit specified in the AkJobMgrSettings.

For example, in this CPU timeline, we have 23 jobs which Wwise handles internally, and with AkJobMgrSettings::uMaxActiveWorkers set to 3, Wwise only ever issued 4 worker requests to complete all of the work. Note that each box labeled as “JobMgr::WorkerFunction” represents one requested worker function, and each box underneath is a separate job that Wwise processes.

In this case, Wwise’s execution goes wider during the “software voice” phase of the sound engine tick, but the “bus task” execution was too narrow to have 3 workers running concurrently, so only 1 extra request is made when that phase kicks off. After the bus tasks are complete, Wwise continues to handle more jobs that are available for execution, for the epilogue of the sound engine tick.
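
The counting behind this can be approximated with a toy model. The Python sketch below is not how the job manager decides when to issue requests; it assumes that one worker carries the tick from phase to phase and that extra workers are requested only at the start of a phase and retire at its end. The phase breakdown is invented so that the totals echo the 23 jobs and 4 requests described above; the real timeline's breakdown is not given in this article.

```python
def count_worker_requests(phases, max_active_workers):
    """Worker requests issued when each worker runs many jobs back to back.

    phases: list of (name, job_count, parallel_width), where parallel_width is
    how many of the phase's jobs could usefully run at the same time.
    """
    requests = 1  # the worker that carries the tick from phase to phase
    for _name, job_count, parallel_width in phases:
        usable = min(parallel_width, job_count, max_active_workers)
        requests += max(0, usable - 1)  # extra workers for this phase only
    return requests


def naive_request_count(phases):
    """What the engine would see if every internal job were its own request."""
    return sum(job_count for _name, job_count, _width in phases)


# Hypothetical breakdown of one sound engine tick.
PHASES = [
    ("prologue", 2, 1),
    ("software voices", 12, 12),
    ("bus tasks", 6, 2),
    ("epilogue", 3, 1),
]
```

With a limit of 3 active workers, this model issues 4 requests for 23 jobs: one initial worker, two more for the wide software voice phase, and one more for the narrower bus phase.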

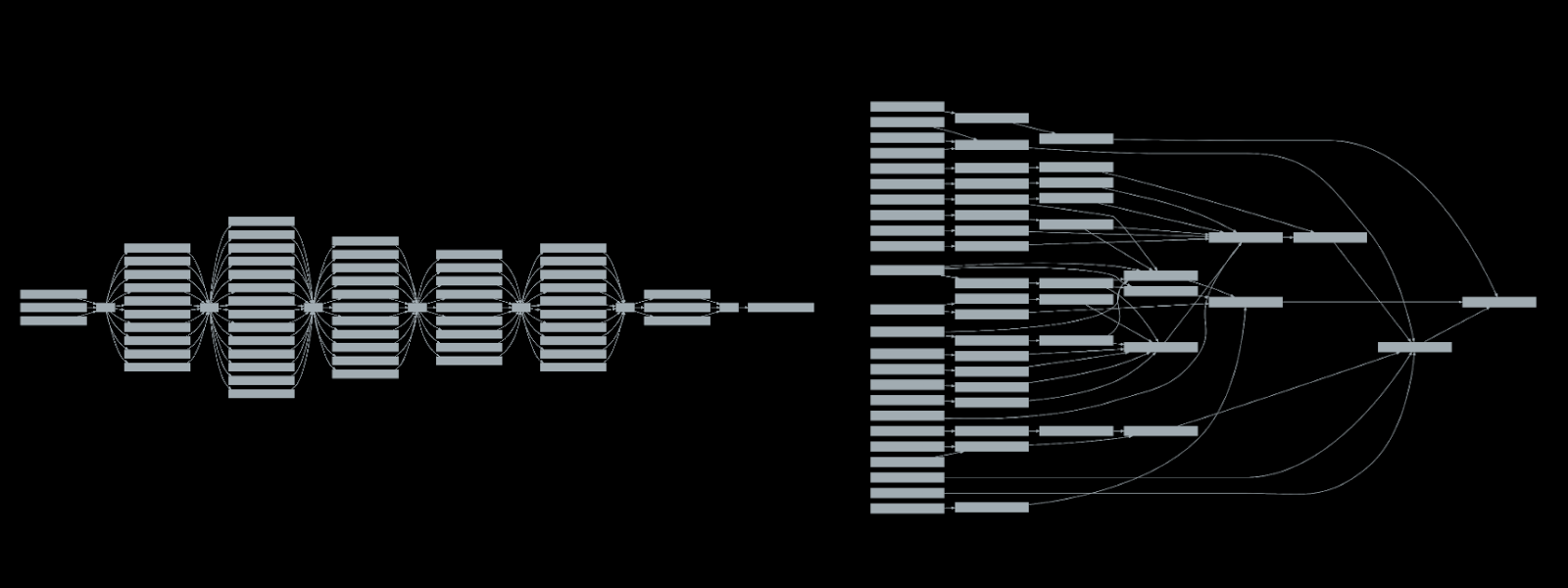

Going back to the example from before, and comparing how it operates on previous versions of Wwise, versus Wwise 2022.1…

If the game engine attempted to schedule up to 8 jobs at a time for doing Wwise work, then the parallel-for example from before would probably attempt to schedule 47 individual jobs for the game engine to process. Now, execution of the bus graph would likely have to schedule only 7 jobs, plus one job which would already be running, having just wrapped up the processing of the SwVoices.

Conclusion

In summary, with the new worker function model and new ways to express multi-core execution, we feel that the new JobManager should be much more effective for more developers. It has much higher throughput, improved latency, and greatly reduces the overhead required by the game engine to schedule the work. We think that this will allow developers to more effectively use the compute resources of modern multi-core CPUs to attain better performance, not just for processing of the Wwise sound engine, but also for the game as a whole.

Going back to the first example showing the issues of sub-par CPU scheduling, let’s consider that Wwise has been configured for more parallelism. It is likely that something like the following is more achievable.

Here, the game has set up the worker function callback, configured it to terminate early to avoid monopolizing a core, and has configured Wwise to run on more threads where it can. In this example, Wwise is able to finish its work faster, and avoids intruding on other time-critical work for the game.

Tips for Audio Scheduling

In addition to the overview above, we would like to offer some other tips for leveraging this new functionality. A misconfigured system could result in net performance losses in some situations, or there may be precious CPU cycles left on the table.

1. We strongly recommend not to naively max out how many workers you can use at a time

For one, launching workers is not a free operation, so issuing more worker requests than can actually be used may result in a loss of CPU time. If you see that you have many worker requests which are completing very quickly, while the sound engine consistently runs ahead of schedule, it may be that you should lower the maximum number of workers that Wwise should make requests for.

Besides that, the entire sound engine is not massively parallel, so do not expect that running across eight cores will result in the sound engine taking one-eighth the amount of time to execute.

Lastly, launching more workers, or initializing more worker threads to be able to run Wwise jobs, may require more memory, due to extra thread-local heaps and caches that may be required. In the near term, this would be perceived as a minor waste of memory, but in the long term – on PC, for example – this could result in other stability issues if consumers are running your game on a 128-core CPU and your game naively configures the job manager to run on 128 workers. If Wwise’s memory systems are configured to limit how much memory is allocated, then you might accidentally start running into those memory limits, causing issues in audio playback.

It may be that simply having a max of 3 or 4 workers is all you need to satisfy your performance requirements, and that can still give a significant benefit! If that’s what works, then leave it at that!
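
One way to avoid scaling the worker count with the machine is to clamp it. The Python sketch below shows one possible policy; the function name, the default cap of 4, and the idea of reserving one core for the rest of the game are assumptions for illustration, not recommendations from the Wwise documentation. The right cap for your title should come from profiling.

```python
def choose_max_workers(logical_cores, cap=4, reserved_cores=1):
    """Pick a worker limit that does not grow without bound on large CPUs.

    cap: upper bound chosen from profiling (assumed to be 4 here).
    reserved_cores: cores left for other game work (assumed to be 1 here).
    """
    available = max(1, logical_cores - reserved_cores)
    return min(cap, available)
```

With this policy, a 128-core machine is configured for 4 workers, the same as an 8-core machine, while a 2-core machine falls back to a single worker.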

2. Don’t assume audio CPU performance is no longer a concern

One thing I’m sure some developers have said, as they start moving onto higher-end platforms, is…

“We have next-gen CPUs now, so performance is not an issue, right?”

…only to find a few years later they’re staring down a fully-loaded CPU timeline, such as below:

Even on new hardware, we urge you not to fall into this mentality, even if it may feel like you have a lot of room for CPU execution today. In particular, just because these systems are now available to run audio across multiple cores does not mean that you suddenly have unlimited CPU time!

Certainly, we have seen some game teams already start to saturate the CPU on current-gen consoles, so it is still worth identifying and acting on improvements that can affect the total CPU usage of your game – which can still include keeping audio performance under control!

3. Audio may not have to be executed at a time-critical priority level

One thing that is worth considering when balancing Wwise execution with game engine execution is if audio still has to be executed at a time-critical priority.

In the past, our guidance has been to run the EventMgr thread at a very high priority so that it is always able to schedule the necessary work. However, the examples shown above demonstrate how there can be some value in letting other bursts of time-critical work run at a higher priority instead of audio. That is, one reason to leverage multi-core processing may be to give audio execution a lot more leeway in the scheduling, and effectively allow scheduling to be a bit “lazier”.

It may be desirable to let time-critical game work finish earlier, while other audio work is treated as a normal-or-high priority, so that audio work can fill in other bubbles of the CPU timeline that your game may have. For example, if you’re running Wwise at the stock setting of 512 samples-per-frame, that means the sound engine ticks at only about 94 Hz (at 48 kHz). If your game is targeting video-render rates of 120 Hz or higher, then that is probably the more important performance target to hit, and it is a higher priority to complete first due to the narrower window of time available.

4. Reduce Wwise’s total latency/device buffer size

Similarly, it may be that you can use the extra CPU resources to lower the amount of audio buffering Wwise works with, because the audio updates can complete faster.

Again, Wwise’s default settings are 512 samples-per-frame on all platforms, with system output devices initialized with 4 frames’ worth of data. This gives a total software latency of 2048 samples of buffered data, which is roughly 42.7 ms of latency (@ 48 kHz).

Given that games targeting 60 or 120Hz for video can hit less than 35 milliseconds of latency, it may be that audio starts to run slightly behind the video presentation! Moreover, there may be other concerns with audio latency due to running in a cloud-streaming environment, or to handle 3D Audio processing.

If audio can complete more quickly due to use of multi-core resources, and you have the available CPU budget otherwise, it may be worth checking to see if these numbers can be lowered. For example, if your audio wall-time is consistently ahead of schedule, you may be able to lower the samples-per-frame from 512 to 256. Alternatively, it may be possible to reduce the refills-in-voice because any spikes in audio frametime can be recovered from more quickly.
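
The arithmetic behind these figures is straightforward to reproduce. The helper names below are ours, and a 48 kHz sample rate is assumed throughout, as in the text.

```python
def tick_rate_hz(samples_per_frame, sample_rate_hz=48_000):
    """How often the sound engine must produce a frame of audio."""
    return sample_rate_hz / samples_per_frame


def buffered_latency_ms(samples_per_frame, num_frames, sample_rate_hz=48_000):
    """Latency contributed by the buffered output frames, in milliseconds."""
    return 1000.0 * samples_per_frame * num_frames / sample_rate_hz


DEFAULT_TICK_RATE = tick_rate_hz(512)           # 93.75 Hz
DEFAULT_LATENCY = buffered_latency_ms(512, 4)   # about 42.67 ms
REDUCED_LATENCY = buffered_latency_ms(256, 4)   # about 21.33 ms
```

Halving the samples-per-frame to 256 halves the buffered latency but doubles the tick rate to 187.5 Hz, so each audio tick has half as much wall-clock time to complete. Keeping 512 samples-per-frame and buffering 3 frames instead of 4 gives 32 ms without changing the tick rate.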

5. Try to manage the affinities of workers, if you can

Next, we would recommend trying to manage the affinities of job workers carefully, where you can, and if you have the time to accommodate such balancing. This can have a significant effect on how much CPU time Wwise consumes for audio processing, and may be worth consideration.

The biggest recommendation would be to keep Wwise workers on the same CPU cluster or core-complex, for appropriate systems. We tend to synchronize some state across workers and cores, and having to share data across CPU clusters can slow things down.

Another subtle thing is that for systems that support simultaneous multi-threading, it may be desirable to only run audio jobs on one of the two hardware threads of a single core at a time. Some portions of our audio processing can issue SIMD operations in a very dense manner, and can saturate the execution unit resources available on a CPU core. It may be preferable to try to run Wwise workers on separate cores so that workers are not competing with each other for those resources. This may be especially valuable if you have many other jobs that you know are much lighter on FP workloads, which can be scheduled concurrently to the Wwise workers.
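
Both recommendations, staying within one cluster and using only one hardware thread per core, can be expressed as a simple selection over the CPU topology. The Python sketch below uses an invented topology of 2 clusters, 4 cores per cluster, and 2 hardware threads per core; how you query the real topology and apply affinities is platform-specific and is not covered here.

```python
def pick_audio_cpus(topology, cluster, max_workers):
    """Choose logical CPUs for audio workers.

    topology: list of (logical_id, core_id, cluster_id) tuples.
    Returns at most max_workers logical CPUs, all in the given cluster,
    with no two sharing a physical core.
    """
    first_thread_per_core = {}
    for logical_id, core_id, cluster_id in sorted(topology):
        if cluster_id == cluster and core_id not in first_thread_per_core:
            first_thread_per_core[core_id] = logical_id
    return sorted(first_thread_per_core.values())[:max_workers]


# Hypothetical machine: 8 cores, 2 threads each, 4 cores per cluster.
TOPOLOGY = [
    (core * 2 + thread, core, core // 4)
    for core in range(8)
    for thread in range(2)
]
```

For this machine, three workers in cluster 0 would be placed on logical CPUs 0, 2, and 4, leaving the sibling hardware threads free for jobs that are lighter on floating-point work.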

6. How many Wwise Worker Requests can be in flight at one time?

Some game engines need memory, or other resources, for their job systems to be created or reserved upfront, so as to ensure a consistent resource footprint in all scenarios for the lifetime of a game. As a part of this, one may be wondering how many worker requests may be in flight at once, and therefore how many resources to allocate, especially since we request jobs on an unpredictable basis and at an unpredictable rate.

The maximum number of worker requests that may be outstanding at any time – both workers running, and worker requests that are yet to start execution – is not strictly “the maximum number of workers Wwise is initialized to use, across all job types” but rather, it is double that number.

This is because, theoretically, all workers may simultaneously be in a state where each one is requesting a new worker while still running as a worker that is about to retire.
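
As a sizing rule, this can be written as a one-line helper. In the Python sketch below, we assume that "across all job types" means summing the per-job-type worker limits; the job type names and limits are placeholders, so check the SDK documentation for how uMaxActiveWorkers is organized in your version.

```python
def max_outstanding_worker_requests(max_active_workers_by_job_type):
    """Upper bound on worker requests that are running or waiting to start.

    Each worker may be about to retire while the request for its replacement
    is already pending, hence the factor of two.
    """
    return 2 * sum(max_active_workers_by_job_type.values())


# Placeholder configuration: job type names and limits are illustrative only.
LIMITS = {"generic": 1, "audio": 3, "spatial_audio": 2}
SLOTS_TO_RESERVE = max_outstanding_worker_requests(LIMITS)
```

With these placeholder limits, a job system that preallocates its resources would reserve room for 12 outstanding Wwise worker requests.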

The Present and Future

Wwise 2022.1 has all of the core job and profiler functionality available in the SDK, today. You can get it today and start utilizing it in your game project, and can work to verify proper behaviour with any custom plug-ins you may have.

The Unreal Integration for Wwise 2022.1 also implements support for these Worker Functions, which you will be able to configure in your project’s initialization settings.

Below is a screen shot from Unreal’s Timing Insights, showing not just the Wwise jobs running on Unreal’s worker threads in cooperation with other jobs Unreal has, but also showing some of the more detailed profiling data directly available inside of Timing Insights as well.

Future patches of Wwise 2022.1 will continue to incorporate further performance improvements, some of which were hinted at in this presentation. These include…

- Setting up the entirety of the audio tick to run in worker functions, not just the Voice and Bus processing, so that the footprint of the EventMgr thread is nearly eliminated

- Elimination of many temporary allocations we make in the engine, as well as some of our supported plug-ins, to reduce cross-thread contention that some memory allocation systems incur

- Eliminating some other logic that acquires critical sections during points of parallelism across jobs, which can radically slow things down. The items we have identified currently are voices making requests for I/O streams, and voices executing as a part of events that request GetSourcePlayPosition support

Lastly, we should note that we have only jobified the EventMgr thread so far. We have not yet updated our BankMgr thread, so for now, that will continue behaving the same as it has before. Performance issues with it are something we’re definitely aware of, though, and want to resolve in a future release of Wwise.

That’s All For Now

If you want to review other information on the JobManager system, we have an article in the Wwise SDK documentation, under “Going Further” and “Optimizing CPU usage”.

As well, feedback after release is always appreciated. In particular, CPU captures can be very helpful, both for validating past decisions and for informing future decisions and developments.

Crucially, we won’t know about problems you’re experiencing if you don’t tell us about them. Even if you don’t require a fix for an issue in order to ship your game, it is worth knowing that you did run into an issue – because then it is something we can look into fixing for the future.

Thanks for reading!
