Clocker is an indie adventure puzzle game developed by Wild Kid Games. The storyline focuses on the relationship between a father and his daughter, with time as the connecting bond. The game features a unique time mechanism, a compelling storyline and challenging puzzles, all delivered in an artistic hand-painted graphics style. It uses a two-character narrative technique and lets you control either of the two characters separately when solving puzzles during your adventure.

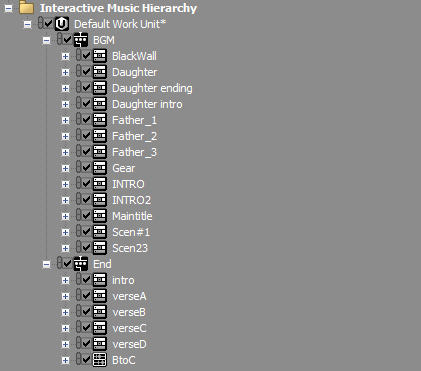

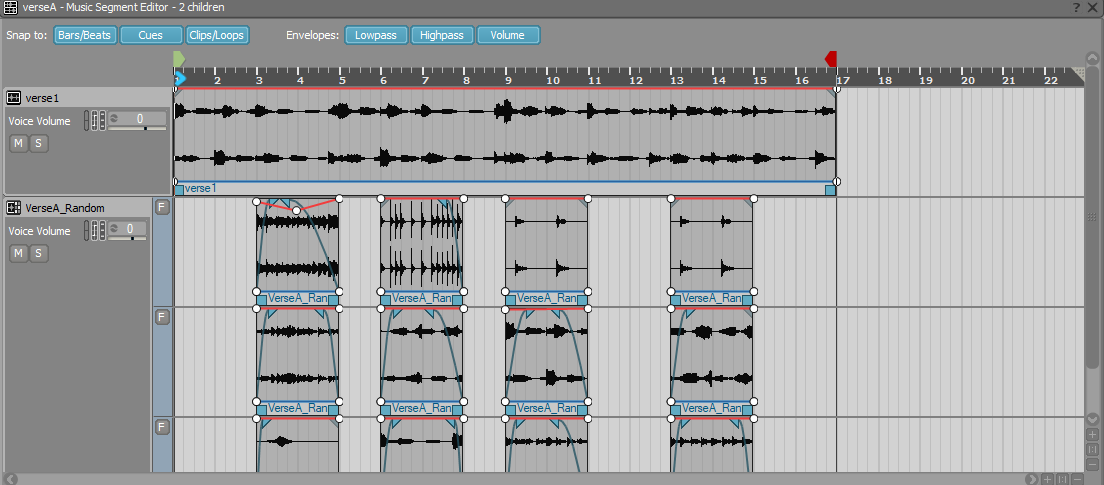

In part 1 of this blog, we focused on how we designed audio that complemented the game's complex logic. Here, we will focus on the game's interactive music. We knew that we wanted to influence the players' experience and guide their actions via the in-game music. We also wanted to express creative ideas with the game music. Altogether, we created 14 pieces of music for the game. In the image below, you can see them as they appear in our Wwise project.

We used the Father-Daughter Theme and the Chapter Theme to reflect emotional changes. As previously mentioned, the father’s world is all in black and white. The producer wanted to emphasize the father's depressed state. So, we created the Father Theme with sorrow as its keynote. In contrast, the Daughter Theme was created with lively musical elements. For special scenes and storyline considerations, we added more emotional elements for each chapter, based on the keynote, making players feel they are getting closer to the daughter while moving on to the next chapter. We hoped that the in-game music could create an atmosphere with the proper emotional elements at the right time.

In fact, we wanted players to stay in the daughter’s world longer, because there are more hidden storylines for her in the game. After several test runs, we discovered that most players did spend longer periods of time in the daughter’s world. They found the Father Theme too sad and the Daughter Theme more relaxing. So, we essentially achieved what we wanted, which was to guide players with the in-game music: in this case, to guide them to spend more time with the Daughter Theme.

Designing the interactive music for Clocker

At the end of the game, there is a very touching storyline. Players have to control the father and walk through the time rift. During this period, he grows old very quickly. But he doesn’t care; all he wants is to find his daughter. As the father keeps moving forward, the background changes dramatically to reflect the rapid passage of time and his aging.

Players go through the game content in several action phases:

- The father goes into the time rift;

- He explores his surroundings carefully and accidentally falls down;

- He keeps moving forward and gets out through a rock tunnel;

- Everything changes dramatically, and the father grows old very quickly;

- He reaches the end and falls into the abyss of time and space.

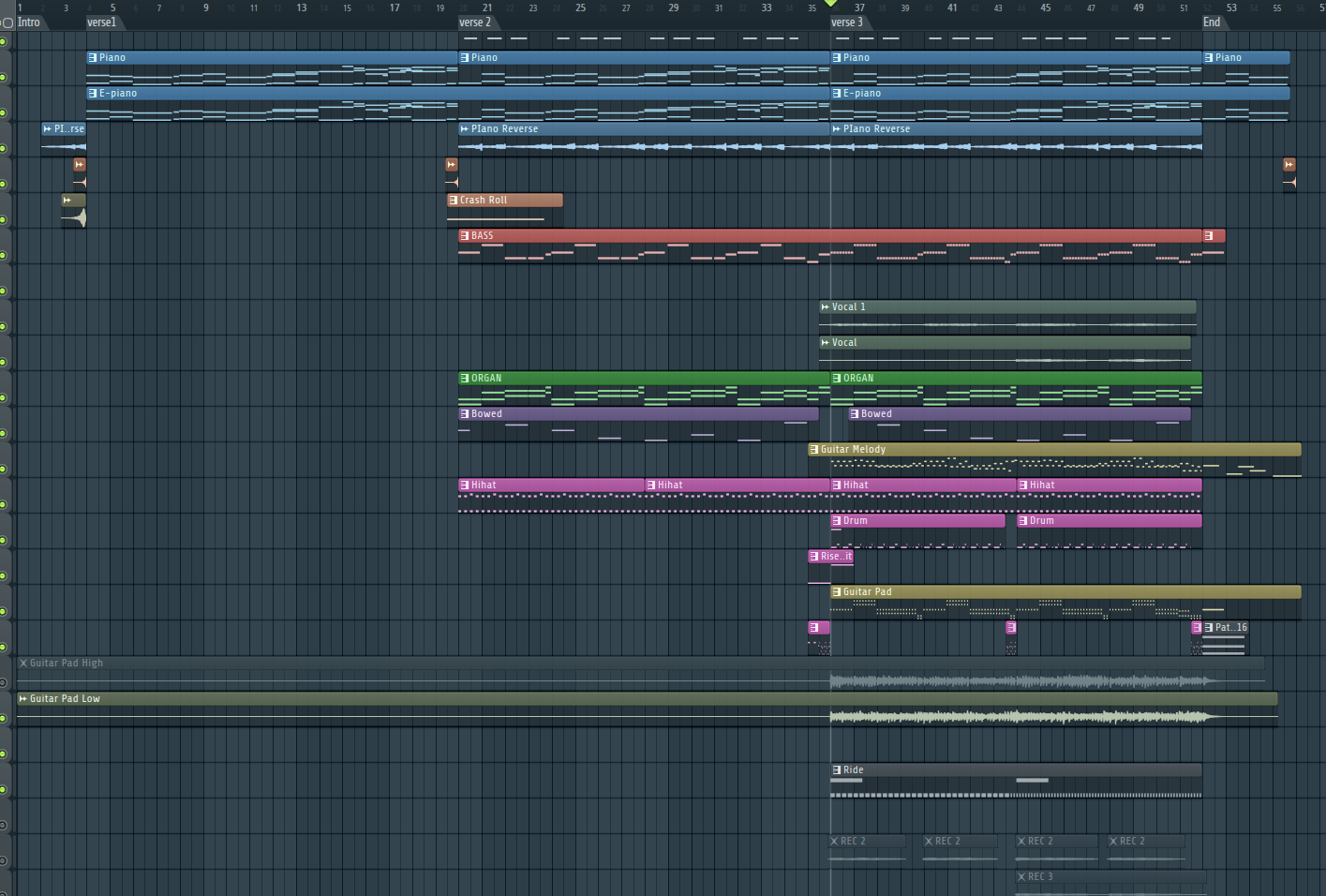

These action phases are deployed progressively, so the music has to be designed in the same way. We created 5 progressive music segments based on these interaction phases:

- Intro (introducing mysterious emotions);

- Verse1 (building up the atmosphere with block chords and simple melodies);

- Verse2 (adding some low-frequency sounds and hi-hats to underscore the exploration);

- Verse3 (adding drums, guitar, pads and vocals to emphasize emotion);

- End (quieting down and ending the process).

5 progressive music segments

As a result, players experience emotional changes throughout the game. Whenever the game content changes, the atmosphere also changes to reflect the father's love and the passage of time. This was our main focus in the music design: emotional shifts.

As for the composition, there are many ways to express a character’s emotions, such as rhythms, melodies, harmony patterns and instruments. However, in Clocker, only melodies and instruments can be used in the game score. And so, we considered a second element in the music design: interaction.

Game music is quite different from other kinds of music. In a game, the visuals, the camera and the content are not fixed. They change in real time based on the player’s actions. The music has to interact with these actions accordingly.

Players can move the character forward or backward, or stand still. If the character stops but the music keeps progressing, the visuals and the audio no longer match: players could be standing in action phase 2 while the music for action phase 4 is already playing. There is no way to predict the player’s actions, so in order to follow and guide them, we created interactive music.

As previously mentioned, there are many approaches to composition, but only a few can be used in this game score. To get what we wanted, the following requirements had to be met:

- Except for Intro and End, the remaining 3 music segments must be seamlessly looped. No matter how long players stop and stay, the proper segment must keep playing until they resume.

- The transition between music segments must also be seamless to make sure the emotional changes feel natural.

- Variations must be introduced when looping a single music segment. When players stop and stay for a long time, they should not be annoyed or bored.

- When players move on, the music segment should change naturally and without noticeable delay. Otherwise, the sense of immersion is affected: if the change is too slow, the atmosphere will be out of line with the game content.

Based on these requirements, we came up with the following solutions:

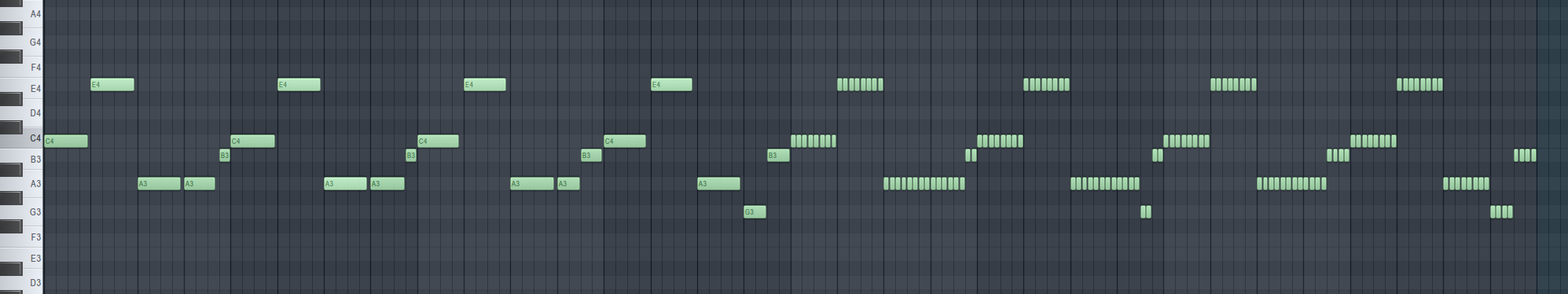

1. All music segments were looped seamlessly, using FL Studio as the DAW.

Looped segments from the chord part

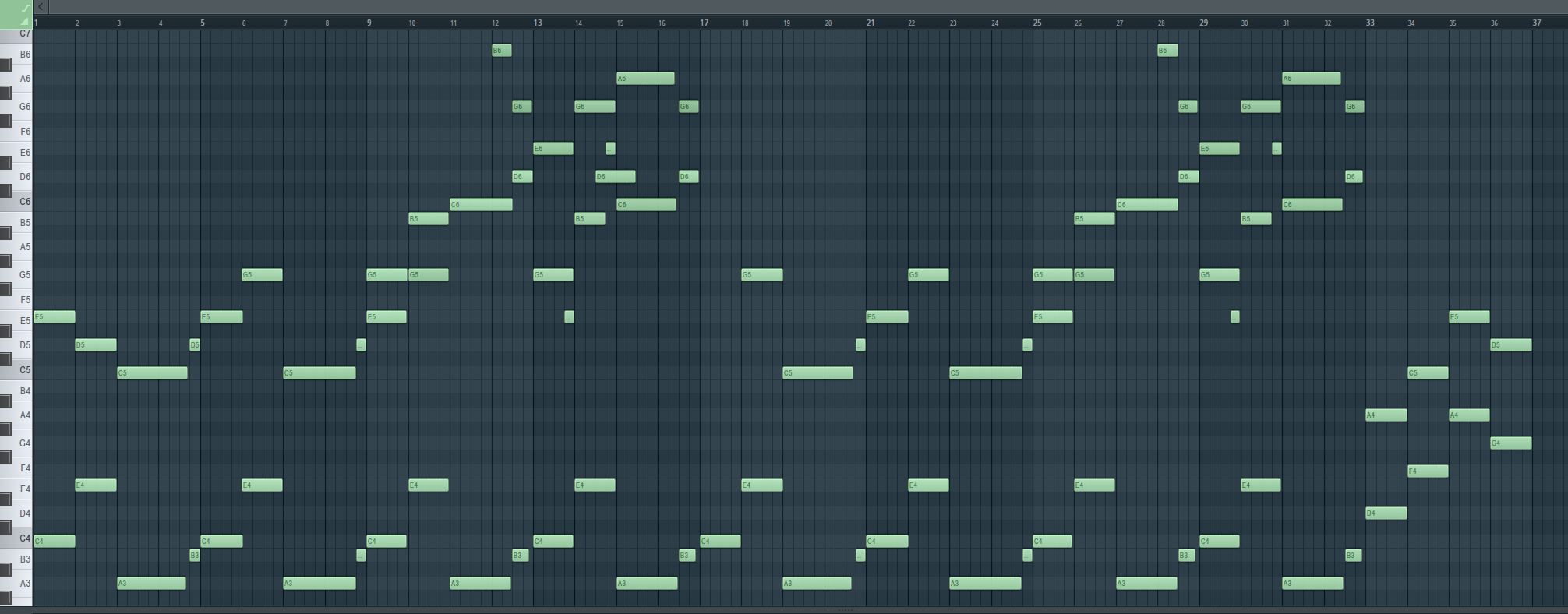

2. All music segments were designed with the same tempo (BPM), underlying instruments and harmony patterns. We used a 1-3-6-1 chord pattern to render emotions and ensure natural transitions, regardless of the order of the segments. This also laid a foundation for the playback logic design later.

1-3-6-1 root note pattern

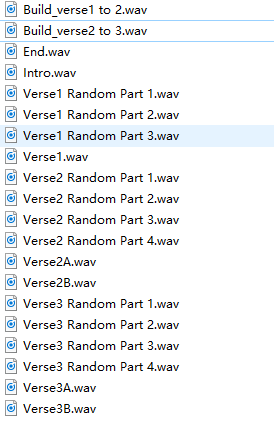

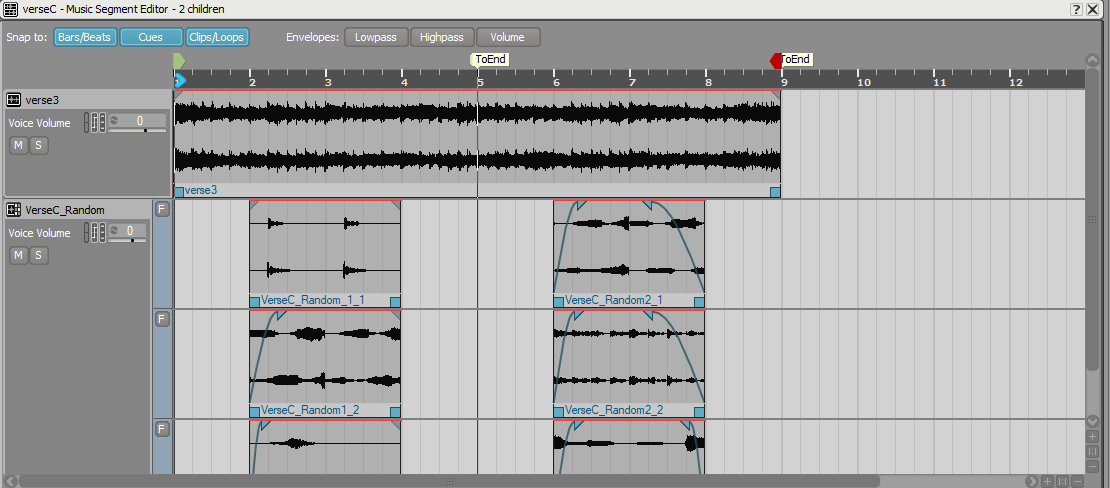

3. We added some melody and content variations on top of each music segment. These variations complement the underlying instruments and are played randomly. When a music segment is looped and repeated, you always hear different content, but with the same emotions. In the image below, you can see more details.

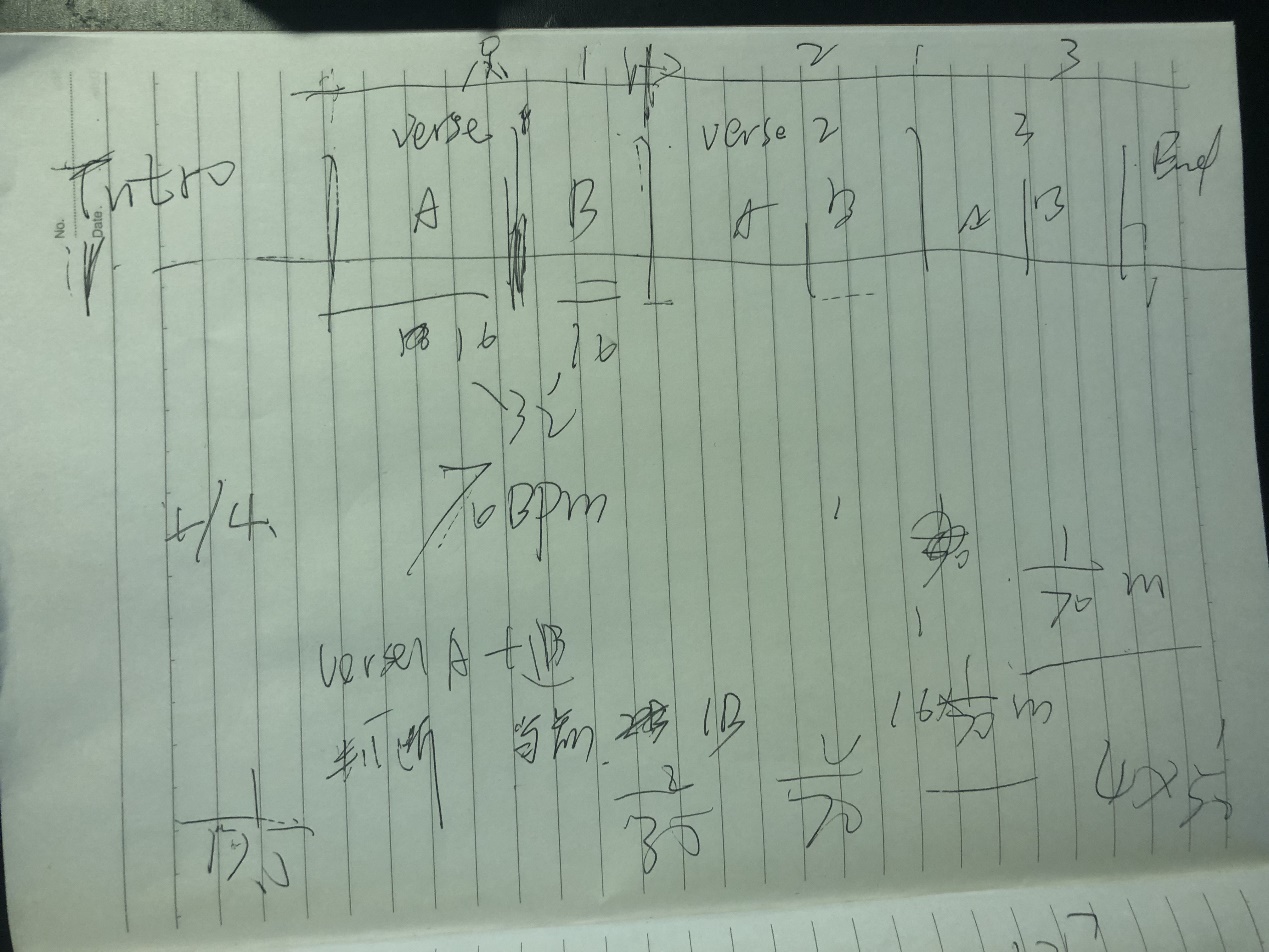

4. We split a whole music piece into multiple sections (horizontal) and layers (vertical). In the image below, except for Intro and End, the three remaining music segments were split into Verse1 - 3. Each Verse is 16 bars (64 beats) long, whereas a complete loop is 8 bars (32 beats). So we split each of Verse1 - 3 into A and B, so that no new content needed to be added to any of the loops. In addition, we split the melodies of Verse1 - 3 into separate tracks (Verse Random Parts). These tracks are used to add variations to looped segments and to avoid auditory fatigue. Finally, we created another two tracks (FX track and Fills track) to transition between segments and build emotion (Build_verse1 to 2).
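The horizontal and vertical split can be pictured as a small data model. The following Python sketch is only an illustration, not the Wwise project itself: the `Verse` class, the layer names, and the `pick_layer` helper are hypothetical, and the rule of never repeating the same layer twice in a row is an assumption about how random variations might avoid fatigue.

```python
import random
from dataclasses import dataclass, field
from typing import Optional

BEATS_PER_BAR = 4
LOOP_BARS = 8  # one complete loop: 8 bars = 32 beats


@dataclass
class Verse:
    name: str
    # Horizontal split: each 16-bar verse is cut into two 8-bar loops.
    halves: tuple = ("A", "B")
    # Vertical split: melody layers that can play on top of the base loop.
    random_parts: list = field(default_factory=list)
    _last: Optional[str] = field(default=None, repr=False)

    def total_beats(self) -> int:
        return len(self.halves) * LOOP_BARS * BEATS_PER_BAR

    def pick_layer(self, rng: random.Random) -> Optional[str]:
        """Pick a melody layer for the next loop pass, avoiding an immediate repeat."""
        if not self.random_parts:
            return None
        candidates = [p for p in self.random_parts if p != self._last]
        self._last = rng.choice(candidates or self.random_parts)
        return self._last


# Layer names are placeholders, not the names used in the project.
verse1 = Verse("Verse1", random_parts=["melody_a", "melody_b", "melody_c"])
rng = random.Random(0)
layers = [verse1.pick_layer(rng) for _ in range(6)]

print(verse1.total_beats())  # 64
print(layers)
```

Each pass through a loop draws a fresh layer, so the base harmony stays the same while the melodic content on top keeps changing.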

So far, we have looked at creating and splitting music segments based on the above requirements. After a lot of trial and error, we established our final playback logic.

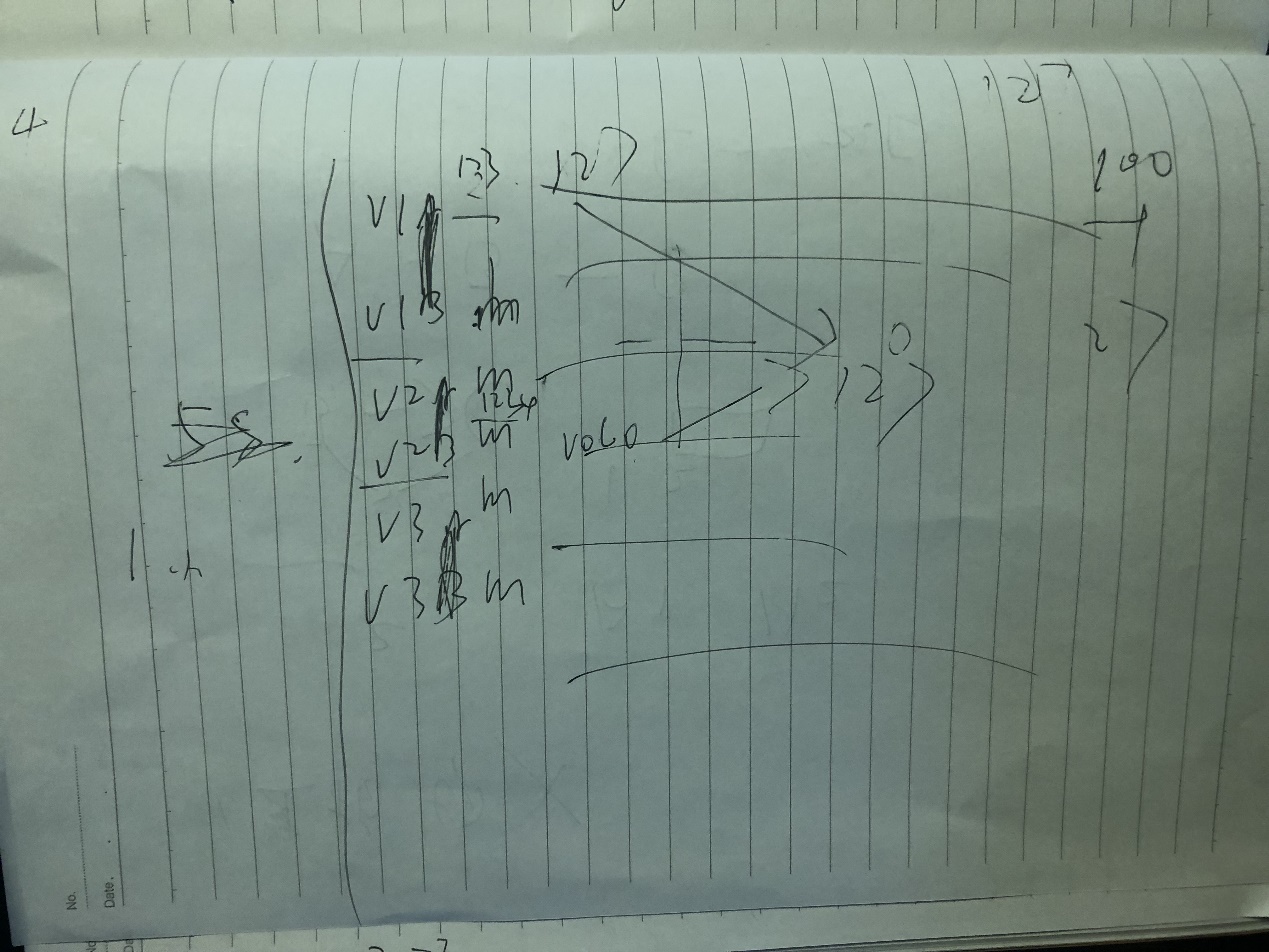

An example of raw playback logic (draft)

The final playback logic (draft)

As you can see, in our final playback logic, we divided the map into 4 areas. In each area, a Verse is played, and there is a transition at the boundary between areas (i.e. the triggering point). When players move to the next area, the current Verse plays to the end of its current 4-beat bar, then seamlessly transitions to Build_verse 1 to 2 (or Build_verse 2 to 3 for the transition from Verse2 to Verse3), and then transitions to the next Verse.
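The sequence triggered at a boundary can be sketched as a tiny planning function. This is a simplified Python model under stated assumptions: in the project this behavior is configured as Wwise music transitions rather than written as game code, the function name `plan_transition` is hypothetical, and verse pairs other than the two named in the text are assumed to have no build segment.

```python
# Build segments named in the text; other verse pairs are assumed to have none.
BUILDS = {
    ("Verse1", "Verse2"): "Build_verse 1 to 2",
    ("Verse2", "Verse3"): "Build_verse 2 to 3",
}


def plan_transition(current: str, target: str) -> list:
    """Return the ordered playback steps after the player crosses a trigger point."""
    if current == target:
        return [current]  # still in the same area: keep looping
    steps = [f"{current} (finish current bar)"]
    build = BUILDS.get((current, target))
    if build:
        steps.append(build)
    steps.append(target)
    return steps


print(plan_transition("Verse1", "Verse2"))
# ['Verse1 (finish current bar)', 'Build_verse 1 to 2', 'Verse2']
```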

The tempo of the game score is 70 BPM, so each beat lasts about 0.86 s and each 4-beat bar about 3.43 s. When the character reaches a triggering point, we only need between 1 beat (0.86 s) and 3 beats (2.57 s) to make the transition between the two verses, without players even noticing.
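The timing can be checked with a few lines of Python. This is a plain arithmetic sketch; the helper `wait_until_next_bar` is a hypothetical name. It works from the unrounded beat length (60 / 70 s), which gives roughly 3.43 s per bar and 2.57 s for three beats.

```python
BPM = 70
BEATS_PER_BAR = 4

seconds_per_beat = 60 / BPM
seconds_per_bar = seconds_per_beat * BEATS_PER_BAR


def wait_until_next_bar(beats_into_bar: float) -> float:
    """Seconds left before the next bar line for a playhead inside the bar.

    A playhead exactly on a bar line is treated as the start of a new bar,
    so it waits one full bar.
    """
    remaining_beats = BEATS_PER_BAR - (beats_into_bar % BEATS_PER_BAR)
    return remaining_beats * seconds_per_beat


print(round(seconds_per_beat, 2))        # 0.86
print(round(seconds_per_bar, 2))         # 3.43
print(round(wait_until_next_bar(1), 2))  # 2.57 (3 beats left)
print(round(wait_until_next_bar(3), 2))  # 0.86 (1 beat left)
```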

The latency was acceptable to us, so we moved on to the next task: implementing and testing the sound engine.

Implementing the sound engine

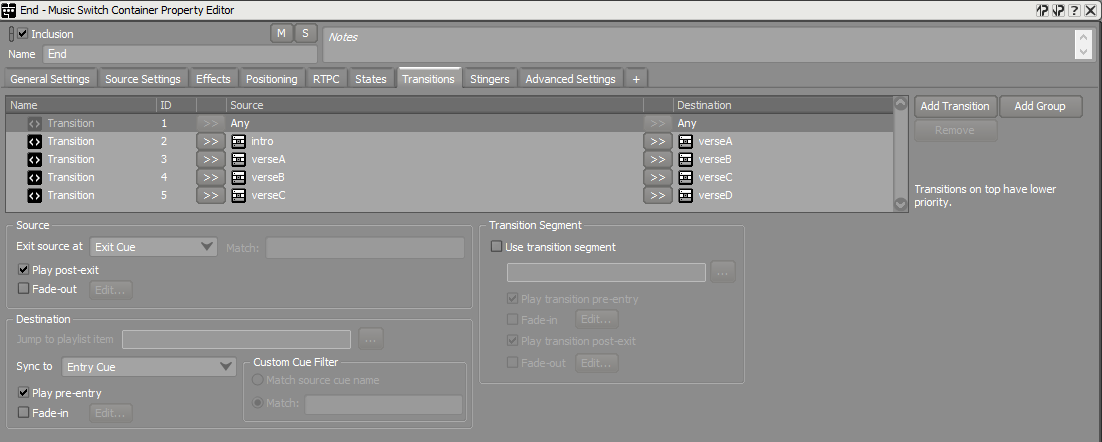

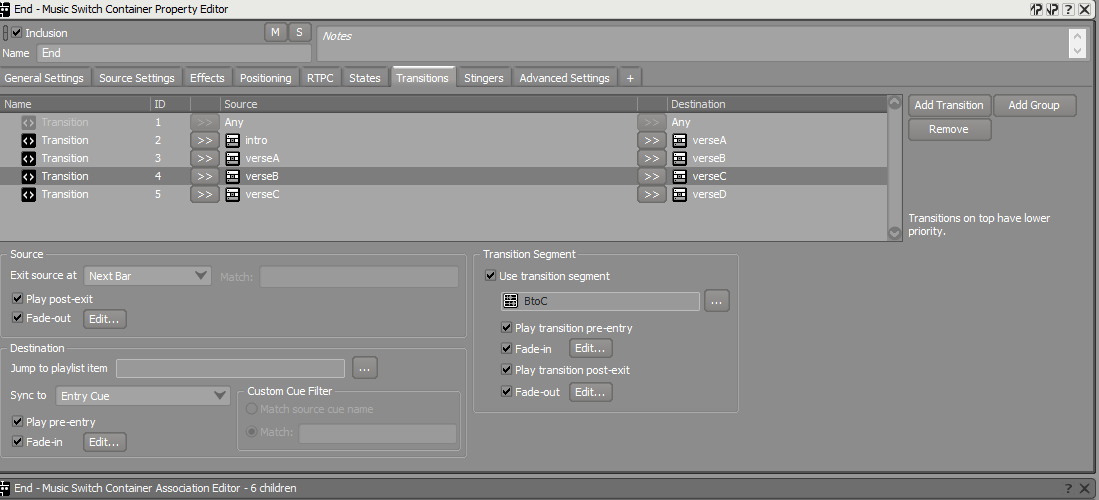

We created transitions for all music segments in our Wwise project. In the image below, you can see how we did it.

Transitions

1. For the transition from Intro to VerseA, we used "Exit Cue" as the exit point. Intro is relatively short, as is the transition, so no loops were needed.

2. For the transition from VerseA to VerseB, we used "Next Bar" as the exit point. It transitions to VerseB when that bar completes. As previously mentioned, we used a 1-3-6-1 harmony pattern to switch the music. VerseB starts with a harmony at 1. So the transition is seamless.

3. For the transition from VerseB to VerseC, we used "Next Bar" and a transition segment. VerseC is richer than VerseB in terms of instrumentation. Without a transition segment between them, the change would have been awkward. We therefore used a chord at 1 and added drums to that segment to transition the music. With the added drums, it feels more natural when transitioning to other instruments, such as a guitar.

VerseB to VerseC

4. For the transition from VerseC to VerseD, "Next Bar" could not be used, because VerseC has two vocal lines, while VerseD is an ending segment with a strong finale. There is no way to predict when players will reach the triggering point of VerseD. With "Next Bar", a transition could land in the middle of the first vocal line, which would sound unnatural. We therefore used "Next Cue" to transition to VerseD. In the image below, you can see two cue points with the same name. They are positioned right at the end of the fourth bar.
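The difference between the two exit rules can be shown with a simplified Python model. It is not the Wwise scheduler: the function names are hypothetical, positions are counted in beats from the start of the segment, and the cue positions in `CUES` are illustrative values, not taken from the project.

```python
import math

BEATS_PER_BAR = 4


def next_bar_exit(playhead_beats: float) -> float:
    """Exit at the first bar line strictly after the playhead."""
    return (math.floor(playhead_beats / BEATS_PER_BAR) + 1) * BEATS_PER_BAR


def next_cue_exit(playhead_beats: float, cue_beats: list, loop_beats: float) -> float:
    """Exit at the first cue after the playhead, wrapping into the next loop pass."""
    for cue in sorted(cue_beats):
        if cue > playhead_beats:
            return cue
    return loop_beats + min(cue_beats)  # no cue left in this pass: use the next pass


CUES = [16, 32]  # illustrative cue positions in beats (assumption)

print(next_bar_exit(5.5))            # 8
print(next_cue_exit(5.5, CUES, 32))  # 16
print(next_cue_exit(20, CUES, 32))   # 32
```

With a bar-based exit, the segment can leave after any bar, including the middle of a vocal phrase. With cue-based exits, it can only leave at the positions the composer marked as safe.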

Performance Optimization

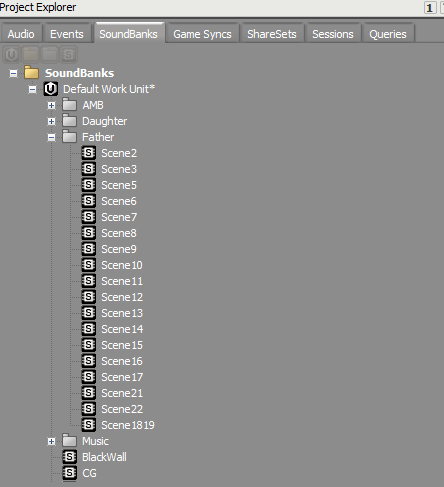

Managing SoundBanks in the project

In the game, there are 22 scenes in total. Logic lines, ambient sounds and music pieces were split into different SoundBanks, which are loaded and unloaded for the corresponding scenes. This reduces memory usage and improves performance. In the image below, you can see the SoundBanks and the scenes from the project.
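Per-scene loading can be sketched as a small bookkeeping class. This Python model is illustrative only: the scene and bank names are invented, the `BankManager` class is hypothetical, and the `load` and `unload` callbacks stand in for whatever bank calls the engine integration provides. Keeping a bank loaded when two consecutive scenes share it is an assumption, not something the project is known to do.

```python
# Hypothetical scene-to-bank table; real names come from the Wwise project.
SCENE_BANKS = {
    "scene_01": {"Logic_01", "Ambience_01", "Music_Father"},
    "scene_02": {"Logic_02", "Ambience_01", "Music_Daughter"},
}


class BankManager:
    def __init__(self, load, unload):
        # `load` and `unload` are placeholders for the engine's bank calls.
        self._load, self._unload = load, unload
        self.loaded = set()

    def enter_scene(self, scene: str) -> None:
        wanted = SCENE_BANKS[scene]
        # Free banks the new scene does not need, then load the missing ones.
        for bank in sorted(self.loaded - wanted):
            self._unload(bank)
        for bank in sorted(wanted - self.loaded):
            self._load(bank)
        self.loaded = set(wanted)


log = []
manager = BankManager(
    load=lambda bank: log.append(("load", bank)),
    unload=lambda bank: log.append(("unload", bank)),
)
manager.enter_scene("scene_01")
manager.enter_scene("scene_02")
print(log[3:])
# [('unload', 'Logic_01'), ('unload', 'Music_Father'), ('load', 'Logic_02'), ('load', 'Music_Daughter')]
```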

SoundBanks

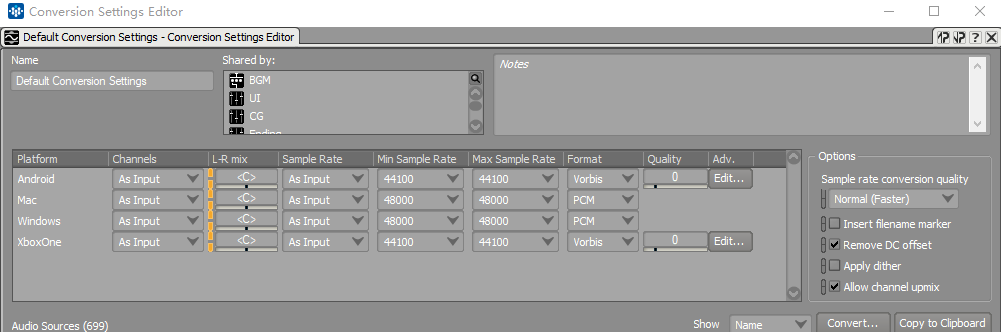

Compressing the audio for different platforms

This game is available on multiple platforms including Windows, Android, Mac and Xbox One. The in-game audio totals more than 1 GB. This is too big for a mobile platform. We used the Conversion Settings feature in Wwise to set the different formats for these platforms. For Android, the compressed package is only 58 MB.

Conclusion

Wwise is a very powerful sound engine. It saved us a lot of time and provided us with many more possibilities in the sound design than the stock Unity sound engine. Thanks to the Wwise community and readers. I look forward to learning from you and sharing with you from time to time.
