Highly interactive and recognizable game sounds not only underscore the "unique character" of these well-made games, they also provide a better gaming experience and help strengthen game systems. A trend emerging from the most successful games of the past few years is that many of them have become social platforms. If you are the parent of teenagers, you have probably noticed that they prefer multiplayer games where they can chat, be it Overwatch, Fortnite, or Minecraft. Of course, these games offer great graphics and perfectly tuned gameplay, and are tons of fun, but letting players connect through speech seems to be a key reason they return to the game. Is there a better way to connect friends than by allowing them to speak freely with each other?

In-game voice is becoming more popular on various gaming platforms, especially for games with a primary focus on online multiplayer modes, such as Battlefield, The Division, or League of Legends. Any eSports or FPS player knows that, amid intense gunfire, voice is a more natural and efficient way to communicate than text. In addition, all the small talk that goes on provides another source of entertainment while playing.

Historical Problems Explain the Slow Adoption of Voice Chat in Games

Historically, especially on mobile devices, voice chat integration wasn't considered paramount by most AAA game creators, despite its importance and advantages. The lack of services at the OS level of mobile devices may have been responsible for its late adoption.

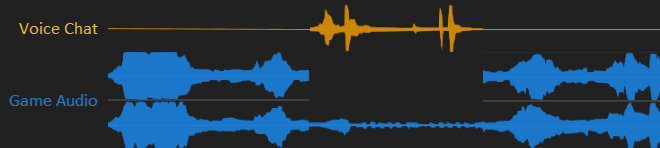

Mobile operating systems make it difficult - and sometimes impossible - to mix the ‘call volume’ stream, used for voice chat, with the game audio, which uses the ‘media volume’, without causing peculiar artifacts. For example, in configurations where the game uses ‘media volume’ for the game audio and ‘call volume’ for the chat, the audio output is systematically downmixed from stereo to mono each time a player speaks. This makes it difficult to remain immersed in the game or to keep track of your opponent's movements!

Another typical problem is the sudden discrepancy in volume between the game and chat audio: here, the whole game audio is automatically ducked each time voice chat occurs. Needless to say, these limitations range from unpleasant, in most cases, to breaking the whole game experience, in extreme cases!

Figure 1 - Representation of limitations when voice chat is managed using mobile OS services.

Certain games implemented voice chat playback using the media volume, which solved the channel downmix and the bouncing volume artifacts. This small victory was short-lived, however, as it exposed a new set of problems: the lack of noise reduction and echo cancellation, which caused voice overlapping and echoing among players.

Game Multimedia Engine (GME) Integration in Wwise

GME integration in Wwise solves the aforementioned problems and offers an end-to-end service covering the capture, processing, encoding, transmission, and decoding of voice chat. GME is cross-platform, which lets players talk to each other even if they're using different devices, such as phones, consoles, or PCs. The solution also enables low-latency communication and high concurrency. On the latter point, GME has a proven record of delivering a consistent voice chat experience to hundreds of millions of users in games such as Arena of Valor and PUBG Mobile, which had an average of two million PCU (Peak Concurrent Users) every day, not so long ago.

The Wwise GME integration provides entry and exit points for voice chat to be fully integrated into the gaming experience. Individual voices can be treated with effects processing, spatial audio rendering, dynamic mixing, etc. just like any other pre-recorded audio source.

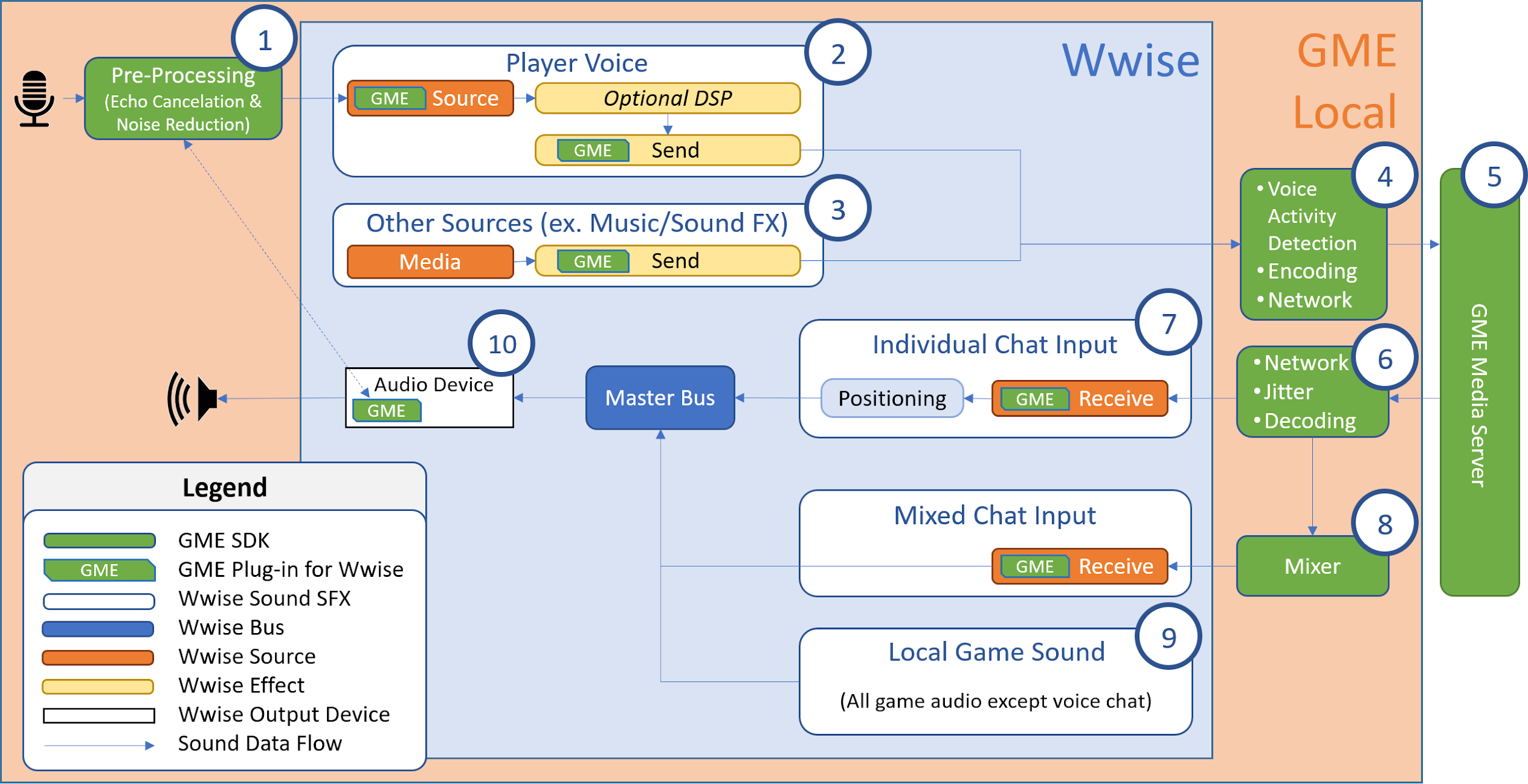

The following diagram shows the integration of GME in Wwise, both how the project is set up and how it is executed at runtime.

Figure 2 - GME integration in Wwise and runtime execution flow

1. GME captures the microphone input from the OS and applies noise reduction and echo cancellation. Echo cancellation needs the final rendered audio to operate, which is provided by the GME audio device plug-in (#10).

2. Local player voice chat source is sent to teammates through GME servers. Audio effects can optionally be applied.

3. Any other game audio source such as music, ambient sounds, SFX, etc. can also be sent to GME servers.

4. Voice activity detection and encoding optimizes network bandwidth.

5. GME media server receives voice chat and redirects individual streams to the proper receivers.

6. Reception and management of the incoming voices from GME servers and decompression into PCM audio.

7. Incoming voice chat is assigned to independent sound instances which allows additional DSP, distance attenuation, positioning, etc.

8. Voice chat can be mixed as a single audio input stream when desired.

9. All sounds, except audio received from GME servers, are locally triggered by the game.

10. Final audio mix is rendered by GME audio device plug-in, which is necessary for echo cancellation processing (#1).
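
To make step 4 more concrete, here is a minimal sketch of how voice activity detection can save bandwidth by skipping silent frames before encoding. It assumes a simple RMS energy threshold with a short "hangover" so word endings are not cut off. This is an illustration only, not GME's actual detector, and the names `rms`, `frames_to_send`, `threshold`, and `hangover` are hypothetical.

```python
import math


def rms(frame):
    """Root-mean-square level of one frame of float samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def frames_to_send(frames, threshold=0.02, hangover=2):
    """Return the indices of frames worth encoding and transmitting.

    Assumption: a frame counts as speech when its RMS reaches `threshold`.
    Up to `hangover` quiet frames after the last speech frame are also kept.
    """
    kept = []
    quiet_run = hangover + 1  # start in the silent state
    for index, frame in enumerate(frames):
        if rms(frame) >= threshold:
            quiet_run = 0
        else:
            quiet_run += 1
        if quiet_run <= hangover:
            kept.append(index)
    return kept


if __name__ == "__main__":
    silence = [0.0] * 160
    speech = [0.5] * 160
    print(frames_to_send([silence, speech, silence, silence, silence, silence]))
```

Only the speech frame and the two hangover frames that follow it are selected; the remaining silent frames would never reach the encoder or the network.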

Creative Use

Standard voice chat has been around for a while and is nothing new in itself. One of the interesting points with the GME integration in Wwise is that it is really easy to set up in creative ways that spice up the gameplay mechanics while enhancing the overall immersive experience.

As represented by (#2) in Figure 2, the captured voice can be treated with effects, if desired. As the player’s voice becomes a standard audio source in the pipeline, it is possible to process the signal with effects such as a compressor/limiter and EQ, for example, before sending the voice to GME servers. You could think of more drastic effects, to transform the player’s voice into a robot or a monster, but this needs to be tested in advance, as too much creative processing could interfere with the codec’s ability to correctly compress the signal to be sent to the server. If this is the case, then it’s possible to simply apply these effects on the receiving end, as shown by (#7) in Figure 2.

On the receiving end, the player can get either a downmix of all voices (#8) or each voice as an individual stream (#7). Keeping each voice separate offers interesting options that may reinforce certain aspects of the gameplay. For example, it enables the application of distance attenuation and/or positioning so players can better locate their surrounding teammates. Each individual voice can also have its own environmental reverberation (and dynamic early reflections if the application is using Wwise Reflect, for example!), which further refines the sense of physicality and presence in the room.
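
As a rough illustration of what per-voice distance attenuation and positioning mean numerically, the sketch below computes a left/right gain pair for one incoming voice. In a real project these curves are authored in Wwise rather than hand-coded; the inverse-distance rolloff, the constant-power pan law, the 2D coordinates, and all function and parameter names here are assumptions made for the example.

```python
import math


def distance_gain(distance, min_distance=1.0, max_distance=50.0):
    """Inverse-distance rolloff, clamped: full level up close, silent far away."""
    if distance <= min_distance:
        return 1.0
    if distance >= max_distance:
        return 0.0
    return min_distance / distance


def voice_gains(listener_xy, facing_rad, speaker_xy):
    """Return (left, right) linear gains for a teammate's voice.

    Assumptions: 2D world, angles counterclockwise from the +x axis,
    constant-power panning. Front and back are not distinguished.
    """
    dx = speaker_xy[0] - listener_xy[0]
    dy = speaker_xy[1] - listener_xy[1]
    distance = math.hypot(dx, dy)
    if distance == 0.0:
        pan = 0.0  # speaker on top of the listener: keep the voice centered
    else:
        relative = math.atan2(dy, dx) - facing_rad
        pan = -math.sin(relative)  # -1.0 is hard left, +1.0 is hard right
    angle = (pan + 1.0) * math.pi / 4.0
    gain = distance_gain(distance)
    return gain * math.cos(angle), gain * math.sin(angle)


if __name__ == "__main__":
    # Listener at the origin facing +y; teammate 10 units to the right.
    print(voice_gains((0.0, 0.0), math.pi / 2.0, (10.0, 0.0)))
```

With the listener facing +y and the teammate 10 units away on the +x side, nearly all of the attenuated signal lands in the right channel, which is the cue that lets a player locate the speaker.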

You can even imagine scenarios, in a small-squad battle royale game for example, where discussions are private within teams, as they are using radio communication, except when a player is close enough to be overheard by an opponent, as would happen in real life. Busted!
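
The game-side rule behind such a scenario could look like the sketch below: teammates always hear each other over the "radio", while opponents only hear a speaker who is within earshot. This is hypothetical gameplay logic, not part of GME or Wwise; the `Player` type, `can_hear`, `listeners_for`, and the `earshot` radius are all invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Player:
    name: str
    team: str
    x: float
    y: float


def can_hear(listener, speaker, earshot=8.0):
    """Decide whether `listener` should receive `speaker`'s voice."""
    if listener.name == speaker.name:
        return False  # nobody receives their own voice back
    if listener.team == speaker.team:
        return True  # radio: teammates hear each other at any distance
    distance = math.hypot(listener.x - speaker.x, listener.y - speaker.y)
    return distance <= earshot  # opponents only overhear at close range


def listeners_for(speaker, players, earshot=8.0):
    """Names of every player who should hear `speaker`, in input order."""
    return [p.name for p in players if can_hear(p, speaker, earshot)]


if __name__ == "__main__":
    squad = [
        Player("ana", "red", 0.0, 0.0),
        Player("bo", "red", 100.0, 0.0),
        Player("cy", "blue", 3.0, 4.0),
        Player("di", "blue", 50.0, 50.0),
    ]
    print(listeners_for(squad[0], squad))
```

Here "bo" hears "ana" through the team radio despite the distance, and "cy", an opponent standing 5 units away, overhears her too, while "di" is too far to hear anything.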

Setting up GME in Your Project

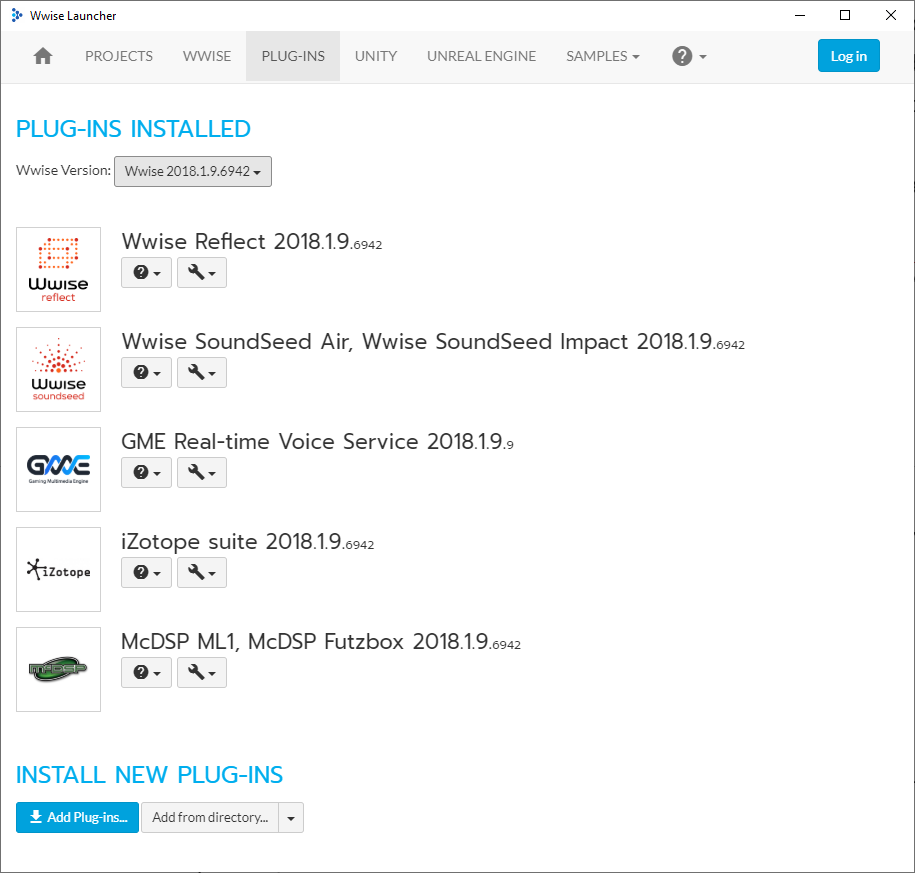

You can easily set up GME in an existing project running on Wwise 2018.1 or 2019.1 by following these steps:

1. First, if it is not already installed, you must install the GME plug-in on your machine from the Plug-ins tab of the Wwise Launcher application. Click the Add Plug-ins… button at the bottom of the view.

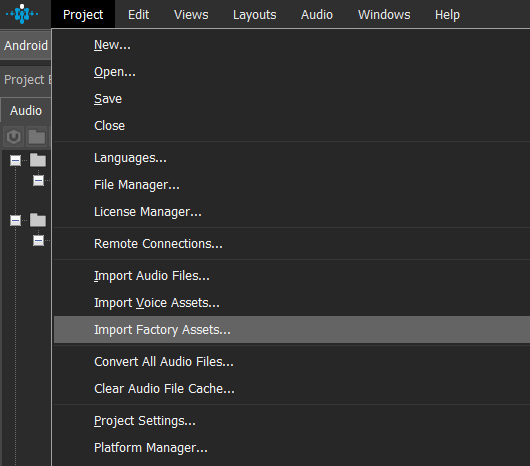

2. Open your project with the corresponding version of Wwise, select Import Factory Assets… from the Project menu, select GME, and then click OK.

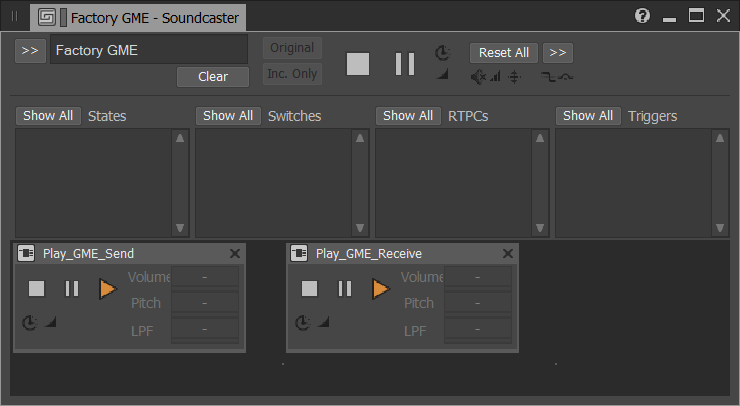

3. Importing the GME factory assets adds three work units, named Factory Tencent GME, to your project: one each in the Actor-Mixer Hierarchy, Events, and Soundcaster Sessions.

In the General Settings tab of the Master Audio Bus, replace the Audio Device (which is typically “System”) with the Tencent_GME plug-in by using the selector [>>] button.

4. Open the Soundcaster session named Tencent GME and press play on both the Play_GME_Send and Play_GME_Receive events to hear your own voice (assuming a microphone is connected to your PC or Mac).

5. To communicate with a teammate through the Wwise project, make sure that you both use the same App ID, Auth Key, and Room ID, but different User IDs.
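
The rule in step 5 is easy to get wrong when two people copy settings by hand, so here is a small sketch of a sanity check you could run on both sets of values before testing. The `SessionSettings` type and its field names are hypothetical and only mirror the four values named above; they are not part of the GME or Wwise APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionSettings:
    # Hypothetical container for the four values mentioned in step 5.
    app_id: str
    auth_key: str
    room_id: str
    user_id: str


def connection_problems(mine, theirs):
    """Return human-readable reasons why two players would not hear each other."""
    problems = []
    if mine.app_id != theirs.app_id:
        problems.append("App ID must match")
    if mine.auth_key != theirs.auth_key:
        problems.append("Auth Key must match")
    if mine.room_id != theirs.room_id:
        problems.append("Room ID must match")
    if mine.user_id == theirs.user_id:
        problems.append("User ID must differ")
    return problems


if __name__ == "__main__":
    # Placeholder values, not real credentials.
    me = SessionSettings("app-1", "key-1", "room-42", "user-a")
    teammate = SessionSettings("app-1", "key-1", "room-42", "user-a")
    print(connection_problems(me, teammate))
```

An empty list means the two configurations are compatible; anything else names the setting to fix.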


These few steps simply give you a quick “out of the box” experience and get the system set up on your machine. Integrating it into your game, especially if you go with a creative integration of voice chat into the gameplay, will require a few additional steps in the Wwise project and the game code.

Look at GME’s version of the Integration Demo to get an idea of how to set it up with your game engine and to discover other neat features. If you’re developing with UE4 or Unity, there is also an integration available, which should greatly speed up the evaluation and adoption of the service.

Wwise Tour Seattle 2019 - GME Presentation

Future Work

The very first iteration of GME integration in Wwise targets voice chat. That said, GME also supports “voice message” (a “push to talk” feature for sending voice messages) and “voice-to-text conversion” (which returns the text of the voice message) in its SDK through a different API. The next iteration of the GME plug-in for Wwise should include these features.

Closing Remark

GME voice chat offers a reliable, scalable, and fully-managed service. Its integration into Wwise alleviates the technical complexity and makes the integration of voice chat into your game quick and easy. Its flexibility opens up creative audio and gameplay ideas.

Games are becoming social platforms, and, in this context, it’s imperative for developers to incorporate in-game voice chat as a standard service in their game. In the fiercely competitive landscape of today's market, one needs to stand out to survive.

Comments

Lee Banyard

January 08, 2020 at 04:31 pm

Great to see this supported out of the Wwise box.