Inception

When Mohawk Games first approached AudioTank to create and implement the sound design for their new 4X strategy game Old World, the creative team had a specific request: produce a realistic sonic representation of world sounds driven by the camera’s distance from the world map. The request seemed simple enough, but that simplicity belied its true complexity.

Upon adding initial audio for map resources, unit movement, cityscapes, and other world sounds, it quickly became clear that no amount of tweaking 3D positioning data, low-pass filtering, or convolution was going to cut it for Old World’s unique take on nation and dynasty building. Our sound design needed to be as convincing and detailed as the historical realism of the game: we needed the sound of distance.

After initial thought experiments, we realized that simply recording sounds from a distance was not going to work. No amount of noise reduction would salvage a recording made with the preamps dimed just to capture footsteps in grass from 30 feet away. If we couldn’t amplify adequately at the preamp stage without revealing the noise floor, we would need to amplify at the source instead. We therefore began preparing for an outdoor re-miking session, during which we would play back the original source material and re-record it at multiple distances.

Though we used this session structure to infuse the sound of distance into many different aspects of the game, we will focus on the movement sounds of player-controlled units such as scouts, warriors, and workers to illustrate the process.

Preparation

Before we could go traipsing out into the forest, the session required some pre-production to prepare our source content for playback in a remote location. Unfortunately, we would likely not have AC power on site, leaving us with only battery-powered devices. As a result, we turned to what was readily available: a cell phone, a battery-powered speaker, and our trusty Zoom F8n.

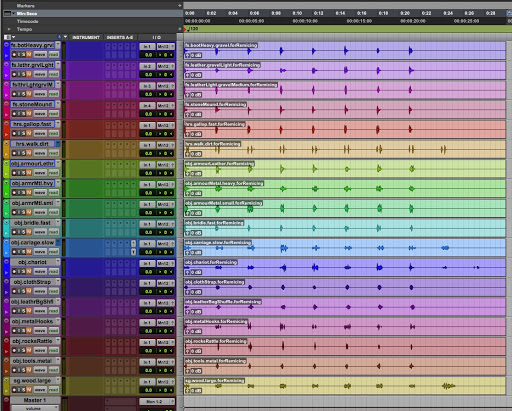

To make the session as seamless as possible, we consolidated all of the variations of each movement element (footstep on gravel, leather bag shuffle, metal armour rattle, tool clatter, etc.) into single audio files. For example, one audio file contained 7 different variations of footstep on gravel and another contained 8 different variations of leather straps under stress.

This would allow us to play each audio file on repeat in order to capture numerous takes for each movement element. This preparation would make it easier for us to capture enough content to account for random background noise such as excessive wind or birds.
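The consolidation step can be sketched in a few lines of Python. This is only an illustration, not the tooling used for the session: the function names, the 48 kHz mono 16-bit format, and the one-second gap between variations are all assumptions.

```python
import io
import wave


def consolidate(takes, sample_rate=48000, gap_seconds=1.0):
    """Join mono 16-bit PCM takes into one buffer, separated by silence.

    The silence gap (an assumed value) keeps variations easy to tell
    apart when the consolidated file is looped in the field.
    """
    # One 16-bit sample of silence is two zero bytes.
    gap = b"\x00\x00" * int(sample_rate * gap_seconds)
    return gap.join(takes)


def write_wav(target, pcm, sample_rate=48000):
    """Write mono 16-bit PCM bytes to a path or a binary file object."""
    with wave.open(target, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sample_rate)
        wav.writeframes(pcm)


if __name__ == "__main__":
    # Stand-in data: two short bursts instead of real footstep recordings.
    takes = [b"\x10\x00" * 4800, b"\x20\x00" * 9600]
    buffer = io.BytesIO()
    write_wav(buffer, consolidate(takes))
    print(len(buffer.getvalue()), "bytes written")
```

Writing to an in-memory buffer keeps the sketch free of side effects; passing a file path to `write_wav` would produce a file that can be copied to the playback device.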

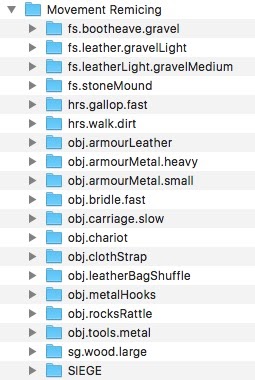

We then created folders in the F8n file structure labeled for each element we were planning to record. This would keep all of the recorded content organized by element, aiding in a smooth post-production process.

Armed with our Zoom F8n, Rode NT3, Sony C-76, and Sennheiser Ambeo, all that was left was to wait for a nice day.

Creation

The most critical part of the session was finding a good location. Fortunately, our network led us to a quiet farmhouse tucked away in northern Maryland that was far enough from modern soundscapes to capture the ancient distance we were listening for. We set up outside of a satellite building with a wood exterior, using the Rode NT3 as the mid-distance mic and the Sony C-76 as the far-distance mic.

After some trial and error we discovered that simply pointing the speaker towards the microphones produced an unnatural result that was colored by the sound of the speaker. To remedy this, we decided to point the speaker (and the microphones) towards the building, allowing us to capture the reflection rather than the directly reproduced sound. This method helped place the sound in the environment, producing the sound of distance that we were hoping to capture.

Processing

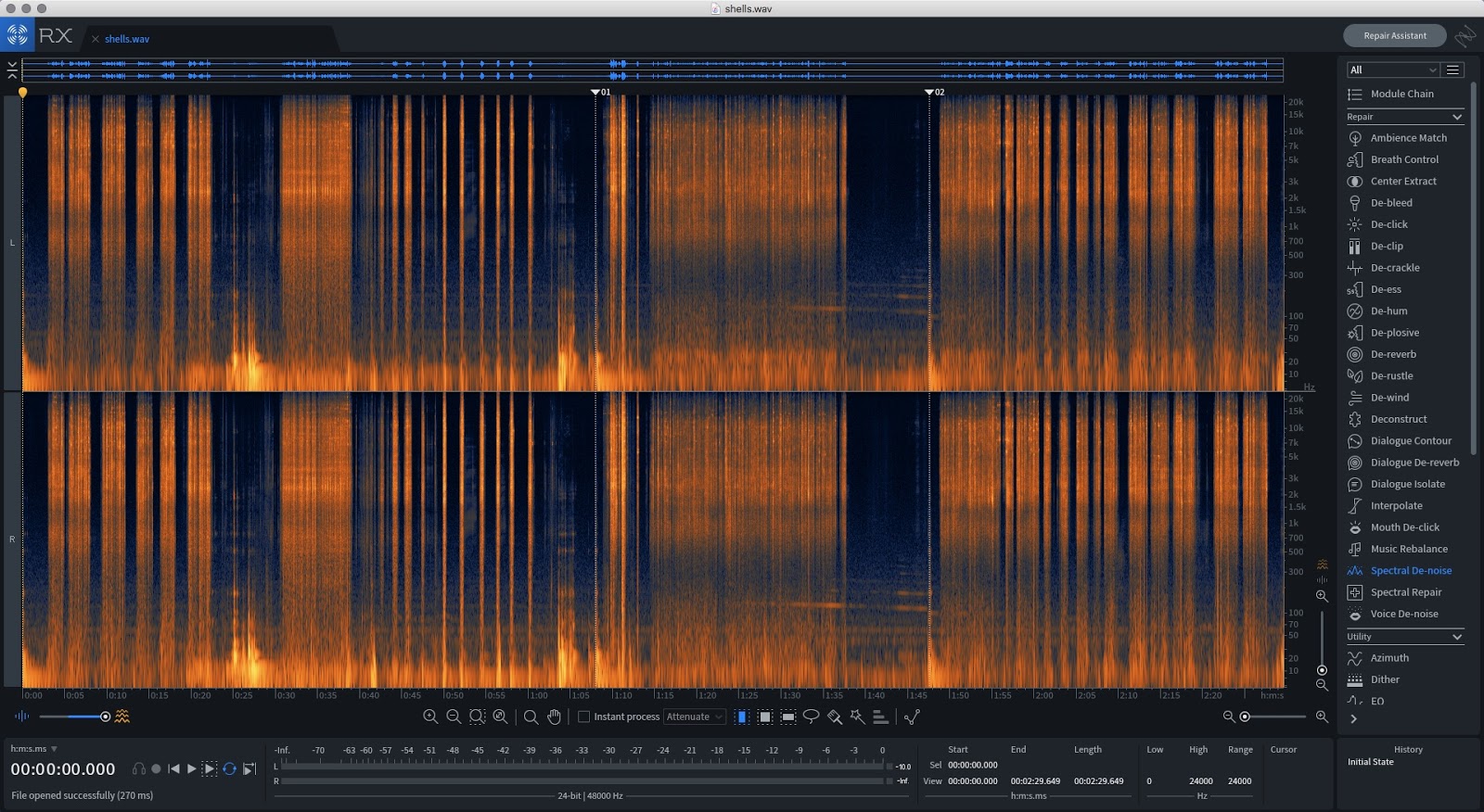

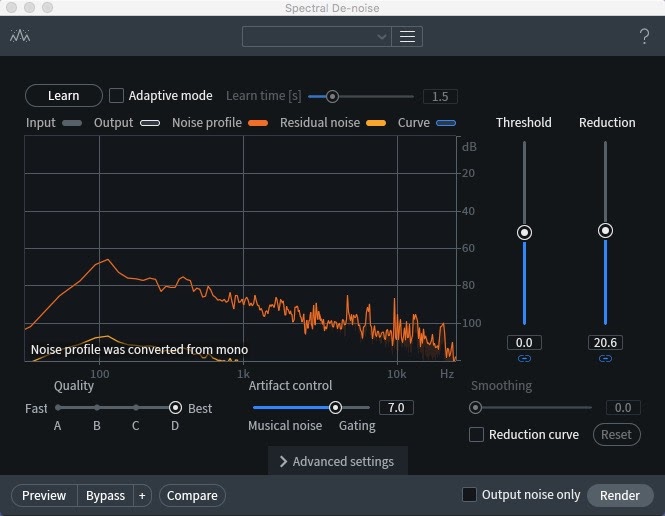

The post-production process was the most involved. After importing the recorded content, we used iZotope RX 7 Advanced to pull out unnecessary sonic information, leaving the ideal amount of distance.

We made good use of iZotope’s Spectral De-Noise plugin, a tool that analyzes the recorded content’s frequency spectrum in order to pull out unwanted background noise. During recording we made sure to capture at least 10 to 15 seconds of “nature tone” (or room tone) that we could use to teach the tool what background noise we wanted to remove. This gave us more control over the resulting content.

We then split these processed files into their respective variations for each element and each microphone (mid and far), labeling them accordingly for later import into Wwise.
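The article does not say how the split was performed, and it may well have been done by hand in an editor. As a rough sketch of how such a split could be automated, the Python below finds the loud regions in a list of sample values and builds a label for each one. The threshold, the minimum gap, and the naming scheme are hypothetical.

```python
def split_on_silence(samples, threshold=500, min_gap=4):
    """Return (start, end) index pairs for each loud region.

    A new region starts once at least `min_gap` consecutive quiet
    samples separate two loud samples. `end` is exclusive.
    """
    regions = []
    start = None      # first index of the region being built
    last_loud = None  # index of the most recent loud sample
    for i, sample in enumerate(samples):
        if abs(sample) > threshold:
            if start is None:
                start = i
            elif i - last_loud > min_gap:
                regions.append((start, last_loud + 1))
                start = i
            last_loud = i
    if start is not None:
        regions.append((start, last_loud + 1))
    return regions


def label(element, mic, index):
    """Build a file name such as 'footstep_gravel_mid_01.wav' (assumed scheme)."""
    return f"{element}_{mic}_{index:02d}.wav"


if __name__ == "__main__":
    samples = [0, 0, 900, 800, 0, 0, 0, 0, 0, 700, 0, 600, 0]
    for n, (start, end) in enumerate(split_on_silence(samples), start=1):
        print(label("footstep_gravel", "mid", n), samples[start:end])
```

In practice the threshold and gap would be expressed in decibels and milliseconds rather than raw sample values and sample counts.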

Implementation

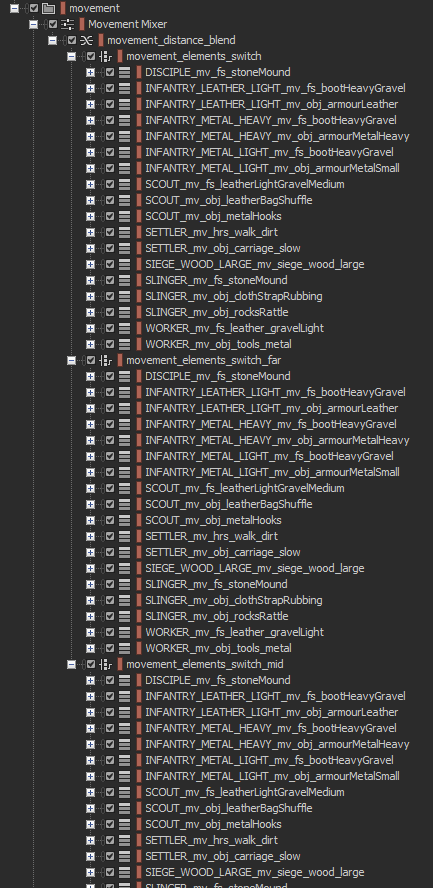

Once the files were ready, we pulled them into Wwise and set up the following structure in the Actor-Mixer hierarchy.

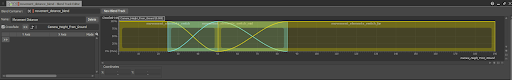

At the top of the movement hierarchy is a blend container called “movement_distance_blend”. Underneath are three switch containers, each holding the audio for one of the represented distances (close, mid, far). These switch containers are managed by the “MovementCategory” switch group that gets set to the particular unit type being moved by the player. The blend container fades between these switch containers based on the blend track (shown below) being controlled by the “Camera_Height_From_Ground” RTPC. As a result, the sound of distance gradually fades in as the camera zooms out and vice versa.
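To make the crossfade behaviour concrete, here is a minimal Python sketch of how one camera-height value could map to a gain for each distance layer. It does not use the Wwise API. The 0 to 100 range, the breakpoints, and the linear fade shape are assumptions chosen for illustration; in the project these are defined by the blend track.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """One distance layer with a trapezoidal gain curve over camera height."""
    name: str
    fade_in_start: float
    full_start: float
    full_end: float
    fade_out_end: float

    def gain(self, height):
        if self.full_start <= height <= self.full_end:
            return 1.0
        if self.fade_in_start < height < self.full_start:
            return (height - self.fade_in_start) / (self.full_start - self.fade_in_start)
        if self.full_end < height < self.fade_out_end:
            return (self.fade_out_end - height) / (self.fade_out_end - self.full_end)
        return 0.0


# Hypothetical breakpoints; neighbouring layers overlap so they crossfade.
LAYERS = [
    Layer("close", 0, 0, 20, 40),
    Layer("mid", 20, 40, 60, 80),
    Layer("far", 60, 80, 100, 100),
]


def blend_gains(height):
    """Return the gain of every layer for a camera height clamped to 0..100."""
    height = max(0.0, min(100.0, height))
    return {layer.name: layer.gain(height) for layer in LAYERS}


if __name__ == "__main__":
    for h in (0, 30, 50, 70, 100):
        print(h, blend_gains(h))
```

With these breakpoints a height of 30 sits halfway through the close-to-mid crossfade, so both layers play at half gain while the far layer stays silent.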

Lastly, there was one final consideration that needed to be made for all of this to work smoothly. As you can see from the image of the movement hierarchy, each switch container houses a series of sequence containers. Defining a fixed playback sequence ensured that every switch container played the same variation of a movement element at the same time. If footstep_01 was playing in the close-distance switch container, we needed to make sure that footstep_01 was also playing in the mid-distance and far-distance switch containers. This allowed seamless transitions between distances as the camera zoomed in and out. Below are videos demonstrating the movement sounds in action. (Note that the audio is not yet synced to the animations.)
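The idea behind the fixed playback sequence can be sketched as follows: one shared step counter selects the variation, and every distance layer plays its own recording of that same variation. This is a plain Python illustration rather than Wwise code, and the variation names and suffix scheme are hypothetical.

```python
from itertools import count, islice

VARIATIONS = ["footstep_01", "footstep_02", "footstep_03"]
DISTANCES = ["close", "mid", "far"]


def synced_steps(variations, distances):
    """Yield, for each step, the same variation for every distance layer."""
    for step in count():
        # A single index drives all layers, so they can never drift apart.
        name = variations[step % len(variations)]
        yield {distance: f"{name}_{distance}" for distance in distances}


if __name__ == "__main__":
    for step in islice(synced_steps(VARIATIONS, DISTANCES), 4):
        print(step)
```

Had each layer picked its variation at random, a zoom mid-step would crossfade between two different footsteps, which is exactly the artifact the shared sequence avoids.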

Video Example Links

Axeman Movement:

Warrior Movement:

Worker Movement:

Settler Movement:
