Planet Coaster is about building and managing the world’s greatest coaster parks and sharing your creativity with the world. It is Frontier’s most ambitious, technically advanced simulation game to date. Planet Coaster’s community has taken the game and run with it, sharing over 100,000 creations on the game’s Steam Workshop, and the game has seen multiple updates since its launch with further updates on the way.

At the centre of Planet Coaster are our park guests. They explore user-created parks in tremendous numbers and express their feelings and moods with beautifully realised animation, giving players an at-a-glance gauge of their park’s success. Not only are they the audio and visual soul of the park, they form the lifeblood of our one-to-one simulation of the coaster park experience. Without those guests, nobody is riding the rides, paying for hamburgers, visiting the bathrooms, or filling the park with life. Each one has money in their pocket, opinions and preferences, and the ability to judge your scenery and rides based on their own tastes.

In Planet Coaster, just like in the real world, a coaster park is about the human experience. Even over the roar of the rides and the pop of the fireworks, we hear when guests are exhilarated, scared, and overjoyed. Capturing the real sound of park guests in a coaster park has reshaped Frontier’s approach to audio implementation, and over three blog posts we would like to walk you through it.

Introduction

When we began writing a blog post detailing the audio in Planet Coaster for Audiokinetic, we decided to concentrate on our crowd Soundbox system. Even with such a specific focus, the post grew into a 4,000-word article! We’re very grateful to Audiokinetic for letting us split our in-depth explainer into multiple parts, and we hope you find it useful for your present and future projects.

- Part 1. Scaling Ambition

- Part 2. The Crowd Soundbox System

- Part 3. Additional Layers

PLANET COASTER - CROWD AUDIO : PART 1

Scaling Ambitions

Planet Coaster is a true ‘Triple A’ simulation game, built on Frontier’s own COBRA game development technology. One of Planet Coaster’s highlights was always going to be its lively and lifelike crowds. Frontier’s animation team planned intricate, detailed reactions for the guests who make up those huge crowds, all while hitting the Frontier benchmark for quality. We wanted Planet Coaster’s crowds to sound as lively as they looked, so naturally the audio team’s first instinct was to sync up to all those wonderful visuals and match the benchmark set by the rest of the team.

However, the sheer number of guests to support and the need to manage sounds efficiently ruled out both an animation-frame-triggered solution and a one-to-one relationship between guests and emitters.

Lead Programmer Andrew Chappell put this quite frankly during pre-production: “The way you are used to working in audio at the moment… that won’t be possible with the crowds we’re planning for Planet Coaster.”

We took it as an opportunity to rethink the audio systems we had become so familiar with on our previous games and create something more forward-thinking with regard to emitter placement, making sure Wwise only does work that is audible to the player.

Planet Coaster required a different approach to audio indeed!

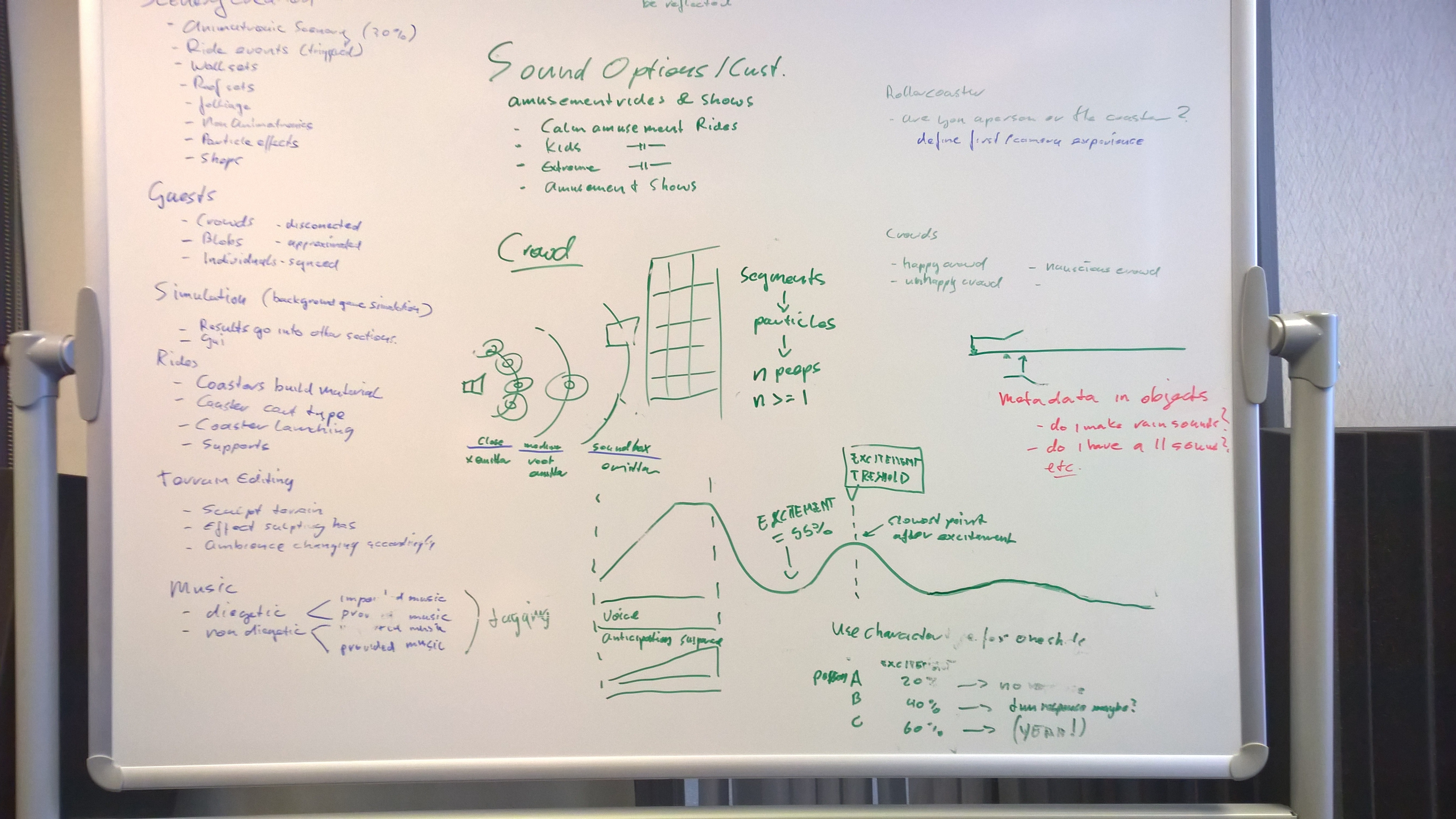

Pre-production whiteboard. Frontier (audio) projects usually begin life on a whiteboard. Listed are project needs, practical examples and brainstorming sessions from which a set of guidelines was derived.

During audio pre-production, we talked a lot about the game and the problem of dealing with large numbers of objects requiring sound. We talked about what we would want to hear in different situations, but perhaps most importantly we talked to the other departments and listened carefully.

From our findings we abstracted our own guidelines to help us formulate what the soundscape of a theme park needs to do:

- The soundscape (music, audio) had to be informed by the park build (in support of the user’s creativity).

- The soundscape had to be dynamic, adaptive, and interactive.

- The soundscape had to be diegetic unless a situation arises where the first two rules cannot be applied.

- And any implementation we did in code or in Wwise needed to be able to scale.

These guidelines influenced our approach with all the systems we worked on. On coasters for instance, using just a simple ‘coaster loop’ would not adequately cover the ‘dynamic, adaptive and interactive’ guideline for the wide variety of coasters users can create. ‘Dynamic, adaptive and interactive’ also guided us in recognizing the relationship between a sound source and the environment a user has crafted around that source.

The same rules applied to crowds, and our guidelines demanded that we acknowledge:

- Where our park guests are

- How many park guests there are

- What our park guests are currently doing
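As a rough illustration of tracking those three facts, guests could be bucketed into coarse grid cells, with each cell recording how many guests it holds and what most of them are doing. This is a hypothetical Python sketch, not Frontier’s implementation: `Guest`, `summarise_crowd`, the activity names, and the 20-metre cell size are all assumptions made for the example.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass

CELL_SIZE = 20.0  # metres per grid cell (assumed value for illustration)


@dataclass
class Guest:
    x: float
    z: float
    activity: str  # hypothetical labels, e.g. "walking", "queuing", "screaming"


def summarise_crowd(guests, cell_size=CELL_SIZE):
    """Group guests by grid cell; report head count and most common activity."""
    cells = defaultdict(Counter)
    for guest in guests:
        # Where: map the guest's ground position to a coarse cell index.
        key = (int(guest.x // cell_size), int(guest.z // cell_size))
        # What: tally the activity inside that cell.
        cells[key][guest.activity] += 1
    return {
        key: {
            "count": sum(tally.values()),              # how many
            "dominant": tally.most_common(1)[0][0],    # what most are doing
        }
        for key, tally in cells.items()
    }
```

A summary like this costs one pass over the guests and its size depends on the number of occupied cells rather than the number of guests, which is one way to keep the audio side scalable.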

For crowds, there would be no ‘magic bullet’ solution, as no single system could resolve every situation we could imagine to our satisfaction. To make matters more complex, our solutions would always have to cope with quick camera movements as the player sweeps across colossal parks.

Managing a large number of potential audio sources, varying densities of user generated content and the need to add and remove detail quickly on camera movement, all the while keeping the mix clean, dynamic, and satisfying? This was not to be an easy or dull project for our audio coders and designers!

From far away to close-up. Scaling audio to meet a wide variety of situations. Top Left: An empty sandbox. Top Right: Complex theme park with many rides close together. Bottom Left: Close-up detail. Bottom Right: Sweeping vista

Taking camera and performance into account, we began thinking of ‘stages’ for the audio. Like in a play, the lead actors are the most important characters and they would need to be in sync and clearly audible. The lead actors could be grounded by the rest of the cast and stage around them, which wouldn’t require the same level of sync or detail.

This thinking led to an ordering of audio into dynamic foreground and background layers, where foreground sounds represent synced detail and background layers create a ‘virtual soundscape’ that doesn’t need close animation syncing but is still informed by what the current park contains. When the project is set up (in code and in Wwise) to support this thinking around the camera, the foreground and background stage can dynamically change.

Planet Coaster’s crowds are central to all these challenges. They can be dispersed or closely packed. They can be far away, or a few meters from the camera. Their screams can even reach from beyond the screen frustum. Since our goal was a soundscape that is dynamically generated by a player’s handmade park (recognizing and respecting the densities and activities of placed rides), real-time information would always have to play a role in the audio that represents them.

In the end, Planet Coaster’s crowd audio would combine two solutions to handle quick camera transitions, the scaling number of park guests, their distribution, and their current behaviors (their moods):

- The Crowd Soundbox System creates a data-informed layer which roughly describes the density, location, and mood of the entire crowd in a ‘virtual soundscape’. In the ‘stage’ example, it is our background layer, which dynamically scales to the camera position and is managed by a fixed number of emitters.

- The Close-Up Sounds System creates individually-synced emitters for foreground guests, and activates only when the camera is near enough to make out such detail.
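To make the foreground half of that split more concrete, here is a minimal Python sketch of how close-up guests might be chosen. It is an assumption-laden illustration rather than the game’s actual logic: `select_close_up_guests`, the 15-metre radius, and the budget of eight emitters are hypothetical. Only guests near the camera receive an individually synced emitter, and a budget caps how many can be active at once; the background layer is assumed to keep representing the whole crowd regardless.

```python
import math
from dataclasses import dataclass

CLOSE_UP_RADIUS = 15.0      # metres; assumed threshold for audible detail
MAX_CLOSE_UP_EMITTERS = 8   # assumed cap on individually synced emitters


@dataclass
class Guest:
    guest_id: int
    x: float
    y: float
    z: float


def select_close_up_guests(guests, camera_pos,
                           radius=CLOSE_UP_RADIUS,
                           budget=MAX_CLOSE_UP_EMITTERS):
    """Return the nearest guests within `radius` of the camera, up to `budget`.

    An empty list means the camera is too far away for close-up detail,
    so only the background layer would be heard.
    """
    def distance(guest):
        return math.dist((guest.x, guest.y, guest.z), camera_pos)

    nearby = [g for g in guests if distance(g) <= radius]
    nearby.sort(key=distance)  # closest guests get emitters first
    return nearby[:budget]
```

Because the selection depends only on the current camera position, re-running it after a fast camera sweep naturally drops the old foreground guests and picks up new ones.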

In the next blog post we’ll go in-depth with the system that powers the crowd: the Soundbox.
