Little Orpheus is a side-scrolling adventure game about one comrade’s journey to the centre of the Earth from The Chinese Room, the BAFTA-winning team behind Everybody’s Gone to the Rapture and Dear Esther.

The year is 1962. NASA is trying to put a man on the moon; but in a remote corner of Siberia, a Soviet cosmonaut is heading in the other direction. Ivan Ivanovich is dropped into an extinct volcano in his exploration capsule, Little Orpheus, to explore the centre of the earth. Ivan vanishes and emerges three years later, claiming to have saved the world. Little Orpheus casts players as Ivan as he recounts an adventure beyond belief: a tale of lost civilizations, undersea kingdoms, and prehistoric jungles deep below the Earth’s crust.

Brought to you in glorious technicolour with visuals inspired by a bygone era of adventure, Little Orpheus is a serialised adventure inspired by classic movies like Flash Gordon, Sinbad and The Land That Time Forgot.

Hi, I’m Jim Fowler, co-composer with Jessica Curry of the score to Little Orpheus. This was a really fun project to be a part of – colourful, funny, optimistic, and it called for music to support that.

Before the Music

Early on in development, Jess and I sat down with Dan Pinchbeck (Creative Director at The Chinese Room), who ran through Little Orpheus’ story and talked about plans for the game. The aim of this meeting was to begin thinking about the music; what it might be trying to achieve and how it might function - creatively and technically. These early get-togethers on a project are always exciting; there’s a feeling of discovery as you begin to get a sense of what the music will be.

With Little Orpheus, we would be taking care of implementation ourselves, which meant we could plan the music system and composition at the same time. For me, understanding how the music will play back in-game is important, because I feel that the music’s final performance happens when the game is being played. Taking this idea into account at the composition stage informs the music in the same way that storytelling, emotional intent and ensemble do.

Talking with Dan about the game’s 1960s adventure serial inspirations and seeing the vibrant concept art, it was clear to us that there would be moments where the music would closely tie into the player’s actions. We could even already see moments where the music would ‘Mickey Mouse’ Ivan’s movements as if the game were a cartoon.

It was important to know this early on, since Little Orpheus was to be a mobile game, meaning that we had to take any technical limitations into account. We couldn’t go gung-ho about the music system - with layers upon layers and overlapping transitions - since that wouldn’t be achievable. There was plenty of potential for interactivity, though; and knowing what was needed to achieve that potential was one of the informing factors of the composition process.

Cartoonifying the Music

In that first meeting with Dan, we saw an early version of the game’s first episode, which includes a sequence where Ivan hides from a T-Rex by sneaking along in a giant egg shell. As Ivan, you take a few steps forward and then crouch down inside the egg whenever the dinosaur looks your way. It immediately felt like an opportunity to include some cartoon-style music that synced to Ivan’s footsteps. You could almost hear the pizzicato strings as he crept along.

Compositionally, we arranged one of the themes as a pizzicato strings piece, with the notes and chords moving around the orchestra; so that when played in its entirety, it was a satisfying and interesting listen. We first recorded the piece ‘properly’ so that we had a fully-played version and so that the orchestra understood the piece, and then we recorded it note-by-note. This latter version gave us nice clean ‘footsteps’, with neat articulations and tails for each, rather than having to edit a full performance and trying to neaten it up with reverbs and the like. Now we essentially had the entire piece as individual samples that could be played back by the user through the medium of Ivan’s sneaking.

The key thing here was for the music to feel like it was ‘Mickey Mousing’, as if it were a cartoon score, rather than just pizz. footsteps. To do this, the melody needed to play through in the correct order, and so each footstep note was put into a Playlist Container and set up to play as a sequence step. A nice and simple setup, except… what if the player walks backwards? The melody would continue to play for each footstep and we’d end up running out of notes before Ivan reaches safety!

What we needed was a system where a footstep note would play only if Ivan is walking towards the goal. Relatively straightforward, but we also wanted the melody to play out properly; ideally the footsteps wouldn’t repeat, so if the player walked away from the goal, they’d hear nothing. And if they continued walking in the correct direction, they wouldn’t hear a footstep note until they caught up to where they’d been. This helps give a sense of the tune playing through and also acts as a little gameplay reinforcement, telling the player that they’re going in the right direction and making progress.

Making separate events and sounds for each specific footstep and tagging them in-game would have been a lot of manual work, error-prone, hard to debug, and perhaps not even feasible without building a whole new system. Hooray, then, for programmers. Fourie Du Preeze implemented a system that kept track of how many notes had been played; each time a footstep was called, it went directly to the correct playlist member (if appropriate). The result is a memorable sequence early on in the game that really draws the player in. They feel an extra connection to Ivan and instantly recognise what this world is – we all know cartoons where music plays in time with sneaking, and that association was now brought into the world of Little Orpheus.
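As a rough illustration of the footstep logic described above (this is not the code used in the game), here is a minimal Python sketch. The class name, the `distance_to_goal` parameter and the note names are all hypothetical; it assumes the game can report Ivan's distance from the goal on every footstep.

```python
class FootstepMelody:
    """Plays each melody note once, and only when Ivan makes new progress."""

    def __init__(self, notes):
        self.notes = list(notes)      # the melody, one sample per footstep
        self.next_index = 0           # how many notes have been played so far
        self.closest = float("inf")   # nearest Ivan has ever been to the goal

    def on_footstep(self, distance_to_goal):
        """Return the note to play for this footstep, or None for silence."""
        # Walking away, or retracing old ground, produces no note.
        if distance_to_goal >= self.closest:
            return None
        self.closest = distance_to_goal
        # Stay silent rather than wrap around if the melody has finished.
        if self.next_index >= len(self.notes):
            return None
        note = self.notes[self.next_index]
        self.next_index += 1
        return note
```

With a three-note melody, footsteps at distances 9, 8, 9, 8 and 7 would yield the first note, the second note, silence, silence, and then the third note, so the tune only moves forward when the player does.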

Music Influences

The music of Little Orpheus is informed by Russian music of the early twentieth century, especially the film music of Prokofiev and Shostakovich in his Tahiti Trot and Jazz Waltz mode (rather than his symphonic works). It’s also influenced by Hollywood musicals like Pinocchio and The Wizard of Oz, and the kind of music used in Saturday morning adventure serials like Land of the Giants.

We made decisions early on about what the ensemble would be. We wanted a recognisable Little Orpheus sound, something with a hint of Russian, but not exactly Red Army Choir bombast. Something a little quirky that could have been a small TV orchestra. What we decided on was a small string section (6 violins, 3 violas, 2 celli and a bass), harp and a number of wind instruments: cornet, horn, flute, oboe, clarinet, bassoon, and tenor sax.

The idea that evolved as we were writing was that the winds weren’t taking the role of supporting and colouring the strings, as they might in a larger ensemble; but rather, they were like a wind band, or even a big band in terms of arrangement. All the winds had their own role in the music, interweaving with each other, with the strings acting as a kind of glue that connects everything together like mortar in a stone wall.

Taking Trade-offs Into Consideration

As the music began to come together and themes started to emerge, we realised that the music needed a certain amount of freedom in tempo. There were moments that had to include rallentandos to sound correct, and others that needed accelerandos (e.g., the snowball chase). The music just felt too rigid and forced without them. This was early on in the process and something that we took very seriously because Wwise doesn’t allow for music that changes tempo within a piece like that. Of course, you can cut the music up and have it as separate elements in a playlist, but only for più mosso-type moments. We knew that having these variable tempos would add extra work at the implementation stage. For sections of music that need to transition at the end to something else, we’d need to manually place the end marker; and for music that could transition at any point, we’d have to place custom cues in place of bar lines. While this isn’t an enormous amount of extra work, it’s important to take the extra time required into consideration. We discussed whether the win in having less rigid music was worth the trade-off in ease of implementation. We felt that it was and, because we’d considered this early on, we were able to account for the extra time when scheduling and planning the implementation work.
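To give a sense of what placing cues "in place of bar lines" involves, here is a small Python sketch that works out where each bar line falls in seconds when the tempo changes from bar to bar. It is only an illustration: the function name and the example tempos are made up, and it assumes the tempo is constant within each bar, which is a stepwise approximation of a real rallentando.

```python
def bar_cue_times(bars):
    """Return the start time in seconds of every bar, plus the end time.

    bars is a list of (beats_in_bar, tempo_bpm) pairs, one per bar.
    The final value is where an end marker would sit.
    """
    times = [0.0]
    for beats, bpm in bars:
        if bpm <= 0:
            raise ValueError("tempo must be positive")
        # One beat lasts 60 / bpm seconds.
        times.append(times[-1] + beats * 60.0 / bpm)
    return times


# Two bars at 120 bpm, then slowing to 96 and 80 bpm (hypothetical values).
cues = bar_cue_times([(4, 120), (4, 120), (4, 96), (4, 80)])
```

In practice the positions would be found by ear or from the recorded session, but the arithmetic shows why a fixed grid no longer lines up once the tempo moves.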

Additionally, we were able to manage the music in such a way that these instances were limited. For a section that seemed to need a variable intro followed by a loop, we could split that out during composition and recording to create an intro with tempo changes (which may be up to two minutes), followed by a strict tempo loop.

By being aware of how the music will be implemented and what the tools can achieve early on in the composition process, we could make decisions to mitigate problems further down the line. The tools should never dictate what you want to achieve creatively, but thinking about whether what you’re trying to do is worth any extra work is a valuable part of self-editing.

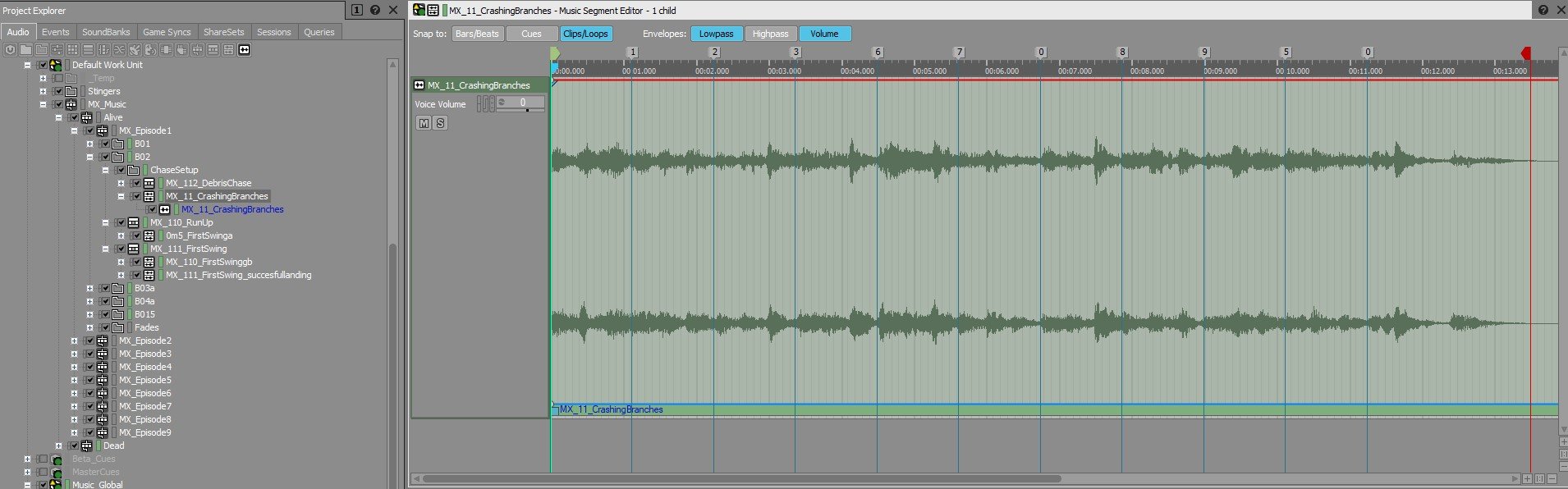

Rocket Debris Stingers

Another area that required some assistance from Fourie involved pieces of rocket debris raining down on Ivan as he ran through the branches of a giant tree. We wanted each debris hit to have a stinger chord to emphasise it and to tie the score to the image. The problem here was that the player could wait partway through the sequence to catch their breath or look around, meaning we could never know where in the exciting chase loop the debris stings would occur. Stylistically, the music needed to move around harmonically (keeping it on a single chord for the duration jumped out as ‘wrong’ compared to the other music in the game). The solution was to have a sting for each chord of the loop. The loop was tagged up in Wwise using custom cues with numbered names corresponding to the chord sequence (1 = Eb, 2 = Cm7b5, 3 = Fdim, etc.). Fourie then set up a callback on the game side that would get the name of the custom cue and use that to set a Debris_Chord state. This state controls a Switch Container filled with the various stinger chords; when the game calls the Debris_Hit Event, the Switch Container is played and the chord is correct for the underlying music. This is a nice seamless moment in-game and the player shouldn’t notice the interactivity at all; the music just seems ‘right’. By planning this in advance, we were able to include all the appropriate stings in the recording session, rather than having to try and create them during implementation later on.
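The flow described above (custom cue fires, state is set, debris hit picks the matching stinger) can be modelled in a few lines of Python. This is a stand-in for the idea, not Wwise or game code: the class, its methods and the stinger names such as `sting_Eb` are hypothetical, while the cue numbering and the Debris_Chord and Debris_Hit names come from the description above.

```python
class DebrisStingers:
    """Keeps the stinger chord in step with the chord of the chase loop."""

    def __init__(self, stingers_by_cue):
        # Maps a custom cue name ("1", "2", ...) to the stinger for that chord.
        self.stingers_by_cue = dict(stingers_by_cue)
        self.debris_chord = None  # plays the role of the Debris_Chord state

    def on_custom_cue(self, cue_name):
        """Callback for when playback passes a custom cue in the loop."""
        if cue_name in self.stingers_by_cue:
            self.debris_chord = cue_name

    def on_debris_hit(self):
        """Plays the role of the Debris_Hit Event: pick the matching stinger."""
        return self.stingers_by_cue.get(self.debris_chord)


stingers = DebrisStingers({"1": "sting_Eb", "2": "sting_Cm7b5", "3": "sting_Fdim"})
stingers.on_custom_cue("2")         # the loop has reached the Cm7b5 chord
chosen = stingers.on_debris_hit()   # debris lands while that chord sounds
```

Because the state only changes when a cue is passed, a player who stops to look around still gets the right chord whenever the next piece of debris lands.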

Ivan’s ‘Hero Moment’

Some more simple interactivity that helps pull players into the world is the moment when Ivan first leaps onto a vine and swings across a terrifying gap. Dan really wanted a ‘hero moment’ there, a fanfare in the Luke and Leia Star Wars mould. Something really heroic that says, ‘hooray, Ivan’s going to make it, he’s going to get through, he can use the environment’. From a composition point of view, we knew what was needed and the implementation could be very simple: Ivan successfully jumps onto vine = play vine fanfare.

Music can really reinforce that kind of ‘moment of cool’, but it often works even better if it doesn’t just appear out of nowhere: when an anxious build-up resolves into the positive fanfare, we feel an even stronger sense of accomplishment. That can be difficult to achieve in a game where we don’t know how long a player will take to do something. In this case, the player could decide to wander off back to where they came from, or hang around near the vine but not jump, or jump but change direction before grabbing the vine. What this meant was that any introductory music needed to be a loop that built tension and could be transitioned from when Ivan grabbed the vine. The problem here was that, to keep things seamless and musical, the transition would have to be on a bar line and we wouldn’t get that satisfaction of the resolution being exactly timed to the vine grab. The solution to this was to create a timpani roll loop. This begins to play once the vine is in view but, crucially, its volume is controlled by an RTPC linked to Ivan’s distance from the vine. At first you don’t hear it; as you get closer, the drum crescendos; and when the jump is successfully made, the fanfare starts immediately. This means that however slowly or quickly you approach the jump, the music will ramp up in sync with you, giving a real sense of building tension and then resolution. Plus, if you want, you can trot back and forth playing with the volume of the timpani roll! This ended up working so effectively that we used it in a few other places in the game as well.

N.B. SFX are muted to make the timpani roll obvious.
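The distance-driven timpani roll can be pictured as a simple mapping from distance to volume. In Wwise that curve is drawn in the authoring tool rather than written as code, so the Python below is only a sketch of the behaviour: the function name, the linear shape of the ramp and the default values (20 units, -96 dB to 0 dB) are assumptions chosen for illustration.

```python
def timpani_volume_db(distance, audible_distance=20.0, min_db=-96.0, max_db=0.0):
    """Map Ivan's distance from the vine to a volume in dB (linear ramp).

    At audible_distance or beyond, the roll sits at min_db (inaudible);
    at the vine itself it reaches max_db.
    """
    if audible_distance <= 0:
        raise ValueError("audible_distance must be positive")
    # 0.0 when far away, 1.0 when Ivan is at the vine.
    closeness = 1.0 - min(max(distance / audible_distance, 0.0), 1.0)
    return min_db + closeness * (max_db - min_db)
```

Since the volume depends only on where Ivan is standing, walking back and forth moves the crescendo up and down with him, which is exactly the behaviour you can play with in-game.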

Loops, Loops, Loops

One way we used interactivity in Little Orpheus is less obvious to the player but is just as important in selling the world and keeping the gameplay and music experience seamless.

In the chases that end the episodes, the music is tightly tied to the sequence of events. For example, in the snowball chase scene, the music reflects where you jump up into the air, where you’re picking up speed, and builds towards the moment Ivan arrives (with a crash) on an ice floe. As with the vine fanfare, we didn’t necessarily want the music to start at the exact same time as the chase; it was often more appropriate for the music to start with the introductory cutscene. The difficulty we faced here was that, although the music can be synced to the cutscene and played back correctly, and can be synced to the chase gameplay and played back correctly, there is some load time between the two. And because Little Orpheus was originally released as a mobile game supporting multiple devices of differing age and power, there was no way to know how long that load time would be.

Rather than have the music stop and start again (which would break the flow and take you out of the world) or let it run through and be out of sync during the chase, we designed each intro to end on a short loop. This loop can then be used to cover any length of load time, and the subsequent finale of the episode stays synced with the music. It’s like safety bars in musical theatre, where the score will have a short section of repeated bars for the orchestra to play until the performers are ready to start singing. This isn’t something that’s obvious to the player; in fact, its purpose is to hide the joins. By taking this into account at the start of composing, we were able to incorporate the load time requirements into the music and come up with a solution rather than be taken by surprise later on. This may seem like a more utilitarian problem-solving approach to interactivity, but I think it’s as important as other more ‘creative’ music implementation decisions, like the T-Rex sequence.

Ultimately, you want the player to have a seamless experience; it should feel like the music has been scored to their playthrough of the game and you don’t want them to hear the joins. If the game stops to load and so does the music, the engagement and involvement are temporarily broken because the world of the game has stopped existing. If the music continues over the load time, the world continues to exist; it may have gone briefly black but it’s still there, carrying on and waiting for us.
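The ‘safety bars’ idea boils down to waiting for the next loop boundary after loading finishes. The Python sketch below shows that arithmetic under simple assumptions: times are in seconds from the start of the intro, the loop repeats with a fixed length, and the chase music may only start on a loop boundary. The function name and all the numbers are hypothetical.

```python
import math


def chase_start_time(loop_start, loop_length, ready_time):
    """Return when the chase music starts, given when loading finished.

    loop_start  -- when the intro reaches its closing loop
    loop_length -- duration of one pass through that loop
    ready_time  -- when the chase gameplay is loaded and ready
    """
    if loop_length <= 0:
        raise ValueError("loop_length must be positive")
    if ready_time <= loop_start:
        # Loading beat the music: no safety bars needed.
        return loop_start
    # Play whole loops until the first boundary at or after ready_time.
    repeats = math.ceil((ready_time - loop_start) / loop_length)
    return loop_start + repeats * loop_length
```

For example, with the loop beginning at 30 seconds and lasting 4 seconds, a fast device that is ready at 33 seconds moves on at 34 seconds, while a slower one that is ready at 38.5 seconds moves on at 42 seconds; in both cases the music never stops.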

Opening and Closing Music

A last example of interactivity was the opening and closing of each episode. These feature a ‘last time on…’ segment at the opening and a ‘next time on…’ segment at the resolution, prior to the title and credits music, respectively. This gives the game the vibe of an old TV programme, and those segments needed to have the same music each time, just as a TV show would. The nature of games, of course, is that there isn’t really any post-production time, meaning that music needs to be prepared and ready to go at the same time as everything else. In this case, that meant we couldn’t wait until all the episodes were finished and finessed to write bespoke versions of the in and out music. These sections had scripts and the voices were recorded, and they had some animation done, but things were always being tweaked to get the right comic timing. Perhaps a little extra animation really sells the gag, but it will require a pickup dialogue line that changes the timing.

To allow for this, the ‘last time on…’/‘next time on…’ music is written with a looping middle section. This means that the intro to the music is always the same, and the end flourish leading into the titles or credits is always the same, but the middle can be a variable length to allow for variances in the length of the sequence. This kind of interactivity isn’t really for the player’s benefit at all - it’s for the rest of the development team. By doing this, the music can go in early on and everyone gets a sense of how these sequences feel. Artists, animators, writers, and designers can all make tweaks and adjustments and the music will follow along, keeping everything in sync. This can really help with iteration; a change made doesn’t cause the music to be wrong, which can colour perceptions of how the scene is working or cause the music to be turned off until it’s fixed, again changing the way people feel about the scene. There’s also the benefit of not having to keep making small tweaks to the same piece of music, saving time for composition and other areas of implementation.

Final Thoughts

Little Orpheus was originally planned to be released on mobile, which came with some technical limitations; despite this, we were able to make various interactive music decisions to help sell Ivan’s colourful and adventurous world. By taking potential pitfalls into account at the start of composition, we were able to come up with creative musical decisions about how the soundtrack would work. Ultimately, this helped the music feel seamless and become an integral part of the game experience, helping to sell the story to the player.
