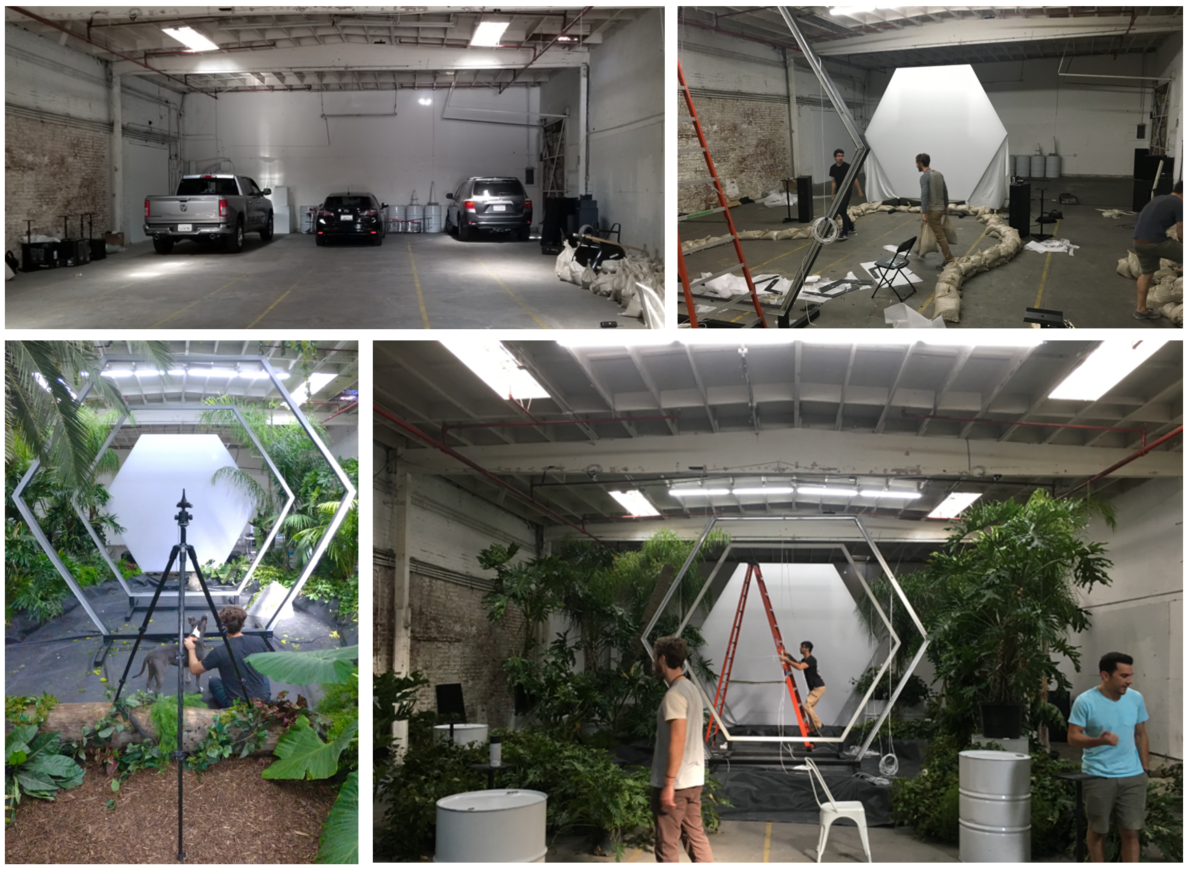

In October of last year, my team and I helped put together an amazing experience for Intel in collaboration with Master of Shapes, an LA-based tech/creative jedi and friend of ours. We’d known about the project, more or less, for about a month and a half, but the variables of the project were changing every few days. When the final form of the project was set in stone with Intel, we had about three weeks to work on sound design, composition, implementation and a build-out. On top of that, we needed to decide on a venue - so the acoustic situation was still a question mark for us too. What we did know was that we were building an indoor forest and using Dolby Atmos to create an immersive, interactive experience... and we would have one day to show it to the public.

So what exactly are we working on?

When this project was first proposed, it was slated to use motion tracking and a stereo setup. The idea was that the player would be flying on the back of a dragon and wreaking havoc on the fictional world below. We started designing dragon vocalisations at this point, but after a week or so, Master of Shapes hinted that the project would be changing. They asked if we wanted to try using Dolby Atmos, and we told them it would be the perfect opportunity. We hadn’t used Atmos before, but we knew about the Microsoft Spatial Sound Platform thanks to a demo by Kristoffer Larson at GDC 2018.

When we hit the two-week mark we were able to start designing different systems in Wwise, for example sounds related to flight speed and controller rotation. At this point we were also able to start refining this pipeline, which would come in handy during the final crunch. Another thing we found out about at this time was the final aesthetic and tone of the experience. As the player moved through the virtual world, they would be driving changes that caused them to dip between dimensions - between “grounded” and “psychedelic/ominous”. We were stoked about this and were pretty inspired by the idea.

One really fun bit of design we did involved a Seaboard, Omnisphere, and field recordings we had recently captured in the rainforests of the Pacific Northwest. In short, we loaded our recordings into the sampling engine and layered them in ways that sounded dense, but realistic. Once we had a good foundation, we started placing unique effects onto each layer. What made this approach special in our minds was our use of the Seaboard to expressively play these samples, fading between affected and dry. The result was a beautifully smooth transition between dimensions. It was this bit of sound design that became one of the pillars of the experience - the other being the musical score.

Crunch time

It’s one week before the deadline, which involves an Intel rep coming through to experience the project. What’s left to do: build the actual forest (which includes a pond), build the speaker array and hang the tops from the warehouse ceiling... plus sound design and scoring for the entire experience. It’s safe to say at this point we were totally buzzing with stress and excitement.

It ended up taking two full days to build the experience to a point where we could begin working from the player's perspective. Once we got our computers set up, we began tuning the assets we’d built up to that point to the environment and adding acoustic treatment wherever we could. At four days to delivery, we received a complete level with landscapes and some prominent landmarks. We didn’t have any assets to design sounds for yet, but at this point, we knew the area the player would move through, and the zones they would see. With this information we took to refining our ambiance loops and designing possible spot effects for objects we felt matched the aesthetic (this was super useful). Something that Wwise allowed us to do ahead of time was play around with these ambiances and how the player should experience them. We ended up settling on a pretty cool method whereby we drove the rotation of the ambiance with player rotation. It took a while to fine-tune, but it turned out surprisingly well and allowed us to avoid using a static 2D ambiance or relying solely on spot effects.
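For readers who want a feel for the idea of driving ambiance rotation from player rotation, here is a minimal Python sketch. It is not the project's actual implementation, which lived inside Wwise and the game engine. It assumes the ambiance is split into a few layers placed on a ring around the listener, and that the ring is turned by the player's yaw. The function name `ambience_positions` and the `radius` and `layers` parameters are hypothetical and only there for illustration.

```python
import math


def ambience_positions(listener_xy, yaw_degrees, radius=10.0, layers=4):
    """Return (x, y) positions for `layers` ambiance emitters on a ring
    around the listener, with the whole ring rotated by `yaw_degrees`.

    All names and default values here are illustrative assumptions.
    """
    cx, cy = listener_xy
    yaw = math.radians(yaw_degrees)
    step = 2.0 * math.pi / layers  # even angular spacing between layers
    positions = []
    for i in range(layers):
        angle = yaw + i * step
        positions.append((cx + radius * math.cos(angle),
                          cy + radius * math.sin(angle)))
    return positions


# Example: four layers around a listener at the origin, player turned 90 degrees.
ring = ambience_positions((0.0, 0.0), 90.0)
```

Each frame, the positions returned for the current yaw would be handed to whatever sets the emitter positions in the engine, so the bed turns smoothly instead of sitting as a fixed 2D loop.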

Now, we had three days left. We began to place fly-by effects throughout the course and debris impacts. For the most part, we had these effects already cued up in random containers with decent attenuations, which made it possible for us to place and forget these emitters until a later mix pass. Also, it was at this time when the rest of the dev team moved into the space and began to work alongside us at breakneck speed. At midnight that night, we’d caught up to all of their new content. Due to noise regulations, we couldn’t really mix after dark, so we decided to get one final night of rest before the final push.

When we arrived the next morning, almost all of the assets that would be in the final experience were implemented. We also decided that we wanted to include a cool intro sequence that immediately immersed the player in this incredible environment - SoundCaster was essential in making this happen in a timely manner. Using SoundCaster we were able to create discrete events that we could reorder and modify without having to destructively edit a larger file in an external editor. Most of the day was spent on refining this intro experience and it really paid off. We had caught up to the dev team and done all the mixing we could. We stuck around a bit later to help with bug smashing and then took off to get one last bit of rest before the inevitable all-nighter.

The final hours

On the final day, the whole team really came together to knock it out of the park. We were adding and placing new effects as more visual assets came in, we refined the intro sequence even more to get under sixty seconds, and we finalized event triggers throughout the map and ensured everything in Wwise matched up correctly. It was also this day that I discovered how incredibly useful the Wwise envelopes, LFOs and tone generators were for a project like this.

When it came to creating impactful and utterly strange effects, we were running out of time and couldn’t afford to get stuck on versioning these effects outside of Wwise. We decided to see how effective these Wwise modulation tools were, and we were pleasantly surprised by how perfectly they behaved. For example, at the end of the intro sequence we needed to create the feeling of the ground you are standing on being torn from the earth. To do this, we used Events to trigger an RTPC value sweep, which in turn controlled the frequency of a tone generator and the rate and depth of an LFO tied to amplitude. As the tone got lower, the LFO increased in depth and slowed down in rate until we reached a final impact thump and groan layered on some debris sounds. We thought this was an incredible experience, but it may have been because we tuned the placement of two 1000-watt subwoofers. Nevertheless, it was insane.
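To make the shape of that sweep concrete, here is a small Python sketch of how a single control value can drive all three parameters at once. In Wwise these mappings would be drawn as RTPC curves rather than written as code, and every number below (the 0 to 100 range, the 220 Hz to 30 Hz tone, the LFO rate and depth limits) is an assumption chosen for illustration, not a value from the project. The function name `sweep_targets` is hypothetical.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def sweep_targets(rtpc, rtpc_min=0.0, rtpc_max=100.0):
    """Map one control value to tone frequency, LFO depth and LFO rate.

    As the control value rises, the tone falls, the LFO gets deeper
    and the LFO slows down. All ranges are illustrative assumptions.
    """
    t = (rtpc - rtpc_min) / (rtpc_max - rtpc_min)
    t = min(1.0, max(0.0, t))  # clamp to the valid range

    start_hz, end_hz = 220.0, 30.0
    # Exponential glide so the pitch drop sounds even to the ear.
    frequency_hz = start_hz * (end_hz / start_hz) ** t

    return {
        "frequency_hz": frequency_hz,
        "lfo_depth": lerp(0.1, 1.0, t),     # deeper amplitude modulation
        "lfo_rate_hz": lerp(12.0, 1.0, t),  # slower modulation
    }


# Example: step the control value from 0 to 100 and collect the targets.
curve = [sweep_targets(v) for v in range(0, 101, 25)]
```

The appeal of the single-control approach is that one Event-triggered sweep keeps the pitch drop and the tremolo locked together, so retiming the whole gesture means changing one duration.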

By night, we still weren’t thrilled with the mix, but when is anyone ever really “done” with a mix? We spent most of the all-nighter implementing new triggers and emitters, and at about midnight we decided to rearrange the level and shorten the experience down to five minutes from about seven to ten minutes. We realized that we needed to control the speed and direction of the player’s flight a bit more in order to get a consistent five-minute runtime and fit in the overwhelming number of people who wanted to try the experience. This change meant that our score needed to change as well. Earlier, we had created a rather sparse, relaxed score that complemented the player's ability to explore the strange environment a bit, but now we felt the need to create something that would properly contour the player's experience and build in energy as they got closer to the final encounter.

At about 7am the music was in, and everything was working besides the wireless tracker on our controller. Luckily, a bit of sweat and duct tape solved that for literally just enough time so Intel was able to enjoy their play-through. Between the client delivery time and our public exhibition, we continued to squash bugs and polish certain features, and we continued to polish it all the way up to the last play-through.

I can point to very specific factors that made this project the huge success that it was:

The Master of Shapes team, including Adam Amaral, Rob Meza and Jon LaViola - Adam and Rob are masters at the dev end of things, and Jon, our executive producer, was essential in keeping everything on track and everyone focused and relaxed.

Jordan Halsey - our resident Houdini wizard made it possible to fit so many exciting and interesting visual assets into the experience.

Jonathan Moore, our jack-of-all-trades engineer, was able to come in and ensure that as we sprinted toward the finish our game mechanics and hardware would be stable and efficient.

Then there is Wwise. If any one of the features we ended up using had not been there, it would have directly affected the amount of great material we had time to fit in. This project was unprecedented for us - a location-based interactive piece that was constantly evolving until the last day. Wwise allowed us to jump in and immediately get creative, and that probably had the biggest impact on how much people enjoyed the experience.
