VR is the new black. That is what everyone is saying. VR is certainly an interesting new technology, and the potential for the experiences we can create, I think, is still something we are all learning. I have already enjoyed some really excellent VR experiences and I think when the subject matter is appropriate, VR can be amazingly immersive and lots of fun. But I also think we need to continue to push both the technology and our creative approach to content. A project is not good just because it uses VR. It takes significant design and implementation to make the most of this new technology.

For me, VR is interesting exactly because it requires us to think in new ways and to approach design issues with a different mindset.

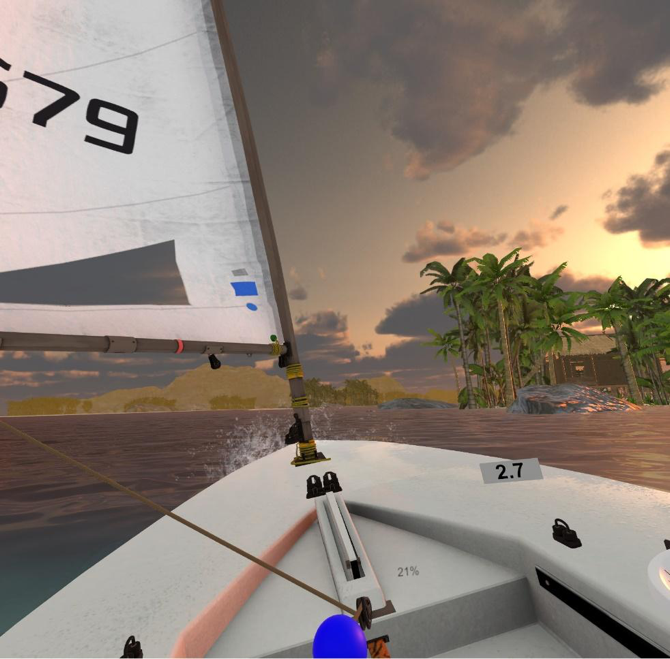

So, when I was asked to work on a small indie game called VR Regatta, I wanted to examine what was special about this project and how the audio needed to work to bring out the best in the experience.

A simple but elegant concept makes VR Regatta enjoyable and engaging

VR Regatta is a sailing game. The name pretty much says it all, but from the instant I tried it I was surprised at how engaging and enjoyable a relatively simple concept could be in VR. I started in a small single-person boat, with one controller for the tiller (rudder) and the other to control the tension in the sail via a rope and pulley. Manipulation of these two controls makes the boat move in the desired manner. I have never sailed a boat in my life and yet I found the game tons of fun and surprisingly immersive. You barely change your position as you sail. You can lean back and forth, you can move your arms, but you’re otherwise static in the smaller boat. In the 40-foot yacht version, however, you can teleport between different locations on the boat while sailing.

From a sound point of view, there was a series of specific noises that each boat made during operation, but basically the entire audio for the experience came down to a combination of wind and water. So, on the surface it might seem as though this game could have its audio produced in a lazy afternoon with a handful of noise loops to represent the two main elements. The VR nature of the project, however, allowed the audio to be so much more, and to provide significantly more presence than a few stereo ambiences would have provided. Wwise was core to creating an audio system that would allow VR Regatta to not just become more immersive, but to also allow sound to guide the player.

I have been doing research and project design work in spatial audio for several years now, working with Magic Leap, the Facebook Spatial Audio team, as well as several VR developers. There are many aspects to spatial audio that we are still getting to grips with. Some, I have discovered, can make a significant impact on how a world feels when an audience enters an experience. The first thing we are likely to notice when we enter a world is the sense of space. This is where I start with all VR projects. I create a spatial “world-tone”. This is usually an open space wind presence for outdoor worlds, or a room hum for internal locations. The exact content I use may alter but the process is usually very similar.

I select appropriate sound content for the project. In some cases this might be a barely audible whisper of wind; in the case of VR Regatta, the wind was generally at a higher level, as ‘no wind’ is the worst time to be sailing. I would then create a simple sound object for the wind sound file and set it to loop. This provided me with a single mono wind loop. Then, by either editing the existing sound file or sourcing three other suitable files, I would create a total of four looping wind sources. These would become my spatial world-tone.

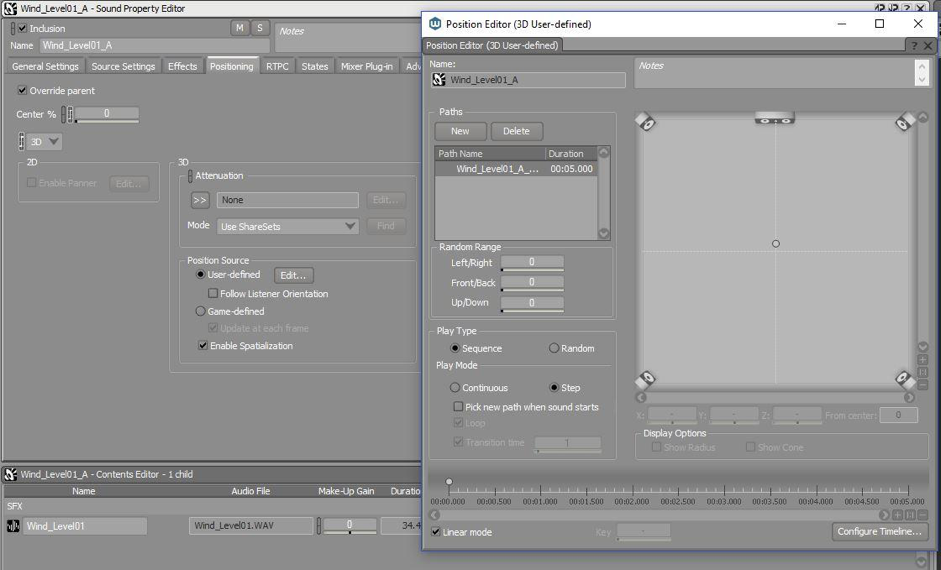

All four wind loops would be placed into the same sound Event, and that Event was then attached to the listener in game. I would often get the programmer to do this to ensure it was set up correctly for the particular project; but in general, this Event needs to emit from the exact same location as the Wwise listener, which is almost always attached to the VR camera rig. I would usually refer to this Event as Camera_Ambience. All four wind loops would play and loop continuously. It was the position source editor in Wwise, however, that allowed me to achieve the result I needed.

Note that Follow Listener Orientation is unchecked

Each wind sound was set to 3D, with no attenuation. Under the Position Source controls, you must untick Follow Listener Orientation. This means that when the VR head tracking tracks the head movement of the audience, the orientation of the wind loops does not alter. They stay locked to their world position. In the 3D User-defined Position Editor, I created a unique location for each of the four winds, one at each of north, south, east, and west of the central listener position. So, now the audience has four wind sounds enveloping their virtual heads, and as they rotate their head back and forth they will hear a changing perspective of these four wind sources. To further enhance this effect, I offset the distance of each compass point. So north might be set at 5 meters, south at 7, east at 4, and west at 6. This provides very subtle differences between each layer. These can be further enhanced by altering the output volume of each of the individual wind sources. The aim is to create a “point of difference.” As the audience rotates their head, they can hear that their perspective in relation to the virtual world changes, and this is the key. We are highlighting and supporting the act of head rotation in a subtle but noticeable manner.
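The geometry behind this world-locked layout can be sketched in a few lines of Python. This is only an illustration of the idea, not how Wwise computes positioning internally: the dictionary, the function names, and the bearing convention (degrees clockwise from north) are assumptions made for the example, while the distances are the ones suggested above.

```python
# Hypothetical layout: bearing in degrees clockwise from north, distance in meters.
# Distances follow the example values mentioned in the text.
WIND_EMITTERS = {
    "north": (0.0, 5.0),
    "east": (90.0, 4.0),
    "south": (180.0, 7.0),
    "west": (270.0, 6.0),
}


def relative_azimuth(source_bearing_deg, head_yaw_deg):
    """Angle of a world-locked source relative to the listener's nose.

    Result is in (-180, 180]; positive means the source is to the right.
    """
    diff = (source_bearing_deg - head_yaw_deg) % 360.0
    return diff - 360.0 if diff > 180.0 else diff


def perceived_layout(head_yaw_deg):
    """Where each wind loop appears to be for a given head yaw."""
    return {
        name: (relative_azimuth(bearing, head_yaw_deg), distance)
        for name, (bearing, distance) in WIND_EMITTERS.items()
    }


if __name__ == "__main__":
    # Looking north, then turning the head to face east.
    for yaw in (0.0, 90.0):
        print(yaw, perceived_layout(yaw))
```

Because the emitters do not follow the listener's orientation, turning the head by 90 degrees shifts every source by 90 degrees in the opposite direction, while the unequal distances keep the four layers from sounding identical.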

Most of us, upon entering a VR experience, will turn our heads, look back and forth, and acclimatize ourselves to our new environments. The above spatial world-tone immediately enhances the act of head rotation just as we experience in the real world. As we turn our heads, our relation to the world around us shifts and we feel like we are out in the open world. This technique in Wwise can emulate this real-world sense of space. For VR Regatta I did a similar thing with the sounds of the ocean. As the audience’s perspective is locked inside the boat, I could create the same sort of effect with the sound of the ocean. Emitters positioned around the boat provide the gentle lapping sounds of the water against the boat and give more support to the idea that the audience is surrounded by both wind and water. Once the boat starts to move, additional sounds are added to enhance the sense of movement.

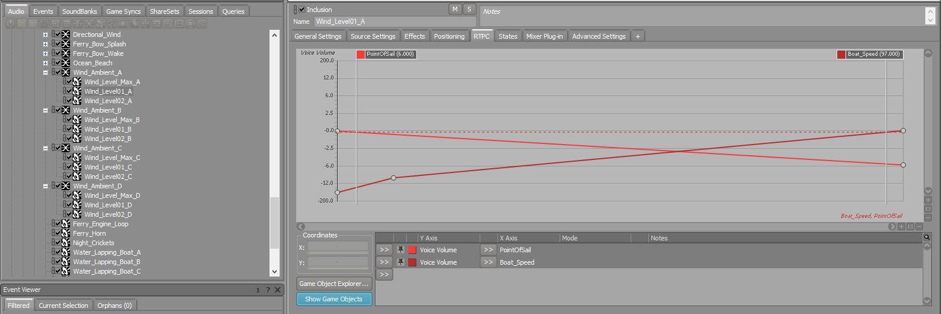

For a sailing experience, the wind needed to be much more than just an enveloping loop of wind sounds. While these provided a sense of space in the world, wind is a critical aspect of sailing. So, the Camera_Ambience wind objects actually have a range of states controlled by RTPC to increase and decrease the magnitude of the wind as needed. So, as the wind speed increases, the wind surrounding the audience would increase from a gentle breeze up to a significant and blustery gale. Added on top of that is a directional wind object that is positioned exactly where the wind is blowing from in relation to the audience. So, they would have wind all around them, but a directional source audible from a specific point. As they turn their head back and forth, the audience should be able to hear the wind coming from a specific direction. This allows the audience to listen and locate the wind direction and steer appropriately while sailing.
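As a rough illustration of how a single wind-speed parameter could blend between intensity layers, here is a minimal Python sketch. The 0 to 100 parameter range, the three layer names, and the linear crossfade shape are all assumptions for the example; in the actual project these curves would be drawn on RTPCs in Wwise rather than written in code.

```python
def layer_gains(wind_value):
    """Return linear gains (light, medium, strong) for a wind value in 0..100.

    Assumed mapping: the light layer fades out over 0..50, the medium layer
    peaks at 50, and the strong layer fades in over 50..100.
    """
    x = max(0.0, min(100.0, wind_value)) / 50.0  # normalize to 0..2
    light = max(0.0, 1.0 - x)
    medium = max(0.0, 1.0 - abs(x - 1.0))
    strong = max(0.0, x - 1.0)
    return light, medium, strong


if __name__ == "__main__":
    for value in (0, 25, 50, 75, 100):
        print(value, layer_gains(value))
```

A linear crossfade keeps the example easy to follow; an equal-power curve is a common alternative when the layers are uncorrelated noise such as wind.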

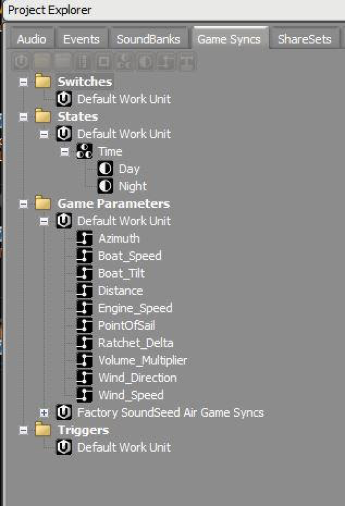

Each of the four compass point winds has 3 intensities and each intensity has two RTPC controllers

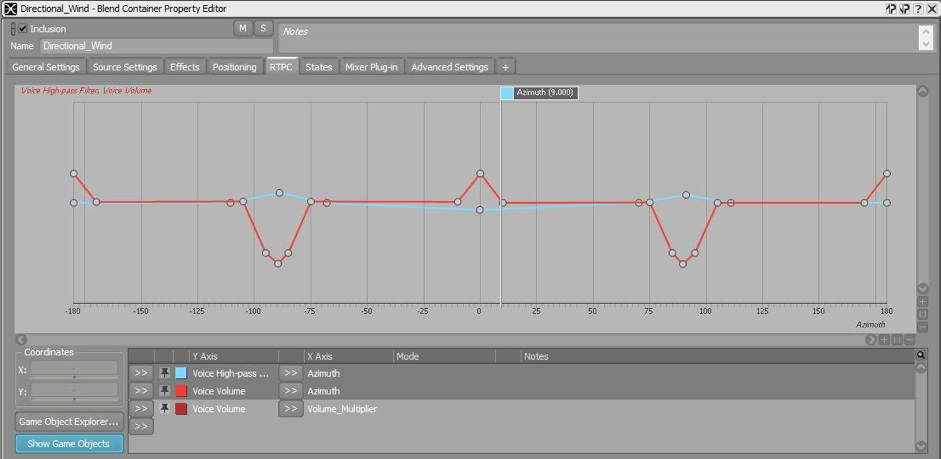

During development, we realized that wind in the real world sounds different depending on a person’s orientation to it. If you are facing a strong wind directly, it whistles past your ears and can be quite noisy. But if you turn your head 90 degrees so the wind is blowing directly into your ear, it can become strangely soft and quiet. It is the movement past our ears that magnifies the effect of the wind and produces this whistling effect. Adding another parameter to the project, we tracked the audience’s head position in relation to the positional wind source and I added filters so that the wind is at its loudest when you are directly facing it, and becomes softer as you turn towards 90 degrees away from it. This enhances the audience’s ability to figure out the exact direction the wind is coming from. So, now you could theoretically sail the boat relative to the wind direction without looking.
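The head-orientation parameter described here could be derived along the following lines. This is a hedged sketch only: the function name, the linear falloff, and the minimum gain of 0.3 are invented for illustration, and the behaviour beyond 90 degrees (wind from behind) is not described in the text, so the sketch simply holds the minimum there.

```python
def wind_facing_gain(head_yaw_deg, wind_from_deg, min_gain=0.3):
    """Gain for the directional wind based on how directly the listener faces it.

    Returns 1.0 when facing the wind and falls linearly to min_gain at
    90 degrees off-axis. Past 90 degrees the gain stays at min_gain, which is
    an assumption rather than something taken from the project.
    """
    # Smallest angle between the head direction and the wind source, 0..180.
    off_axis = abs((wind_from_deg - head_yaw_deg + 180.0) % 360.0 - 180.0)
    t = min(off_axis, 90.0) / 90.0
    return 1.0 - t * (1.0 - min_gain)


if __name__ == "__main__":
    for yaw in (0, 45, 90, 180):
        print(yaw, round(wind_facing_gain(yaw, wind_from_deg=0.0), 3))
```

In practice the computed angle (or the resulting value) would be sent to the audio engine as a game parameter, with the actual filter and volume curves shaped by ear.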

Via RTPC controllers, the directional wind behaviour alters depending on head rotation

Related to the above, we also realized that when sailing into the wind you hear the wind quite loudly as you move against it. But, when sailing with the wind, the wind can be practically silent. So, yet another RTPC and more controls for the wind allowed me to simulate this behaviour as well. In all, VR Regatta has 7 parameters just to control how the wind is heard by the audience during sailing. The importance of the wind in such an experience meant it was essentially the star of the show audio-wise.
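One plausible way to drive a parameter like this is the sailor's notion of apparent wind: the wind felt on board is the true wind velocity minus the boat's velocity. The article does not say that this is how the game computes it, so treat the following Python sketch, including its function name and bearing convention (degrees clockwise from north), as an assumption.

```python
import math


def apparent_wind_speed(true_wind_speed, wind_from_deg, boat_speed, boat_heading_deg):
    """Speed of the wind as felt on the moving boat.

    wind_from_deg is the bearing the wind blows FROM; the air itself moves
    toward the opposite bearing. Speeds share one unit (e.g. knots).
    """
    wind_to = math.radians(wind_from_deg + 180.0)
    heading = math.radians(boat_heading_deg)
    # Bearings are clockwise from north: x is east (sin), y is north (cos).
    wind_x = true_wind_speed * math.sin(wind_to)
    wind_y = true_wind_speed * math.cos(wind_to)
    boat_x = boat_speed * math.sin(heading)
    boat_y = boat_speed * math.cos(heading)
    return math.hypot(wind_x - boat_x, wind_y - boat_y)


if __name__ == "__main__":
    # 10-unit wind from the north, boat moving at 4 units.
    print(apparent_wind_speed(10.0, 0.0, 4.0, 0.0))    # heading into the wind
    print(apparent_wind_speed(10.0, 0.0, 4.0, 180.0))  # running with the wind
```

Heading straight into the wind adds the two speeds together, while running with it subtracts them, which matches the loud-versus-quiet behaviour described above.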

Bow, Port, Starboard and Stern wake sounds all respond to boat speed and tilt

Other parameters were used to control the output levels for the boat wake as it cut through the water. But again, because it is a VR experience, it was worth adding wake sounds not only to the front and rear of the boat, but also to the sides. As you steer the boat and the system detects your body movement, you can lean yourself and the boat closer to the water on either side. At this point, the sound of water movement on the lower side becomes louder as the water washes over the edge of the boat. Right the boat back to level and these side wakes reduce. All of these elements have added to the sense of realism within the experience.
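The relationship between boat speed, tilt, and the four wake emitters can be sketched as follows. Every number and name here is hypothetical (the maximum speed, the maximum heel angle, the level-boat side gain, and the sign convention that positive heel means leaning to starboard); the real mix would be tuned through RTPC curves in Wwise.

```python
def wake_gains(boat_speed, heel_deg, max_speed=10.0, max_heel_deg=30.0, level_side_gain=0.3):
    """Linear gains for bow, stern, port, and starboard wake emitters.

    All wakes scale with boat speed. The side the boat leans toward gets
    louder as heel increases; positive heel_deg means leaning to starboard.
    """
    speed = max(0.0, min(1.0, boat_speed / max_speed))
    heel = max(-1.0, min(1.0, heel_deg / max_heel_deg))
    starboard_lean = max(0.0, heel)
    port_lean = max(0.0, -heel)

    def side_gain(lean):
        # Rises from level_side_gain (boat level) to 1.0 (fully heeled).
        return speed * (level_side_gain + (1.0 - level_side_gain) * lean)

    return {
        "bow": speed,
        "stern": speed,
        "port": side_gain(port_lean),
        "starboard": side_gain(starboard_lean),
    }


if __name__ == "__main__":
    print(wake_gains(boat_speed=10.0, heel_deg=0.0))
    print(wake_gains(boat_speed=10.0, heel_deg=30.0))
    print(wake_gains(boat_speed=5.0, heel_deg=-15.0))
```

Righting the boat returns both side wakes to the same low level, while a stationary boat silences all four emitters regardless of tilt.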

10 RTPCs are used to control the playback behaviour of the wind and water audio

So, what is essentially a fairly simple experience clearly needs a significant level of complexity to control the various audio elements and provide a convincing and enjoyable atmosphere for the audience. Environmental elements such as birds in trees, water breaking on the beach, and insects at night all enhance the world but are simple set dressing when compared to the fairly complex implementation that was required to achieve the best sounds for the wind and the water.

It is worth mentioning that this project utilized the Auro®-HeadPhones™ plug-in, a spatial audio solution recommended by Audiokinetic. I honestly do not have much to say about this for the simple reason that it was easy to activate and set up, and it essentially worked with little or no additional tuning required. Considering that many current spatial audio solutions are complex to set up and are sometimes even unreliable in their functionality, the Auro®-HeadPhones™ plug-in was excellent to work with. Like many aspects of audio, when it works well it can often become invisible, and for this project, the spatial plug-in just worked. I had it set to the maximum number of surround channels to provide the highest spatial presence and it worked very well to enliven the world space of VR Regatta.

A simple concept does not mean we can just throw in some basic sound design and be done with it. The very reason why VR Regatta works so well is that the team has taken a simple concept but refined it with excellent graphics, intuitive controls, and an overall level of polish that makes the simplicity shine. The audio for VR Regatta is designed to enhance all aspects of the experience and make the audience’s experience as engaging as possible. As is often the case with audio, if we do our job really well, most of the audience will never even notice, but we can bring worlds to life and make the experiences we create so much more enjoyable because of the effort we put in.

Comments

Corentin Jouet

September 07, 2017 at 03:39 am

Interesting read, thanks for sharing.

Gerhard Lourens

April 09, 2018 at 02:23 am

Thanks for sharing your insights and experience with us! After I read your blog the first time, I bought VR Regatta and did some sailing. Subsequently I did my RYA Level 1 sailing course and now have come back with some more context. I agree with you that the MarineVerse team have done a great job of polishing a simple concept into an enjoyable product and the ambient sounds greatly contribute. Kind regards, Gerry, South Africa