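What sound do you think a mysterious, dangerous, ghost-like creature makes? And how does it affect the sounds around it? Surprisingly, the sound of a broken coffee machine turns out to be a perfect fit for a ghost. The creature sucks in the surrounding sound, leaving only a cold, lifeless ambience. As the ghost closes in, an urgent musical phrase starts to play and grows steadily louder... You run for your life, but you can't shake it off. There is no time to look back, and as it is about to catch you, the air freezes and the music intensifies! The next moment you cannot move, and every sound disappears. You gasp for breath, and ice-cold air fills your lungs. Then everything goes dark.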

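I'm Troels from the development team of Aporia: Beyond the Valley. I worked on the game through to the end of production as sound designer, composer, and the person responsible for Wwise integration and optimization (in short, the sound guy).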

The story

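First, some background on the game. It is a first-person exploration, adventure, and puzzle game set in an ancient valley, and its story unfolds without any text or dialogue. We built it in CryEngine, which is particularly good at creating vast, beautiful environments. Ruins and ancient artifacts are scattered throughout the game world, and while the player explores this abandoned land, something watches from the darkness and patrols the forest. It is a ghostly creature that acts as the player's antagonist. This ghost is in fact central to the game and is deeply tied to the flow of the story. By the way, although the game has scary moments, it was never designed or planned as a horror game.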

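The game contains a large amount of audio, and in places the audio behaves in fairly complex ways, so Wwise was a natural candidate. It lets you connect remotely to the game and mix, monitor, and optimize performance while playing, so we adopted it as our audio integration software. Another big attraction of Wwise was that I, as sound designer and composer, could control a very wide range of things myself without relying on an audio programmer. Wwise had every feature I wanted, and the programmers could quickly integrate the Events I created. Once an Event is integrated, it is also easy to update the audio it triggers later on.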

In this blog post I'd like to talk about the sound design of that ghost and the technical side of integrating its sounds.

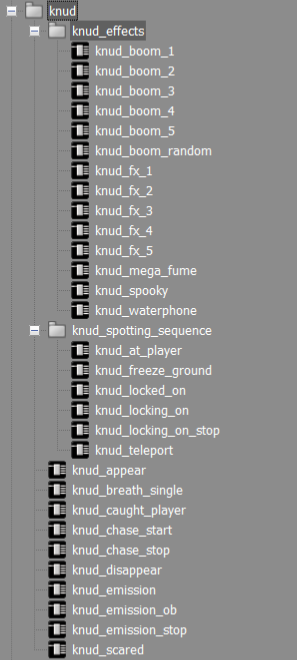

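The list of Events related to the ghost. (According to the lore the ghost has an official name, but within the development team we called it Knud, a traditional Scandinavian name. Don't ask why.)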

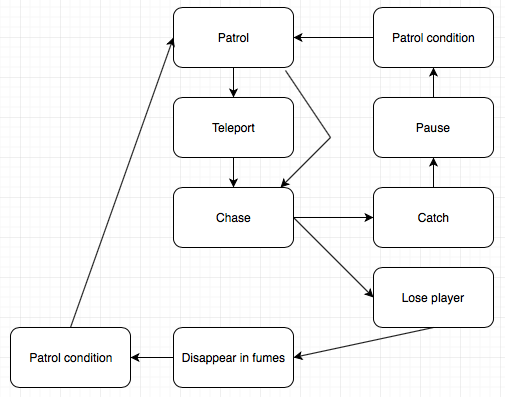

To make it easier to see how the Events behave in the game, we laid out the ghost's AI logic as a behavior tree.

The mood we were aiming for with the ghost as an enemy

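Through its sound, we wanted to make the ghost both terrifying and sorrowful. Early on, our game director Sebastian Bevense asked for "clicking sounds". What came to my mind was the distinctive throat sound of the ghost in the film The Grudge. So my goal was to achieve the same feel while avoiding unwanted associations as much as possible. As the list above shows, there are many sounds and Events tied to Knud besides the clicking. I will explain the role of each Event in more detail later. What I always find difficult about designing the sound of an abstract entity is that there are often no concrete expectations for what it should sound like. So where on earth do you start with the sound design of a big, dark, floating ghost...? In situations like this I often experiment with old recordings and a variety of synthesizer sounds while imagining which parts of the ghost would actually make noise.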

The only obvious element of the ghost was that it had some kind of cloth that flaps when it moves. So: flapping cloth and clicking sounds. That is actually a pretty useful brief!

How we did it

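The moment Sebastian asked for clicking sounds for the ghost, the sample that came to mind was one of an old coffee machine that I had heard just a week earlier. I don't quite remember where I found the sample, but it is a sound that anyone would recognize.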

The coffee machine sample, original version

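I ran it through a series of processes and blended it with various sounds that I thought might fit. Sound designers tend to stack sound layers, and when you overdo it there are so many overlapping elements that the effect itself is ruined and the whole thing turns muddy (or maybe that's just me). When I export sound effects to Wwise, I like to export them in layers. For example, I split the layers by content into Low, Mid, and High (depending on the type of sound). Then, after importing them into Wwise, I hook the Event up to a Blend Container that holds the various layers.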

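That way, instead of adjusting a single sound, I can mix and randomize the individual layers together. In my experience this approach gives better results: because I can fine-tune and mix the sound in detail, I can get much closer to what I want the player to feel in a given situation. I find mixing this way far more efficient than building a sound while only watching a video of a fixed event, animation, or interaction. Of course I start by designing the sound against video, but I know from experience that watching a video of a game feels different from playing it. That is why exporting in layers makes sense to me.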

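Naturally, this approach pushes the Wwise voice count up considerably. To get around that and optimize performance, all the sounds have to be baked by hand at the end of development. I make several recordings of the parent Blend Container, cut them into a number of one-shots, and put those into a Random Container, so that in the end a single sound is triggered instead of several. It is tiring work, and I always wonder whether there is a more efficient way.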
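As an illustration of what "baking" means here, the following is a minimal sketch that sums several layers into single one-shot buffers with a random gain per layer. It is not the workflow described above, which relied on recording the parent Blend Container inside Wwise. The function names, the gain range, and the representation of audio as lists of float samples are all assumptions made for this example.

```python
import random


def bake_variation(layers, rng, gain_range=(0.7, 1.0)):
    """Sum layers into one buffer, applying a random gain to each layer.

    `layers` maps a hypothetical layer name to a list of float samples.
    Shorter layers are treated as silence past their end.
    """
    length = max(len(samples) for samples in layers.values())
    mixed = [0.0] * length
    for samples in layers.values():
        gain = rng.uniform(*gain_range)
        for i, sample in enumerate(samples):
            mixed[i] += gain * sample
    # Normalize only if the sum would clip.
    peak = max(abs(sample) for sample in mixed)
    if peak > 1.0:
        mixed = [sample / peak for sample in mixed]
    return mixed


def bake_variations(layers, count, seed=0):
    """Produce `count` baked one-shots, e.g. to fill a Random Container."""
    rng = random.Random(seed)
    return [bake_variation(layers, rng) for _ in range(count)]
```

Each returned buffer stands in for one one-shot, so at runtime only one voice plays per trigger instead of one voice per layer.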

The processed coffee machine sound.

The ghost's Events and abilities

Now let's look at the various Events related to the ghost. Rather than explaining everything in detail, I will focus on the important sounds.

- emission (the sound the ghost emits)

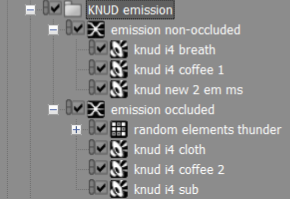

One of the first ghost sounds we considered was its emission, the sound the ghost gives off.

Because the ghost plays a central role, we wanted the player to feel its presence. The perfect way to do that was to emit a 3D sound in all directions around the ghost.

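Early in the integration we set up an RTPC that represents the distance to the ghost. This makes it easy to control the volume of several layers. For example, the ghost's clicking becomes audible earlier than the sub layer. Because each layer reacts differently to the RTPC, the approach of the ghost is a more dynamic experience than a single looping layer would be. Besides controlling layers, the RTPC also drives the gain of one band of a Wwise multiband distortion, so that the sound gains presence and feels more broken as it gets closer. We used the RTPC to control bus parameters as well. This gives fine control over the volume of the ambience and footsteps, so these sounds gradually fade as the ghost closes in and tries to grab the player.

Being able to send the output level of a signal to an RTPC with the Wwise Meter Effect is another of my favorite Wwise features. Just as in music production, routing signals across multiple buses to control parameters (external side-chaining) is a very convenient and powerful way to control the mix. It is an essential tool for achieving a clean mix with non-linear audio playback.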
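The idea of one distance RTPC driving several layers differently can be sketched as a set of piecewise-linear curves, one per target. This is only an illustration: the curve points, distances, and target names below are invented for the example and are not the values used in the game, and in practice these curves are drawn in the Wwise authoring tool rather than written in code.

```python
from bisect import bisect_right


def evaluate_curve(points, x):
    """Piecewise-linear lookup over (x, y) points sorted by x, clamped at both ends."""
    xs = [p[0] for p in points]
    if x <= xs[0]:
        return points[0][1]
    if x >= xs[-1]:
        return points[-1][1]
    i = bisect_right(xs, x)
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    t = (x - x0) / (x1 - x0)
    return y0 + t * (y1 - y0)


# Hypothetical curves: (distance to ghost in meters, linear gain from 0 to 1).
LAYER_CURVES = {
    "clicks": [(0.0, 1.0), (40.0, 0.0)],           # audible from far away
    "sub": [(0.0, 1.0), (15.0, 0.0)],              # only comes in when close
    "distortion_band": [(0.0, 1.0), (10.0, 0.0)],  # "broken" feel at close range
}


def layer_gains(distance):
    """Return the gain of every target for one value of the distance RTPC."""
    return {name: evaluate_curve(curve, distance)
            for name, curve in LAYER_CURVES.items()}
```

With these assumed curves, `layer_gains(20.0)` gives the clicks half gain while the sub layer is still silent, which is the "clicks are heard earlier than the sub" behavior described above.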

After a few iterations, we arrived at a result we were happy with.

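I think the hard part of creating sounds for an abstract character like this one is keeping the object's sounds consistent, so that they are all recognizable as coming from the same object. I tend to stack too many sounds, and then the whole thing turns muddy and nothing stands out. I hope that one day I will manage to practice the idea that less is more! I believe that keeping a sound simple, minimal, and effective gives a better result in 95% of cases.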

A recording of the ghost's emission approaching from a distance

- Occlusion

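One problem we noticed during testing was that the ghost's emission could be heard at full volume even when the ghost was on the other side of a wall. The ghost AI should not be able to detect the player in that situation, yet it sounded as if danger was imminent. Here we want the player to sense that the ghost is nearby without the intense layers playing. As a solution, we split the ghost's emission into two Blend Containers and made only one of them subject to occlusion. Listening to the recordings, you can hear that the occluded container carries the low end, and that it is this part, reacting to occlusion, that conveys dread and gives the player a sense of danger.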

A recording of the emission that does not respond to occlusion (1 meter away).

A recording of the container that responds to occlusion (1 meter away).

The spotting sequence

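If the player keeps staring at the ghost, the ghost senses it and gradually becomes aware of the player. Eventually it gets angry, charges up energy, and teleports toward the player. A terrifying chase begins from there: the music grows tenser and becomes more intense as the ghost closes in. Here I will explain some of the Events in the spotting sequence, in which the ghost discovers the player. Every signal coming from the ghost goes into either the ghost bus or the critical sfx bus. The other buses (music, non-critical sfx, ambience, footsteps, and so on) are ducked by these two buses whenever they receive an important signal. This keeps the important sounds in front while keeping the mix clean.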
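The ducking described above can be sketched as a mapping from the metered level of the priority buses to an attenuation applied to the other buses. This is a simplified illustration of side-chain ducking in general, not the project's actual setup: the threshold, range, and ducking depth are assumed values, and the bus names are written as Python identifiers for the example.

```python
def duck_gain_db(meter_db, threshold_db=-40.0, full_db=-10.0, max_duck_db=-12.0):
    """Map a metered level (dBFS) on a priority bus to attenuation in dB.

    Below `threshold_db` nothing is ducked, above `full_db` the full
    `max_duck_db` applies, and the range in between is interpolated linearly.
    """
    if meter_db <= threshold_db:
        return 0.0
    if meter_db >= full_db:
        return max_duck_db
    t = (meter_db - threshold_db) / (full_db - threshold_db)
    return t * max_duck_db


def db_to_linear(db):
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)


def apply_ducking(bus_levels, meter_db,
                  ducked=("music", "non_critical_sfx", "ambience", "footsteps")):
    """Scale the ducked buses and leave the priority buses untouched."""
    gain = db_to_linear(duck_gain_db(meter_db))
    return {name: level * gain if name in ducked else level
            for name, level in bus_levels.items()}
```

A real setup would also smooth the meter value over time (attack and release) so the ducked buses do not pump; that is left out here to keep the mapping itself visible.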

- locking on (the ghost fixes on the player)

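As explained, when the player looks at the ghost, the ghost gradually becomes aware of the player. To warn the player, we added a riser that expresses how likely the player is to be discovered. If the player looks away during the riser, the riser stops. On its own, though, that cuts the sound off abruptly. We tried a fade-out, but the stop still felt wrong. So the solution was to send the container's signal to an aux reverb bus with a long tail. When the player looks away from the ghost, the container stops and the sound fades out right away, but the reverb tail continues for a few seconds, giving a more natural and pleasant fade-out. In fact, all of the ghost's sounds are sent to this aux bus, which helps keep the various ghost sounds consistent.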

- locked on (eye contact) > freeze ground (the ground freezes) > teleport > at player (arrival near the player)

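Stare at the ghost and you are in trouble. Once it has noticed the player, the ghost charges up energy and prepares to rush. When the ghost locks its focus onto the player's body, the surrounding ground freezes over and you hear the ice creaking. A few seconds later it comes at you by teleporting (a very fast move). To convey distance during the teleport, the ghost emits a howling 3D sound as it flies. When it reaches its destination, the ghost appears right next to the player and starts the chase. To put pressure on the player at that point, a loud impact plays, followed by a Shepard-tone-like music loop that serves as the chase sound. The chase loop consists of three or four different layers that get progressively more intense. The layers sit in a Blend Container and crossfade from one to the next based on the same distance-to-ghost RTPC mentioned earlier.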
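The layer crossfading in the chase loop can be illustrated with an equal-power crossfade between adjacent layers. This is a sketch under assumptions: the normalization of distance to a 0..1 value, the 30 meter maximum, and the equal-power curve shape are choices made for the example, while the real crossfades are authored in the Blend Container.

```python
import math


def distance_to_intensity(distance, max_distance=30.0):
    """Normalize distance to 0..1, where 1 means the ghost is at the player.

    `max_distance` is an assumed value, not taken from the game.
    """
    clamped = min(max(distance, 0.0), max_distance)
    return 1.0 - clamped / max_distance


def crossfade_gains(intensity, layer_count):
    """Equal-power crossfade across `layer_count` layers (needs at least 2).

    At most two adjacent layers are audible at once, and their squared
    gains always sum to 1, so the perceived level stays roughly constant.
    """
    intensity = min(max(intensity, 0.0), 1.0)
    position = intensity * (layer_count - 1)
    lower = min(int(position), layer_count - 2)
    frac = position - lower
    gains = [0.0] * layer_count
    gains[lower] = math.cos(frac * math.pi / 2)
    gains[lower + 1] = math.sin(frac * math.pi / 2)
    return gains
```

For example, `crossfade_gains(distance_to_intensity(15.0), 4)` lands halfway between the second and third layers, so both play at equal gain while the calmest and the most intense layers are silent.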

From being locked on to the ground freezing.

Teleporting closer and arriving near the player

The chase loop from far to near, followed by the player being caught

That's it for this blog post! I hope it was useful. Feel free to use it as a reference when you design the sound of an abstract, dark character!

Finally, here is a little of the music from the game. This one isn't scary.

Game website: aporiathegame.com

Developer website: investigatenorth.dk

Troels' website: ubertones.com
