This could be both the dream and nightmare of a Foley artist: walking a character whose look, attitude, and composition can be endlessly renewed at the press of a button.

Dream or nightmare, this is what I got involved with: more than 300 million combinations can be achieved in Benjamin Vedrenne's Random Access Character project. All I had was 12 days and an email from its creator saying he would probably not be able to help me during that time. This blog post presents an approach using Wwise and Unity that will hopefully be a useful source of information for anyone interested in the problem of Foley for games. It is neither definitive nor absolute, and I invite anybody to suggest ways to push the particular designs presented here further.

Days 1 & 2: Wrapping my head around a limited infinity, doing the math, and drawing waterfalls

From the very beginning, I interpreted Random Access Character as a generator of accidental micro-narratives. Each new generation was a unique character in a unique situation: a tree man escaping a forest, a raspberry girl skipping in the rain, a drunk coke boy staggering at the entrance of a stadium, a bunch of eggs crawling out of their nest... and the list goes on.

The first important thing to consider was how to keep the number of recorded assets to a minimum while retaining maximum expressiveness and variety. The generation script in Unity worked as follows: each new generation would pick one of the 50 available walk animations and assemble a character from between 1 and 4 different objects out of 52 unique ones. The selected objects would then be randomly scaled. According to a mathematician friend, this can generate up to 331,651,200 different characters, not counting duplicates and without taking colors and textures into account.
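A minimal sketch of that generation step, written in Python for brevity rather than the project's actual Unity C# script. The scale range `SCALE_RANGE` and the function name `generate_character` are hypothetical; only the counts (one of 50 animations, 1 to 4 distinct objects out of 52, random scaling) come from the description above.

```python
import random

# Hypothetical sketch of the character generator described above;
# the real Unity script is not reproduced here.
NUM_ANIMATIONS = 50
NUM_OBJECTS = 52
SCALE_RANGE = (0.5, 2.0)  # assumed bounds; the real ones are not given


def generate_character(rng: random.Random) -> dict:
    """Pick one walk animation, then 1 to 4 distinct objects with random scales."""
    animation = rng.randrange(NUM_ANIMATIONS)
    count = rng.randint(1, 4)  # randint is inclusive on both ends
    objects = rng.sample(range(NUM_OBJECTS), count)  # no object picked twice
    scales = [rng.uniform(*SCALE_RANGE) for _ in objects]
    return {"animation": animation, "objects": objects, "scales": scales}


character = generate_character(random.Random(2017))
print(character)
```

Seeding a `random.Random` instance keeps a given character reproducible, which is handy when tuning the sound of one specific combination.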

After playing the game for a little while, I noted down which footstep types would cover most of the animations. I retained the following set: soft, normal, heavy, short drag, long drag, and tiptoe. I had initially planned to record "drunk" footsteps, but quickly realized that those were just a disorganized sequence of all the others. Footstep assets alone wouldn't be enough, though: I also needed the swaying sound of the body. I had initially planned three different loops per object to better match different walking speeds, but they turned out to be too time-consuming to get right for the benefit they added, so I reduced them to a single loop per object.

Then a few additional questions arose: "Should I have different floor surfaces? Should I differentiate left and right feet? Should I distinguish between toe and heel?" That's typically the moment when one thinks one has grasped the project and starts making everything more complex. But all these questions need to be weighed against the reality of the timeline and the project's true nature and scope. RAC was much simpler than it looked, and the simpler the final design, the more time I would have to fine-tune it.

The current math was as follows:

52 objects × 6 footstep types × 7 variations each = 2,184 unique footsteps

52 objects × 1 presence loop each (used at every walking speed) = 52 loops

2,184 footsteps + 52 loops = 2,236 assets needed.

To reduce this number further, I figured out that some of those objects could in fact be imitated just by playing with the Pitch parameter in Wwise. For example, the recordings of a small plastic box could also work for a bigger one, with its lower resonant frequency, simply by copying their hierarchy in Wwise and pitching it down. Ultimately, I worked out that 35 unique objects needed to be recorded, which would make a total of 1,560 assets in the end.
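As a rough starting point for such pitch offsets, one can assume that geometrically similar objects of the same material resonate at frequencies inversely proportional to their size. The offset in cents is then -1200 × log2(size ratio), so a box twice as big comes out one octave (-1200 cents) lower. The helper `pitch_offset_cents` and its ±2400-cent clamp are assumptions for illustration; the post doesn't say how the actual values were chosen, and tuning by ear would still be needed.

```python
import math

# Rule-of-thumb assumption: for geometrically similar objects of the same
# material, resonant frequency scales roughly as 1 / size.
MAX_CENTS = 2400  # assumed clamp: two octaves either way


def pitch_offset_cents(size_ratio: float) -> float:
    """Pitch offset (in cents) for reusing a recording on an object
    `size_ratio` times larger (ratios below 1 give a positive offset)."""
    if size_ratio <= 0:
        raise ValueError("size_ratio must be positive")
    # f_new / f_old = 1 / size_ratio, and cents = 1200 * log2(f_new / f_old)
    cents = -1200.0 * math.log2(size_ratio)
    return max(-MAX_CENTS, min(MAX_CENTS, cents))


print(pitch_offset_cents(2.0))  # -1200.0: one octave down
```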

Then I started drawing possible Wwise hierarchies on paper to see how I could hook them up to Unity. I finally settled on the following hierarchy, with Unity simply concatenating strings to build each Event name:

Each footstep tagged on the animations would trigger a specific function depending on the footstep type. That function would then gather the names of the objects randomly picked for the current character generation.

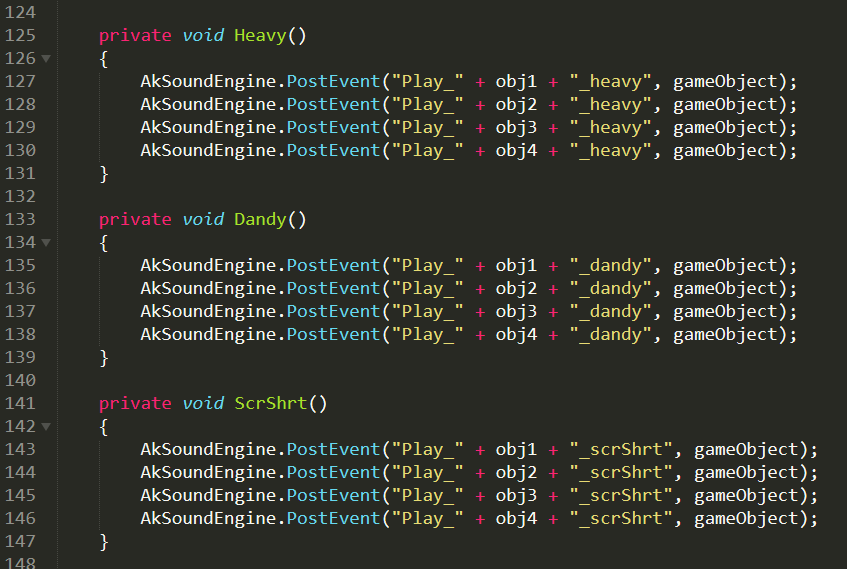

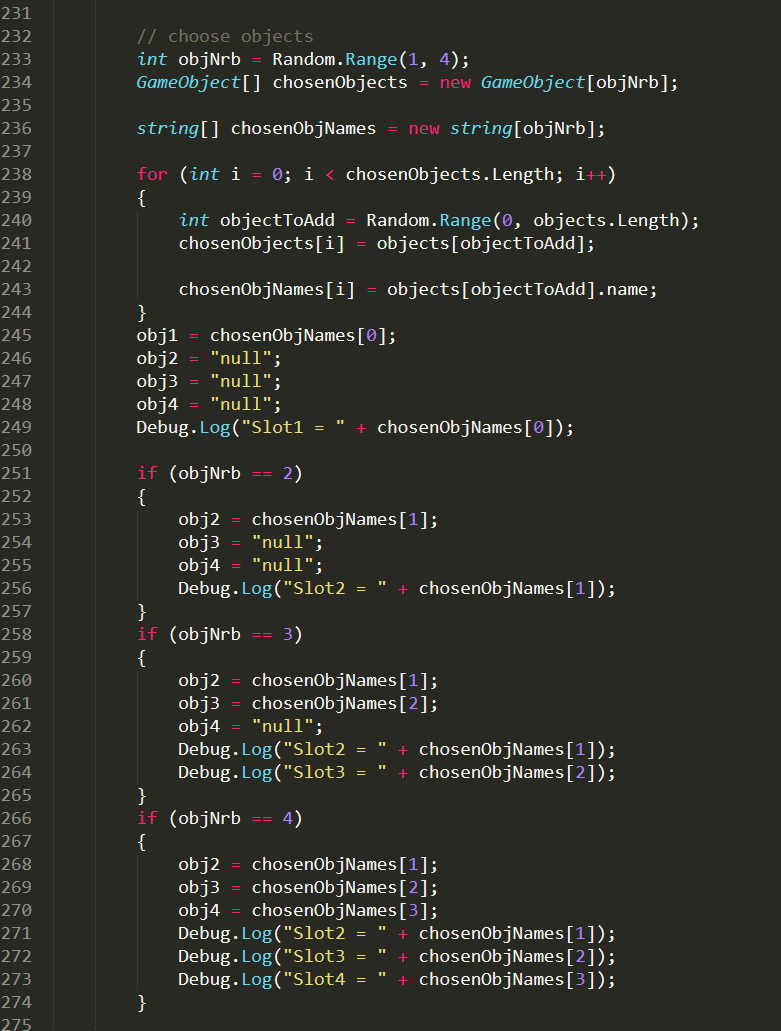

Excerpt of the code handling the communication between Wwise and Unity

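The concatenation can be sketched as follows, in Python rather than the C# shown in the screenshot. The naming scheme `Play_Footstep_<Type>_<Object>` and the helper `footstep_event_names` are hypothetical; the real Event names live in the Wwise project. In Unity, each resulting name would then be posted through the Wwise integration, for example with `AkSoundEngine.PostEvent(name, gameObject)`.

```python
# Hypothetical naming scheme; the project's real Event names are not shown.
FOOTSTEP_TYPES = ("Soft", "Normal", "Heavy", "ShortDrag", "LongDrag", "Tiptoe")


def footstep_event_names(footstep_type: str, object_names: list[str]) -> list[str]:
    """Build one Event name per object on the current character."""
    if footstep_type not in FOOTSTEP_TYPES:
        raise ValueError(f"unknown footstep type: {footstep_type}")
    return [f"Play_Footstep_{footstep_type}_{name}" for name in object_names]


# A character assembled from three objects, hitting a heavy footstep:
print(footstep_event_names("Heavy", ["Egg", "PlasticBox", "Branch"]))
```

Keeping one function per footstep type, as described above, means the animation tags only need to carry the type; the object list comes from the current generation.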

End of day 2: I added the necessary line to the main script of the game and tested the system with sample bongo sounds. It works!

Days 3, 4, 5, & 6: Gathering props, improvising a foley stage in Paris, and breaking a few eggs

Commercial footstep libraries were of no help here, considering that very few of them feature walking eggs, raspberries, or rattling glass coke bottles. Out of absolute interactive masochism, I chose to perform the foley without any video or picture of the game. I really wanted to see the whole thing come together in Unity. And oh, the pleasure I got the first time I heard the foley assets playing together in real time!

Day 3: the first set of foley props


The recordings were made with a dual-mono setup: a hypercardioid and an omnidirectional microphone, kept in phase. Then came 4 full days of frantic shaking, smashing, and rubbing with leaves, empty beer cans, kettlebells, fruit, tree branches, etc.

Half of the days were spent recording and the other half editing in Reaper.

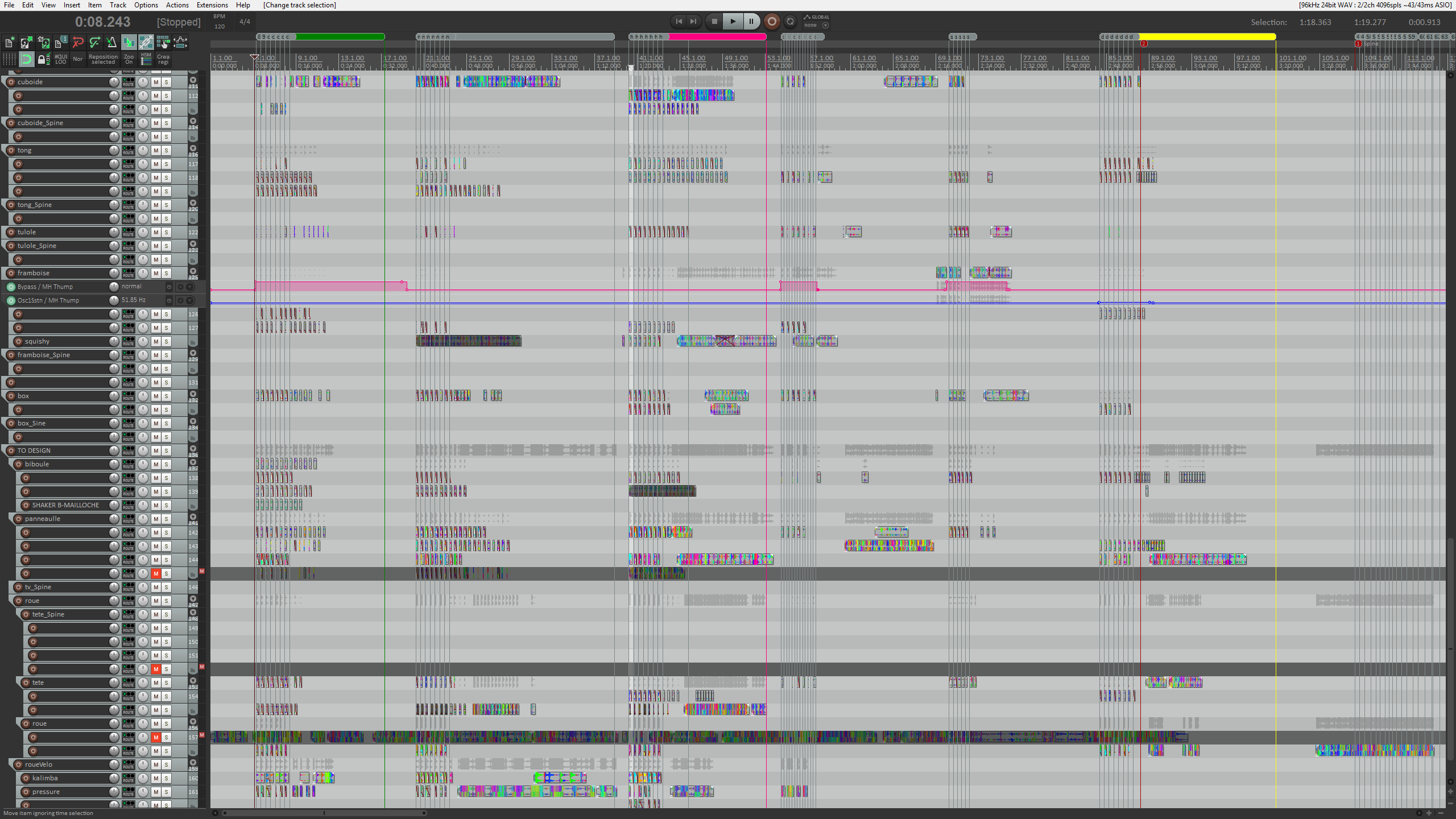

All the foley editing was done in Reaper


Days 7, 8, 9, & 10: An audio designer's routine, and better expressiveness through simple dynamic techniques

Slowly the characters came alive in Unity, with every new prop adding its unique expressiveness and sonic footprint.

To give more life and rhythm to the overall sound, I added some ducking compression to the bus of the spine movements in Wwise, so that each footstep would briefly dim the body sound loops. In some animations, I also used special events that made the spine movements louder or softer for a very short time (as in the drunk animation, when the character almost falls down). Other animations would specifically adjust the balance between feet and body sounds to better fit the different pacing of the characters.
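The ducking idea can be sketched as a gain envelope on the body loops that drops at each footstep and recovers shortly after. This Python sketch assumes an instant attack, a linear release in dB, and hypothetical values for the depth (`DUCK_DEPTH_DB`) and recovery time (`RELEASE_S`); it illustrates the concept only and does not model the actual Wwise compressor on the bus.

```python
# Conceptual sketch of footstep-triggered ducking on the body loops.
# Assumptions: instant attack, linear release in dB, fixed depth.
DUCK_DEPTH_DB = 6.0  # hypothetical attenuation at the moment of a footstep
RELEASE_S = 0.2      # hypothetical time for the body loop to recover


def body_loop_gain_db(t: float, footstep_times: list[float]) -> float:
    """Gain (in dB) applied to the body loops at time t (seconds)."""
    gain = 0.0
    for t0 in footstep_times:
        elapsed = t - t0
        if 0.0 <= elapsed < RELEASE_S:
            # Linear recovery from -DUCK_DEPTH_DB back to 0 dB.
            duck = -DUCK_DEPTH_DB * (1.0 - elapsed / RELEASE_S)
            gain = min(gain, duck)  # overlapping footsteps: deepest duck wins
    return gain


steps = [0.0, 0.5, 1.0]
for t in (0.0, 0.1, 0.3, 0.5):
    print(t, body_loop_gain_db(t, steps))
```

A short release keeps the body loop pumping in time with the feet; a longer one would smooth the effect into a general dip while walking.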

Days 11 & 12: Ambiances, time zones, and taking into account the players' typical play time

On day 11, I had almost reached the content-complete state. Now I needed ambiances to further enhance the “accidental narratives” of the game.

A key consideration is how long players would play with this experience, as this should directly affect the content and design of the ambiances. How long should they be? How many do we need? How can we create bonds between the different characters a player will generate?

Ambiances in games are generally designed so that they don't draw too much attention to themselves. Otherwise, they run the risk of exposing repetitiveness, either on a micro level (a WAV file looping) or on a macro level (the system being finite). Therefore, “backgrounds”, or “air” tracks in their simplest form, generally have flat content with most of the singular events edited out. As the average RAC player would not play for hours, I could afford to "give away" all the content within a few minutes, with some prominent events (like the PA voice in the French train station) to strengthen the storytelling.

The idea was to create parallel stories happening at the same time, a bit like in Jim Jarmusch's movie Night on Earth, where you follow the night shifts of 5 taxi drivers in different time zones. The ambiances would be picked randomly and, when switching back to an already heard ambiance, playback would resume at the exact elapsed time, as if it had kept playing all along. This ended up giving RAC a magical feeling and a sense of temporal realism, as if something had happened and all those creatures had just come alive at the same time in different locations.
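One way to get this "parallel timelines" behaviour is to treat every ambiance as if it had started when the session began and had been looping ever since; switching back to an ambiance then means seeking to the elapsed time modulo its length. The class below is a hypothetical Python sketch under that assumption (integer milliseconds, loop lengths known up front). The post doesn't describe the actual implementation, though in Wwise's Unity integration a seek call such as `AkSoundEngine.SeekOnEvent` would be one way to apply the computed position.

```python
# Hypothetical sketch: all ambiances share one session clock and are
# assumed to have been looping since the session started.
class AmbianceTimeline:
    def __init__(self, session_start_ms: int, lengths_ms: dict[str, int]):
        self.session_start_ms = session_start_ms
        self.lengths_ms = lengths_ms  # ambiance name -> loop length in ms

    def resume_position_ms(self, name: str, now_ms: int) -> int:
        """Playback position for `name`, as if it had never stopped."""
        elapsed = now_ms - self.session_start_ms
        return elapsed % self.lengths_ms[name]


timeline = AmbianceTimeline(0, {"TrainStation": 180_000, "Forest": 240_000})
print(timeline.resume_position_ms("TrainStation", 200_000))  # 20000
```

Because the position depends only on the shared clock, it doesn't matter how many times or in which order the player switches; each location keeps its own consistent "now".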

***

Those were 12 intense days, and I am still amazed at the amount of work one can achieve with the technologies currently available. This project merged one of the most primitive sound activities, foley, with some of the most recent interactive tools. I hope you enjoy RAC's incongruous bestiary as much as I did, and that we will see more and more interactive projects pushing foley in the future!

Random Access Character was originally created by Benjamin Vedrenne for ProcJam 2017.

It was showcased in 2018 at Pictoplasma in Berlin in the form of an interactive installation.

More information and projects can be found on Ben's website (http://glkitty.com/).
