When I write music for video games, I always wonder how I can make it more meaningful for the players. As a composer, you typically discuss the story, the emotions, the themes, the colors, and other creative elements of a project with the Creative Director and the Audio Director. I also tend to ask questions about how the music will interact with the players.

I am a classically trained composer who loves electronic music. I love to blend live musicians with digital processing in unique ways, and one of my previous soundtracks, Remember Me, won a lot of awards for its 'groundbreaking musical approach'. However, the interactive approach was just as important to me as the composition itself.

In short, for Remember Me, we recorded a 70-piece orchestra in London and had the recorded musicians follow the player’s actions on the very next beat, at 140 bpm. If you are a Composer or Audio Director, or have any experience in music for games, you know that this is not easy. And, although the result was amazing, I swore to myself that I would never ever do something as complex as this...until I was introduced to Get Even.

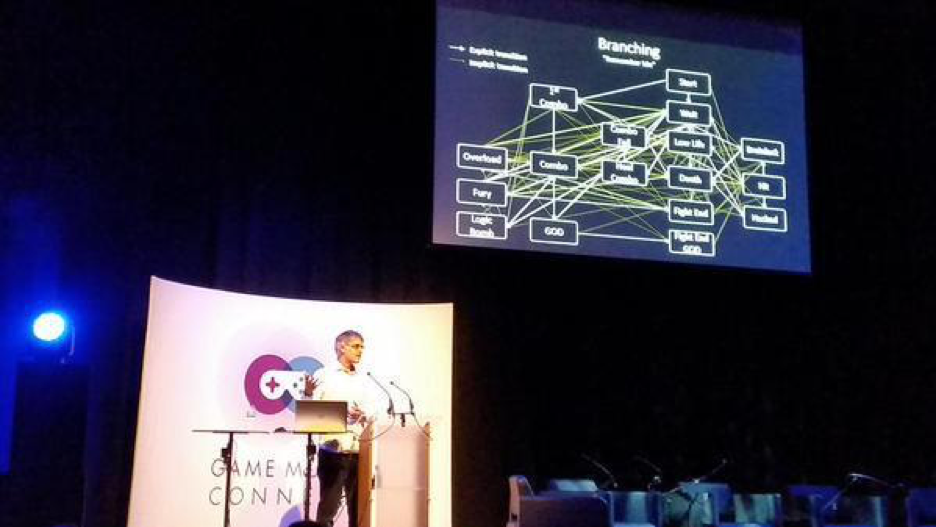

Simon Ashby, VP Products at Audiokinetic - sharing Remember Me Interactive Music Branching at Game Music Connect (London)

Get Even

Get Even was developed by The Farm 51, a Polish developer, and published by Bandai Namco. When they explained the concept of the game to me, I was very surprised. They wanted to make a VR game that is not in VR. They wanted to make a shooter / walking simulator / detective / story-driven game. They wanted players to cry when they finished the game. This sounded amazing to me, so I embraced the work with all my heart and passion.

Get Even: Real-Time Generated Interactive Music Introduction

What is Interactive Music?

I’ve been travelling the whole world advocating interactive music to composers, developers, and publishers, sharing my vision and enriching it with the experiences of the industry professionals I meet. Most of my exchanges showed me that, for many people, music becomes 'interactive' as soon as it can change depending on the player’s actions. Fair enough. Writing and producing some (amazing) music and delivering stems to create layers may seem sufficient, and it is certainly efficient, but should we stop here forever? I doubt it. Our medium is in constant evolution. We have improved so much of it: AI, lighting, physics, shaders, and more. Yet not much has changed when it comes to music. Interactive music is only at its beginning, and I am hopeful that in the next 10 years we will have a much wider range of uses for music.

Video games happen in real-time, why not music?

I’ve always had a fascination for video games because the pixel that is moving on my screen is rendered as I’m watching it. It is alive only here and now because of my actions. The best analogy for this in music would be electronic music. When you are composing using synths (analog) or computers (digital), the sound is generated in real time by electricity, CPU, or DSP. This is as fascinating to me as the moving pixel. In the very first games, we were used to music being generated in real time. Using the sound chip included in the console, the music could follow the action bit by bit if needed. When the PlayStation came out with its CD drive, we lost something. Music became a passive track played in the background, although it was now performed by live musicians and it sounded amazing. Years later, we started to create layers of music that could change in real time according to the player’s actions, mostly using volume. Today, I am happy to say that we can do both: interactive live recording and real-time generated music. I will call this Hybrid Interactive Music (HIM).

Jerobeam Fenderson - Reconstruct - the audiovisual album Oscilloscope Music

Hybrid Interactive Music (HIM)?

Today, we digitize music. A live performance, a synth or sample libraries become a waveform in a file (such as an MP3 or WAV). Over the years, we gained more control and started to edit these files to create multiple sections (horizontal) and layering (vertical), such as an 'introduction', a 'loop', and an 'end loop' to gain more flexibility and musicality for games. This horizontal and/or vertical approach is still the general approach for interactive music, and, if you are curious, I can easily say that Wwise allows you to get incredible results like in Remember Me, Get Even, and many more games out there.
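To make the horizontal and vertical ideas concrete, here is a minimal sketch in plain Python. It does not use the Wwise API: the function names, the layer names ("pads", "drums"), and the `intensity` parameter are all hypothetical and chosen only for illustration.

```python
# Illustrative sketch only: plain Python, not the Wwise API.
# All names here (next_section, layer_volumes, "pads", "drums") are hypothetical.

def next_section(current, ending_requested):
    """Horizontal re-sequencing: choose which section plays after `current`."""
    if current == "introduction":
        return "loop"
    if current == "loop":
        # Stay in the loop until the game asks the music to end.
        return "end loop" if ending_requested else "loop"
    return None  # nothing follows the "end loop" section


def layer_volumes(intensity, layers=("pads", "drums")):
    """Vertical layering: fade layers in one after another as intensity rises.

    `intensity` is a game-driven value in the range 0.0..1.0.
    Returns a dict mapping each layer name to a volume in 0.0..1.0.
    """
    intensity = min(max(intensity, 0.0), 1.0)
    count = len(layers)
    volumes = {}
    for index, name in enumerate(layers):
        start = index / count  # intensity at which this layer starts fading in
        volumes[name] = min(max((intensity - start) * count, 0.0), 1.0)
    return volumes
```

In this sketch, the horizontal function decides what plays next in time, while the vertical function decides how loud each simultaneous layer is at a given moment. A real project would let the audio engine handle both.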

Wwise introduced MIDI to its engine a few years ago and, thanks to its incredible versatility, we can use it in two different ways. The first one is for playing sounds generated by Wwise. Audiokinetic provides you with some basic synths that allow you to create music in real time (nothing is recorded; the sound is generated by the processor). The second way is equally interesting: you can use Wwise as a sampler triggered by MIDI, with a lot of control (ADSR, velocity, key mapping). This is really amazing!

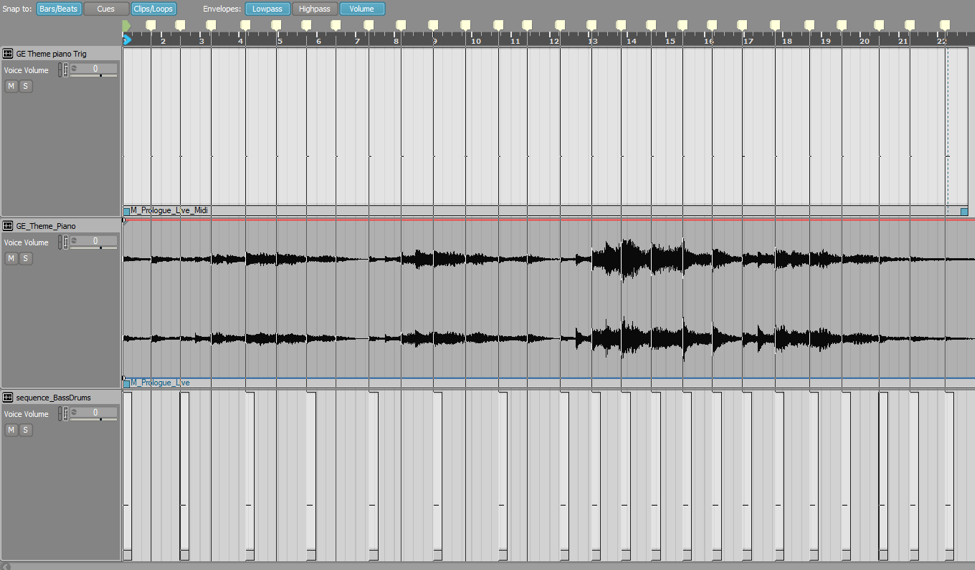

Now, what is groundbreaking in Wwise is the relationship between the prerecorded files and the MIDI. You can blend them freely in the Music Editor. This means that you can record an orchestra and, along with it, play a bass line in MIDI on an instrument based on a synth and a drum kit built as a sampler and triggered by MIDI. This is what I call Hybrid Interactive Music: real-time generated music playing alongside prerecorded music segments.

Get Even - Hybrid Interactive Music example

Why is HIM so interesting?

Let’s look at tempo. We all know that with digitized tracks we can’t change the tempo without changing the pitch (entertainment systems are not ready for real-time pitch correction). In practice, this means you can’t vary the tempo of a prerecorded track at all. But what if, from a creative standpoint, the best way to give the player feedback would be to accelerate the tempo of the music step by step depending on, for example, the distance to an objective or the player’s health status? This is super easy using MIDI with a sampler, driven by an RTPC (Real-Time Parameter Control).
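The idea of a stepped tempo driven by a game parameter can be sketched in a few lines of plain Python. This is not the Wwise API and not the setup used in Get Even: the function names, the distance range, the tempo range, and the number of steps are all assumptions made for illustration.

```python
# Illustrative sketch only: plain Python, not the Wwise API.
# The parameter names and default values below are assumptions.

def tempo_for_distance(distance, max_distance=50.0,
                       min_bpm=90.0, max_bpm=140.0, steps=5):
    """Return a tempo that rises in discrete steps as the player nears the objective."""
    # Clamp the distance, then convert it to closeness: 0.0 = far away, 1.0 = arrived.
    clamped = min(max(distance, 0.0), max_distance)
    closeness = 1.0 - clamped / max_distance
    # Quantize so the tempo moves step by step rather than continuously.
    level = int(closeness * steps)
    return min_bpm + (max_bpm - min_bpm) * level / steps


def note_times_seconds(beat_positions, bpm):
    """Convert note positions expressed in beats into seconds at the given tempo."""
    seconds_per_beat = 60.0 / bpm
    return [beat * seconds_per_beat for beat in beat_positions]
```

Because the notes are stored as positions in beats, a new tempo only changes when each sample is triggered. The samples themselves play back at their original pitch, which is why this works where stretching a prerecorded track does not.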

A second example would be the dynamics of your instruments. You can create an instrument, let’s say a 'piano', and make it play softer or louder with every note performed. It means that you can create real-time dynamics depending on your game’s parameters in a very controlled yet seamless way.
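As a rough illustration of per-note dynamics, the sketch below scales a MIDI note velocity by a game-driven value. It is plain Python rather than the Wwise API, and the `intensity` parameter, the `floor` default, and the function name are hypothetical.

```python
# Illustrative sketch only: plain Python, not the Wwise API.
# `intensity` stands in for any game parameter normalized to 0.0..1.0.

def scale_velocity(base_velocity, intensity, floor=0.4):
    """Scale a MIDI velocity so notes play softer or louder with the game state.

    `floor` is the fraction of the written velocity kept at zero intensity,
    so the instrument gets quieter without disappearing.
    """
    intensity = min(max(intensity, 0.0), 1.0)
    gain = floor + (1.0 - floor) * intensity
    # Keep the result inside the valid note-on velocity range of 1..127.
    return max(1, min(127, round(base_velocity * gain)))


# A short hypothetical piano phrase as (MIDI note number, written velocity) pairs.
phrase = [(60, 100), (64, 90), (67, 110)]
soft_phrase = [(note, scale_velocity(vel, 0.0)) for note, vel in phrase]
```

Applying the scaling note by note, rather than turning down the volume of a finished recording, is what lets a sampler respond with different dynamics on every note.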

These two examples represent a small fraction of the many possibilities Wwise offers to create Hybrid Interactive Music. You can get so creative with it that, at times, the possibilities feel endless.

Get Even - MIDI Kick Drum with Tempo variable, change of pattern and velocity depending on RTPC

But HIM was not enough for Get Even…

Now that we have discussed the technical capacities provided by Wwise, let’s get back to Get Even. As I mentioned earlier, Get Even is a VR game that is not in VR. Your character is wearing a VR device throughout the whole game. Therefore, the first question that came to me was 'How do we do music in VR?' This was really disturbing because it felt like music could not fit this new experience. Get Even gives you a very realistic feel that is grounded in the world, and music would feel like something abstract coming from nowhere.

After thinking about it for some time, I established one rule: 'Any music should start with a diegetic element and then become abstract'. This will give the players the impression that music is connected to the world and is not just a background illustration.

As a composer, I always wanted to write unexpected and meaningful colors. Each one of my soundtracks has a unique sound with a 'twist'. I love to create the unexpected to serve what a game needs. Such is the case with my use of Wwise. I love to use it in an unexpected way, to create something unique and meaningful for the game. Here I must stop and say how incredible Audiokinetic is. When they add a feature to their software, they don’t just do that to serve its unique purpose, but rather they connect it to anything else provided in Wwise. For instance, anything you are doing with Interactive Music can be connected to sound emitters in your own game. This is just amazing! I can play a music sequence using HIM into the 3D world of the game, synced with a 2D prerecorded track. It opened so many possibilities and such a meaningful approach for Get Even.

The final result

So, it was decided that every situation in the game should be supported with its unique use of music. This led me to create 17 different ways of using interactive music throughout the whole game, such as the use of diegetic sounds synced with prerecorded music, drones processed in real time by the console, drums sequenced in MIDI, going from 3D to 2D layers, even better visual links with the music, and so on. Here is a quote from The Sixth Axis review of the game: “Olivier Deriviere’s soundtrack is easily one of the best we’ve heard this year, transitioning between moments of dread, wonder, and raw emotion. Listen closely and you’ll hear how environmental noises – like Cole’s breathing, creaking floorboards, and gas pipes – intertwine with the music. It’s cleverly composed and feels baked into the game itself, instead of being layered on top like the majority of soundtracks.”

Is Hybrid Interactive Music the Future? PART II - Technical Demonstrations
