This interview was originally published on A Sound Effect

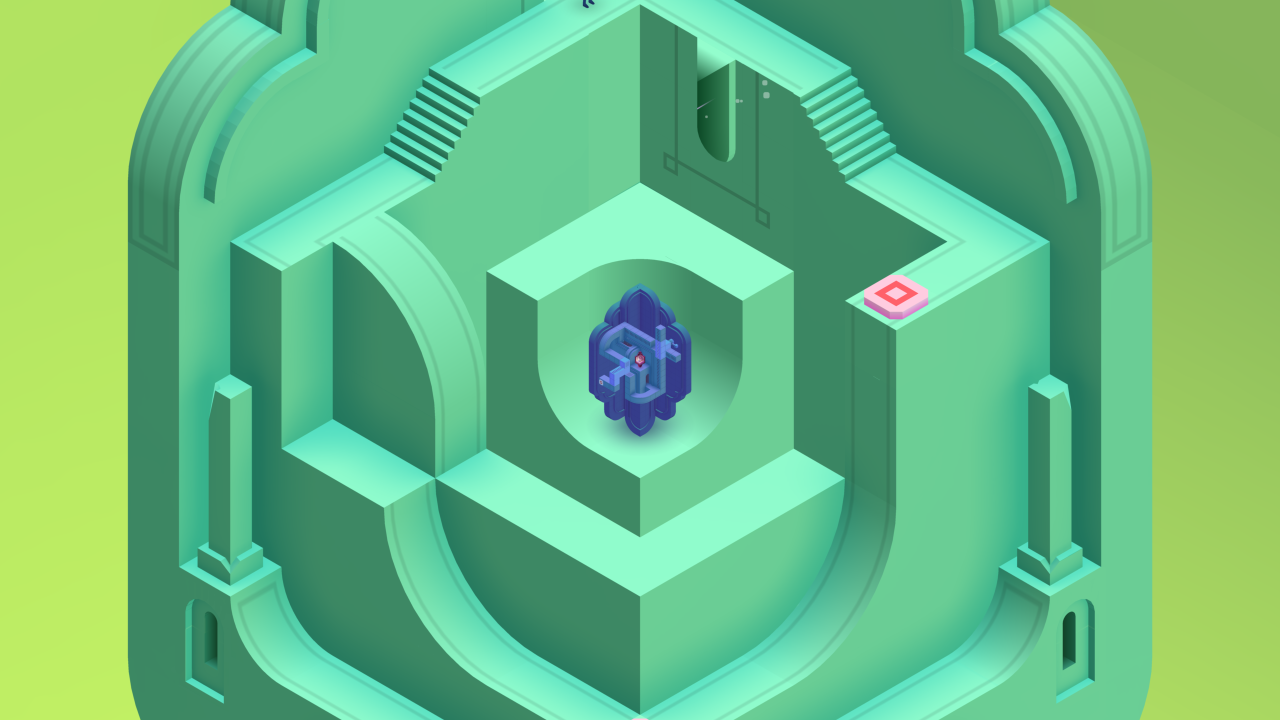

With Monument Valley 2, Ustwo Games not only offer players striking visuals and intricate architectural puzzles up to their previously established standards; they also excel in portraying a beautifully growing relationship between a mother and her daughter in a colorful, eye-catching environment. Here, sound artist Todd Baker shares how he managed to deliver the game's equally immersive soundtrack, describes the scope of his work as a one-man band working on both music and sound effects, and reveals some secrets about his success as a game audio freelancer.

Hi Todd, could you introduce yourself and describe briefly your contribution to the Ustwo games?

Hello! So I’ve been working in music and audio design for over 10 years, mainly on games and interactive projects, but also in other media, and also more recently as a music artist / band member. I worked in-house for a couple of big games studios, and switched to freelance about 5 years ago, forming close relationships with some amazing teams such as Ustwo Games, Media Molecule, Tarsier, and The Chinese Room.

I started working with Ustwo on Lands End VR, shortly after the release of the original Monument Valley. This led to the work on Monument Valley 2, for which I created all of the music and audio design.

I’ve been trying to cultivate an ‘artist’ oriented approach to my work in recent years, thinking less in terms of a jack of all trades composer / sound designer for hire, and instead seeking projects that will suit my stylistic strengths, interests, and skillset.

On Monument Valley 2, you were the only audio person working on the project, taking on both sound and music, as well as their implementation into the game. Can you describe in more detail what that actually involves?

Essentially it means taking on responsibility for creating and shaping every aspect of the game’s audio: when and where music and sounds occur, stylistic/artistic decisions, branding and trailer music, systems and solutions for implementation, memory and optimisation, recording, mixing and mastering, occasional overwhelmed panicking…

I’ve been using the word “holistic” a lot recently to describe how I approach music and audio design. To me this means thinking about the complete interactive audio experience, rather than treating music and sound design as separate disciplines. Obviously, not every game would suit this approach, but the aesthetic and scope of a project like MV2 lends itself very well to this way of working.

Taking on everything had its downsides, and towards the end of development it became an intense workload for one person. It was definitely on the limit in terms of what I could have handled. On reflection, having an extra pair of hands in the later months may have been wise – in terms of both workload and creative perspective – but it’s also amazing to see a finished game that I have such a personal investment in.

I love working within an audio team, but MV2 felt like an opportunity for me to ‘do my thing’ on a fantastic project that would reach a lot of people. Having been in the industry for a while now, I know you either have to be very lucky or work really hard to get this kind of opportunity!

Can you share your inspirations for MV2’s music and sound aesthetic, and what your main composition tools are?

As the general/high-level concept, I wanted to create a sound aesthetic that felt gentle and spacious, creating a sonic space where players would be soothed and encouraged to appreciate the beauty of the game.

Sonically it sits in between organic and electronic, and has a nostalgic, lo-fi textural edge to it. There’s a very broad palette of sound sources in the game: lots of acoustic instruments, tuned percussion, nylon string guitar, piano, gamelan, and orchestral textures (I actually learned to “play” the Low Whistle for the mother character’s flute instrument), then there’s plenty of electronic/synth/processed audio – but always with an emphasis on more organic and analog-sounding textures. As with Lands End VR, I felt it was important to steer clear of new-age cliché – particularly with the more electronic ambient sounds.

Bibio’s production style is a big reference point, as is Steve Reich’s minimalism and Brian Eno’s early ambient music. I’d also definitely point to Martin Stig Andersen’s work on Limbo and Inside, particularly in terms of the ‘holistic’ approach I mentioned earlier. I actually wrote a blog piece for Ustwo on some of the specific musical influences, which you can read here!

Reaper is pretty much my main sound design DAW and I’m using it more and more for music – but I still go back to Logic for a lot of music work. One or more of the following plug-ins would have touched every sound in the game: u-he Satin, XLN RC-20, Valhalla Plate, and FabFilter Pro-Q 2.

Did you do anything sonically to try and represent the underlying architectural illusions which form the basis of the game? Or the relationship between mother and daughter that is illustrated? In other words, how did you translate some of that narrative into sound?

Storytelling is the most exciting aspect of a project to me (nowadays, so much more than fiddling around with audio toys, instruments, and such!) Being immersed in places, characters, and narratives is what makes an experience meaningful and leaves a lasting impression.

I’m glad MV2 focused on a more defined story structure than the original game, as it gave me the opportunity to shape the audio experience around this journey of the mother and child. It’s a simple story and is presented in a fairly impressionistic and abstract way, but this is really a sweet spot for sound and music to play their part – with a lot of freedom.

There are some recurring melodic motifs and sonic themes that run throughout the game (tied to particular places and key moments), but I don’t feel this is anything particularly sophisticated or meticulously thought out. The working style of the Ustwo team made this practically impossible. (A bit more on this in the next question!)

The main focus for me was to provide an emotional guide for the player, supporting the narrative journey and balancing the contrast from level to level. I wanted to be broad-stroked and impressionistic in the spirit of the art and design, but I think the music and audio also help to add a level of unity to the aesthetic. In the final stages of development, members of the team noted how the audio was acting as a kind of glue or backbone to an experience where art and design was characterised by the contrasting styles of the different artists working on the game.

While working as an outsourcer with Ustwo Games, how did you manage the relationship with the studio?

I think I have quite an unusual audio set-up, at least in the sense that I’m very portable and have a variety of environments that I work in. I divide my time between working from my studio (a professional recording / monitoring environment), the Ustwo studio, and also a home setup.

In some ways Ustwo Games are incredibly easy to work with. The level of autonomy, trust, respect, and support I had from the team was amazing. But there’s also a very spontaneous, iterative (occasionally chaotic and unstructured!) side to their process that can make any kind of planning difficult. For example, over the course of the 18-month development, the characters and story outline were not really locked down until the last 10 weeks, and the final level of the game didn’t even exist about 3 weeks before we went live on the app store.

On one hand the freedom and flexibility is great, but the lack of structure and reluctance to lock down design at an earlier stage makes it difficult to realise detailed and nuanced ideas with the audio. The key was to really embrace the spontaneity. Many of the audio moments that fans have noted as favourites were ideas that came together very quickly, when I had no time to over-intellectualise and was simply bouncing off inspiration from the art and design work.

Ustwo is always keen to show games in a different light by challenging perceptions of the medium and reaching out to a wider audience. I certainly share this passion. One of the recent post-launch events was a live session at London’s Victoria & Albert museum, where we performed arrangements of some of the MV2 soundtrack live with my band.

From a technical point of view, what audio engine did you use and did you have an audio programmer in the studio helping you? What were your most important technical challenges?

We were using Wwise with Unity. For a project like this, I think it’s reasonable to say that Wwise is absolutely essential, and I can’t imagine how it would have been possible without it. It empowers me with the tools and insights I need to do my job.

The game’s technical director Manesh Mistry has a background in music technology, and his role in championing adequate support for audio (as well as licensing Wwise) was a huge benefit to the project. Ustwo is a young studio and we’ve worked hard to cultivate a healthy attitude towards audio – it’s great to see this being embraced.

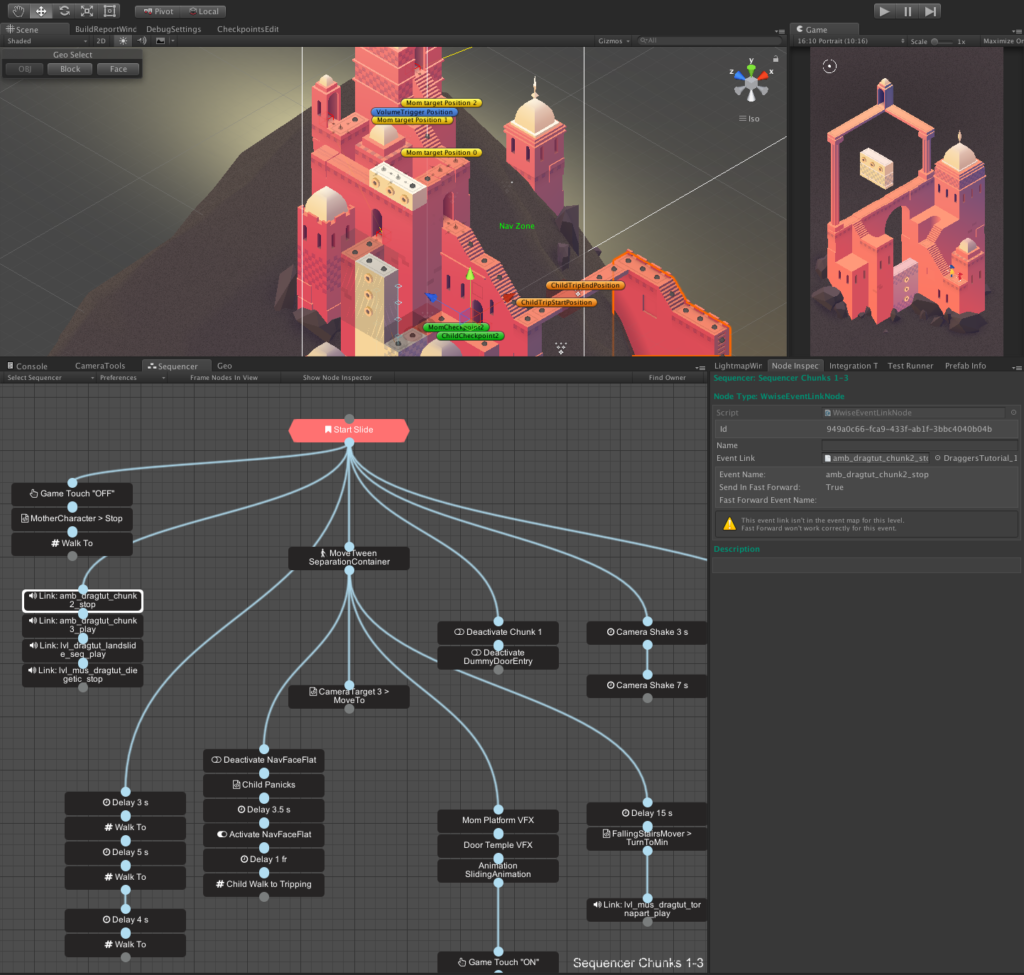

On the Unity side, the programmers put a lot of work into making some excellent bespoke tools for building levels – including a very elegant visual scripting tool for sequencing events and gameplay. We implemented audio functionality directly into these tools, which made it easy for me to plug in my Wwise Event hooks and parameters.

On an aesthetically focussed project like MV2, when it comes to implementation I’m keen to keep things simple where possible – particularly with Wwise at my disposal. I’m happy to sacrifice some flexibility/power in a system if it allows me to get audio in easily and quickly, allowing more time to focus on the creative side and making content.

What is your asset creation approach? Do you record a lot of material which you then implement wherever fits best, or do you plan ahead and compose specifically for the various sections of the game? Roughly how many iterations do you make before being satisfied with the result?

I really don’t have a fixed approach or methodology, and try to constantly adapt to the needs of the project. As mentioned previously, embracing spontaneity was a necessity with MV2, and often iteration wasn’t even an option.

A very cool thing we did early in development was to make an entire album of concept music for the game in just a couple of weeks. (We even released this digitally under a pseudonym, as a bit of an experiment!) I went into the studio and put down a bunch of ideas, so that we had something to feed into the early concepts that were happening in the design and art. A few of those tracks were adapted to fit into the final game and it proved to be a really useful resource and process.

Can you share some tips on successfully achieving dynamic music and non-linearity? Since MV2 is a puzzle-based game, players can spend any amount of time within the same level – from a few seconds to long minutes. What are your techniques to avoid the music becoming repetitive?

Here’s a breakdown of the audio in one of the ‘chunks’ (sublevels) of MV2:

- A minimal guitar piece plays as the main ambient backdrop.

- The interactive rotating platforms have playful musical layers attached to their movement – as do the two buttons that move the geometry around.

- After a short while the mother character starts to play her flute (which triggers random phrases in a sympathetic musical key).

- As each phase of the puzzle completes, you get a musical sting.

- The global navigation ‘ping’ that confirms the character movement also has its own randomised hang drum note (that is tuned sympathetically to each level in the game).

An example like the above elaborates on the holistic approach I mentioned earlier. There’s a mixture of diegetic, non-diegetic, ambient, and interactive elements all working together to form the audio experience.
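To make the randomised, level-tuned navigation ping from the list above a little more concrete, here is a minimal Python sketch of the general idea. It is not the game's implementation (the interview says the audio was built with Wwise inside Unity, and gives no details of how the notes were chosen). The scale values, level names, and the `PingNotePicker` class are all hypothetical, and avoiding immediate repeats is an added assumption rather than something stated in the interview.

```python
import random

# Hypothetical per-level scales as MIDI note numbers (illustrative values only,
# not taken from the game).
LEVEL_SCALES = {
    "level_a": [62, 64, 66, 69, 71],  # D major pentatonic
    "level_b": [57, 60, 62, 64, 67],  # A minor pentatonic
}


class PingNotePicker:
    """Picks a random note from a level's scale, never repeating the last note."""

    def __init__(self, scale, rng=None):
        if len(scale) < 2:
            raise ValueError("scale needs at least two notes to avoid repeats")
        self.scale = list(scale)
        self.rng = rng or random.Random()
        self.last = None

    def next_note(self):
        # Exclude the previously played note so two taps never sound identical.
        candidates = [note for note in self.scale if note != self.last]
        self.last = self.rng.choice(candidates)
        return self.last


if __name__ == "__main__":
    picker = PingNotePicker(LEVEL_SCALES["level_a"])
    print([picker.next_note() for _ in range(8)])
```

Because every note comes from a scale chosen to sit with the level's music, any sequence of taps stays consonant with the backdrop, however quickly or slowly the player moves.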

I felt it was important to not overuse musical interactions, and place them at points where we wanted to encourage the player to take a moment to be playful. There are also a lot of interactive objects that have more subtle musical layers, and I would expect many players not to notice the tonality – but hopefully it helps add to the overall feeling of a tactile world.

As an audio designer and composer for mobile games, what was your strategy for mixing? How would you compare working on a mobile game vs. other platforms?

I always mix as I go along, and do a lot of A/B comparison between levels, occasionally profiling the levels in Wwise to monitor the overall headroom and make sure things are fairly consistent.

I’ve worked on many console projects and really there’s no reason why we should treat mobile differently. Obviously, it’s mixing in stereo, and the best-case scenario is that the player will listen on headphones or maybe connected to a Bluetooth system. The usual considerations apply: dynamic range and overall master level are really a creative decision to be made according to the needs of a project. I did a bit of testing on phone and tablet speakers towards the end, which are getting surprisingly good.

I’m cautious of treating ‘mobile games’ as a category. Clearly considerations for something like Monument Valley are different from a game like Candy Crush or CSR Racing. Not to mention that our mobile devices are clearly destined to become consoles when connected to a display and controller.

A parting thought: We actually managed to get a Unity analytics hook into the game that detected (upon completing each level) whether or not the player had headphones plugged in.

This felt a bit like opening Pandora’s box… would the results reveal the worst possible statistic for negotiating the next audio budget? Or would we delight at all the audio-loving listeners using their trendy Skullcandys?

Rather than revealing the results here, I’d be fascinated to ask people to take a guess in the comments below (% of people that played the game with headphones) and I’ll reveal the answer after we’ve seen a few!
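For readers curious how a statistic like this could be derived from the per-level headphone check described above, here is a small Python sketch. It is purely illustrative: the real hook used Unity analytics and its event format is not described in the interview, so the `LevelCompleted` event and the `headphone_share` function are hypothetical names. Note that this version counts level completions rather than unique players.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LevelCompleted:
    """Hypothetical analytics event recorded when a player finishes a level."""

    level_id: str
    headphones_connected: bool


def headphone_share(events):
    """Return the percentage of level completions made with headphones.

    Returns None when there are no events, rather than dividing by zero.
    """
    events = list(events)
    if not events:
        return None
    with_headphones = sum(1 for event in events if event.headphones_connected)
    return 100.0 * with_headphones / len(events)
```

Grouping the same events by a player identifier before averaging would answer the slightly different question of what share of players used headphones.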


Comments

Gary Hiebner

January 16, 2018 at 03:11 pm

I'm guessing the percentage was quite high that users WERE using headphones.

Kenny Young

January 17, 2018 at 03:07 am

83.7%!

Egil Sandfeld

January 17, 2018 at 03:26 am

Great article and interesting insight into how others go around :) My guess would be that a rather low amount of users use headphones for casual gaming. I would put it at around 37%

Andy Grier

July 11, 2018 at 05:47 am

Higher than most mobile titles for sure. I'm going to take a guess around 71% headphones, 27% speakers and a small (but frustrating) 2% of 'sound was off or they smashed device music over the top'. I really hope it's higher than my predictions though!